Microsoft MIND Data: A Machine Learning Recommendation Engine


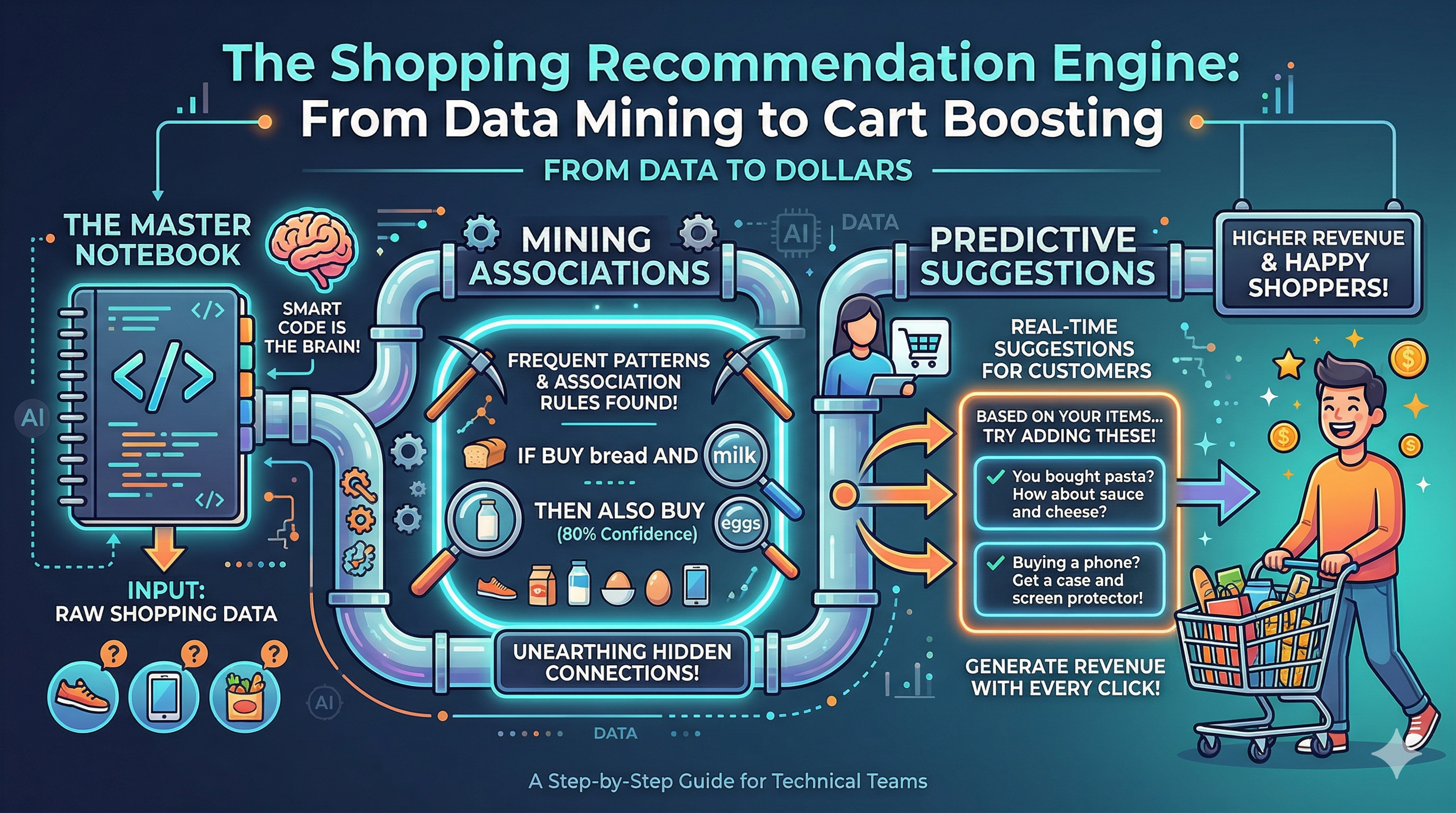

In this post, we build a series of recommendation engines for the Microsoft MIND dataset, starting from popular heuristic strategies and ending with a combination of machine learning rankers.

📰 News Data: Microsoft MIND — Two-Stage Generate-&-Rerank News Recommendation Engine

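MIND (MIcrosoft News Dataset) is a widely used benchmark for news recommendation, released by Microsoft Research. The full dataset covers about 1 million users, more than 160 K English news articles, and over 15 million impression logs collected from MSN News over six weeks in October and November 2019. This post uses the MIND-small subset, whose train and dev splits each sample 50,000 users and together reference about 65 K articles.

In this post we follow the two-stage generate-and-rerank paradigm used in large-scale recommendation systems: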

| Stage | What it does |
|---|---|
| Stage 1: Retrieval | Cast a wide net: merge candidates from popularity, category-affinity, item-CF, and recency signals |
| Stage 2: Ranking | Re-score every candidate with a LightGBM meta-ranker that sees retriever membership, base scores, and rich user/article features |
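The two stages in the table can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the notebook's implementation: `merge_candidates`, `rerank`, the toy retrievers, and the toy scores are all hypothetical names and values introduced only for this example.

```python
from typing import Callable, Dict, List

# A retriever maps (user_id, n) to an ordered list of article IDs.
Retriever = Callable[[str, int], List[str]]


def merge_candidates(user_id: str, retrievers: Dict[str, Retriever],
                     n_per_source: int, pool_size: int) -> List[str]:
    """Stage 1: union the retrievers' outputs, keeping first-seen order."""
    merged: List[str] = []
    for retrieve in retrievers.values():
        merged.extend(retrieve(user_id, n_per_source))
    # dict.fromkeys drops duplicates while preserving insertion order
    return list(dict.fromkeys(merged))[:pool_size]


def rerank(candidates: List[str], score_fn: Callable[[str], float]) -> List[str]:
    """Stage 2: sort the pooled candidates by a model score, best first."""
    return sorted(candidates, key=score_fn, reverse=True)


# Hypothetical toy retrievers and scores, for illustration only
toy_retrievers = {
    "popularity": lambda uid, n: ["N1", "N2", "N3"][:n],
    "category": lambda uid, n: ["N3", "N4"][:n],
}
toy_scores = {"N1": 0.10, "N2": 0.05, "N3": 0.40, "N4": 0.30}

pool = merge_candidates("U1", toy_retrievers, n_per_source=3, pool_size=200)
ranking = rerank(pool, lambda a: toy_scores.get(a, 0.0))
print(pool)     # ['N1', 'N2', 'N3', 'N4']
print(ranking)  # ['N3', 'N4', 'N1', 'N2']
```

In the real pipeline the score function would be a trained ranker rather than a lookup table; the point here is only the separation between a cheap, recall-oriented merge and a more expensive, precision-oriented sort.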


🗺️ System Blueprint — How It All Fits Together

Before diving into the code, here’s a bird’s-eye view of the entire two-stage pipeline you’ll build in this notebook:

┌──────────────────────────────────────────────────────────────────────────────┐
│                       MIND News Recommendation Engine                        │
│                                                                              │
│  ┌──────────┐    ┌──────────┐    ┌────────────────────────────────────────┐  │
│  │   Raw    │    │  EDA &   │    │             FEATURE STORE              │  │
│  │   Data   │───▶│  Stats   │───▶│ user_stats · article_feat              │  │
│  │(MIND TSV)│    │ (Sec 2)  │    │ user_cat_affinity · TF-IDF centroids   │  │
│  └──────────┘    └──────────┘    └───────────────────┬────────────────────┘  │
│                                                      │                       │
│  ┌───────────────────────────────────────────────────▼────────────────────┐  │
│  │                       STAGE 1: RETRIEVAL (Sec 8)                       │  │
│  │                                                                        │  │
│  │    S1 Popularity    S2 Category    S3 Item-CF    S4 Temporal Taste     │  │
│  │          ↓               ↓              ↓                ↓             │  │
│  │                          MERGE & DEDUPLICATE                           │  │
│  │               200-candidate pool (Recall@200 diagnostic)               │  │
│  └───────────────────────────────────────────────────┬────────────────────┘  │
│                                                      │                       │
│  ┌───────────────────────────────────────────────────▼────────────────────┐  │
│  │                       STAGE 2: RERANKING (Sec 9)                       │  │
│  │                                                                        │  │
│  │    Base LightGBM (LambdaMART, SET_A)   ──▶ OOF scores                  │  │
│  │    Meta-LGB (extended features, SET_B) ──▶ S6                          │  │
│  │    XGBoost ensemble blend              ──▶ S7                          │  │
│  └───────────────────────────────────────────────────┬────────────────────┘  │
│                                                      │                       │
│  ┌───────────────────────────────────────────────────▼────────────────────┐  │
│  │                         EVALUATION (Sec 10–12)                         │  │
│  │         Precision · Recall · F1 · NDCG · Hit-Rate @ K=5 & K=10         │  │
│  └────────────────────────────────────────────────────────────────────────┘  │
└──────────────────────────────────────────────────────────────────────────────┘

Notebook Roadmap

| Section | Focus | Key Output |
|---|---|---|
| §1 Setup | Load MIND-small ZIPs | all_interactions, news DataFrames |
| §2 EDA | Statistical exploration | 8 visualisations, CTR & sparsity stats |
| §3 Features | Engineer 4 feature tables | user_stats, article_feat, user_cat_affinity, imp_train_df |
| §4 Item-CF | Sparse co-click similarity | item_sim_lookup (top-50 neighbours per article) |
| §5 Temporal | Recency-weighted taste | temporal_taste_matrix with 7-day half-life decay |
| §6 Eval + S1–S5 | Baseline strategies | Metrics for 5 retrieval/simple-rank methods |
| §7 Cold-start gate | Handle zero-history users | Binary cold/warm routing logic |
| §8 Stage 1 | Candidate pool fusion | 200 candidates, Recall@200 diagnostic |
| §9 Stage 2 | Meta-ranker training | meta_lgb model with enriched features |
| §10–12 | Full benchmark | S1→S7 leaderboard, lift metrics |
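To make the "sparse co-click similarity" row concrete, here is a small pure-Python sketch of one common way to build an item-to-item lookup: cosine similarity over co-click counts. It is an assumption about the general technique, not the notebook's §4 code; `cooccurrence_similarity` and the toy click data are hypothetical, and only the output shape (a list of `(neighbour, similarity)` pairs per article) mirrors how `item_sim_lookup` is consumed elsewhere in this post.

```python
import math
from collections import defaultdict
from itertools import combinations


def cooccurrence_similarity(user_clicks, top_n=50):
    """Cosine similarity between articles based on which users clicked both.

    user_clicks: dict mapping user ID -> iterable of clicked article IDs.
    Returns: dict mapping article ID -> list of (neighbour, similarity),
             sorted by similarity, truncated to top_n neighbours.
    """
    click_counts = defaultdict(int)   # number of users who clicked each article
    co_counts = defaultdict(int)      # number of users who clicked both articles

    for clicked in user_clicks.values():
        items = sorted(set(clicked))
        for a in items:
            click_counts[a] += 1
        for a, b in combinations(items, 2):
            co_counts[(a, b)] += 1

    neighbours = defaultdict(list)
    for (a, b), co in co_counts.items():
        # Cosine similarity for binary click vectors
        sim = co / math.sqrt(click_counts[a] * click_counts[b])
        neighbours[a].append((b, sim))
        neighbours[b].append((a, sim))

    return {a: sorted(nbrs, key=lambda x: -x[1])[:top_n]
            for a, nbrs in neighbours.items()}


# Hypothetical toy data: three users, three articles
toy_clicks = {"U1": ["N1", "N2"], "U2": ["N1", "N2", "N3"], "U3": ["N3"]}
sim_lookup = cooccurrence_similarity(toy_clicks)
print(sim_lookup["N1"])  # [('N2', 1.0), ('N3', 0.5)]
```

The pairwise loop is quadratic in the number of clicks per user, so a real implementation on MIND would more plausibly use a sparse user-by-article matrix; the similarity definition stays the same.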

Reading tip: Each section opens with a 📖 callout explaining the why before the code shows the how.
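The roadmap mentions a recency-weighted taste profile with a 7-day half-life. As a rough sketch of what that decay means (an assumption about the general idea, not the notebook's §5 code), a click that is `age_days` old can be weighted by `0.5 ** (age_days / 7)`. The helper names `decay_weight` and `taste_vector` and the sample clicks are hypothetical.

```python
from collections import defaultdict

HALF_LIFE_DAYS = 7.0
SECONDS_PER_DAY = 86_400


def decay_weight(click_ts, now_ts, half_life_days=HALF_LIFE_DAYS):
    """Weight of a click: 1.0 when fresh, 0.5 after one half-life, 0.25 after two."""
    age_days = max(0.0, (now_ts - click_ts) / SECONDS_PER_DAY)
    return 0.5 ** (age_days / half_life_days)


def taste_vector(clicks, now_ts):
    """clicks: iterable of (category, unix_timestamp) pairs.

    Returns category weights that sum to 1, with recent clicks counting more.
    """
    totals = defaultdict(float)
    for category, ts in clicks:
        totals[category] += decay_weight(ts, now_ts)
    norm = sum(totals.values()) or 1.0
    return {c: w / norm for c, w in totals.items()}


now = 1_573_600_000  # arbitrary reference time (Unix seconds)
clicks = [
    ("sports", now),                           # clicked just now
    ("finance", now - 7 * SECONDS_PER_DAY),    # one half-life ago
    ("finance", now - 14 * SECONDS_PER_DAY),   # two half-lives ago
]
print(decay_weight(now - 7 * SECONDS_PER_DAY, now))  # 0.5
print(taste_vector(clicks, now))
```

With these toy clicks, one fresh sports click (weight 1.0) outweighs two older finance clicks (0.5 + 0.25), which is the behaviour a recency-weighted profile is meant to capture.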

1. Setup & data loading

📖 Dataset & Problem Framing

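The data: MIND-small samples 50,000 users per split (94,057 distinct users across train and dev in this notebook) from logs spanning six weeks (Oct 12–Nov 22, 2019). Expanding the impression lists yields about 8.6 M (user, article, label) rows. Each impression records a user session: the articles shown, which ones were clicked (label=1) or ignored (label=0), and the user’s recent click history.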

| File | Key columns | Role |
|---|---|---|
| behaviors.tsv | ImpressionId, UserId, Time, History, Impressions | Primary signal: click/no-click |
| news.tsv | NewsId, Category, SubCategory, Title, Abstract | Article metadata |

Task framing. Given a user’s click history, rank candidate news articles so that clicked articles appear at the top. We evaluate with ranking metrics (Precision@K, Recall@K, NDCG@K, Hit-Rate@K).
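As a worked example of these metrics on a single impression, the sketch below scores one toy ranking with binary relevance (clicked = 1, not clicked = 0). The function name `ranking_metrics` and the toy article IDs are illustrative assumptions; the formulas follow the standard definitions of Precision@K, Recall@K, NDCG@K and Hit-Rate@K.

```python
import math


def ranking_metrics(ranked, clicked, k):
    """Precision, recall, NDCG and hit-rate at K for one ranked list."""
    hits = [1 if article in clicked else 0 for article in ranked[:k]]
    precision = sum(hits) / k
    recall = sum(hits) / len(clicked) if clicked else 0.0
    # DCG discounts a hit at 0-based position i by log2(i + 2)
    dcg = sum(h / math.log2(i + 2) for i, h in enumerate(hits))
    # Ideal DCG: all clicked articles packed at the top of the list
    ideal = sum(1 / math.log2(i + 2) for i in range(min(len(clicked), k)))
    ndcg = dcg / ideal if ideal else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "ndcg": ndcg,
        "hit_rate": 1 if any(hits) else 0,
    }


# Toy impression: five candidates in ranked order, two of them clicked
ranked = ["N3301", "N2201", "N4412", "N1001", "N1087"]
clicked = {"N2201", "N1087"}

metrics = ranking_metrics(ranked, clicked, k=5)
print({name: round(value, 4) for name, value in metrics.items()})
# {'precision': 0.4, 'recall': 1.0, 'ndcg': 0.6241, 'hit_rate': 1}
```

Both clicked articles are retrieved (recall 1.0), but because they sit at positions 2 and 5 rather than 1 and 2, NDCG drops to about 0.62. That sensitivity to position is why NDCG is the more informative number for a re-ranking task.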

Train/test split strategy. MIND provides an official train split and a dev (validation) split. We use train behaviors for all model fitting and dev behaviors as the held-out test set, preserving the temporal ordering of the original benchmark.

Implicit feedback. Unlike star-ratings, every click is a positive signal (label = 1); every article shown but not clicked is a negative (label = 0). We treat clicks as our “liked” items throughout.

🔍 Data Schema at a Glance

behaviors.tsv — one row per user session (impression):

| ImpressionId | UserId | Time | History | Impressions |
|---|---|---|---|---|
| imp-1234 | U5678 | 10/15/2019 8:32:01 AM | N1001 N1087 N2334 … (past click IDs) | N3301-1 N2201-0 N4412-0 … (candidate-label pairs) |

Each entry in Impressions is newsId-label where label=1 means clicked, label=0 means skipped. This is the core supervision signal.
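A minimal sketch of how one `Impressions` string splits into candidates and clicks is shown below. The helper name `parse_impressions` is hypothetical; the sample string is the one from the schema above.

```python
def parse_impressions(impressions):
    """Split 'N3301-1 N2201-0 ...' into a list of (news_id, label) pairs."""
    pairs = []
    for token in impressions.split():
        # rsplit guards against any hyphen that might appear inside an ID
        news_id, label = token.rsplit("-", 1)
        pairs.append((news_id, int(label)))
    return pairs


sample = "N3301-1 N2201-0 N4412-0"
pairs = parse_impressions(sample)

candidates = [news_id for news_id, _ in pairs]           # everything shown
clicked = {news_id for news_id, label in pairs if label == 1}  # positives

print(pairs)       # [('N3301', 1), ('N2201', 0), ('N4412', 0)]
print(candidates)  # ['N3301', 'N2201', 'N4412']
print(clicked)     # {'N3301'}
```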

news.tsv — one row per article:

| newsId | category | subCategory | title | abstract |
|---|---|---|---|---|
| N1001 | Sports | NFL | "Eagles defeat Cowboys 31-14" | "The Philadelphia Eagles …" |
| N1087 | Finance | Stocks | "Apple earnings beat Q3" | "Apple Inc. reported …" |

Key insight: The recommendation task is session-level re-ranking, not global ranking. For each impression, you rank the candidate articles shown in that session (about 38 on average in the evaluation impressions built below), using the user’s click history as context.

# Install (if needed) and import libraries
import subprocess, sys

for pkg in ['lightgbm', 'xgboost', 'scikit-learn']:
    subprocess.run([sys.executable, '-m', 'pip', 'install', '-q', pkg], check=True)

import gc, os, re, time, warnings, zipfile
from collections import Counter, defaultdict
from datetime import datetime

import matplotlib.patches as mpatches
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import seaborn as sns
import lightgbm as lgb
from joblib import Parallel, delayed
from scipy.sparse import csr_matrix
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics.pairwise import cosine_similarity
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import normalize
from xgboost import XGBClassifier

from google.colab import drive  # Colab-only: used to mount Google Drive

warnings.filterwarnings('ignore')
plt.style.use('seaborn-v0_8-whitegrid')
sns.set_palette('husl')
pd.set_option('display.float_format', '{:.4f}'.format)
pd.set_option('display.max_columns', None)
print('✅ Libraries loaded')

✅ Libraries loaded

# Connect to data

drive.mount('/content/drive')

Mounted at /content/drive

# Parse behaviors.tsv and expand each impression into (user, article, label, timestamp) rows
def parse_behaviors_from_zip(zip_path, inner_path):
    # BEH_COLS is defined in the data-loading cell below, before this function is first called
    with zipfile.ZipFile(zip_path, 'r') as z:
        with z.open(inner_path) as f:
            raw = pd.read_csv(f, sep='\t', header=None, names=BEH_COLS)
    raw['time'] = pd.to_datetime(raw['time'], format='%m/%d/%Y %I:%M:%S %p')
    # Unix seconds; assumes the column is stored as datetime64[ns]
    raw['ts'] = raw['time'].astype('int64') // 10**9
    rows = []
    for _, r in raw.iterrows():
        uid = r['userId']
        ts = r['ts']
        if pd.notna(r['impressions']):
            for pair in str(r['impressions']).split():
                nid, lbl = pair.rsplit('-', 1)
                rows.append((uid, nid, int(lbl), ts))
    df = pd.DataFrame(rows, columns=['userId', 'newsId', 'clicked', 'timestamp'])
    return df, raw

# Ranking metrics (binary relevance) and the evaluation loop
def precision_at_k(recs, true_set, k):
    return len(set(recs[:k]) & true_set) / k if k else 0.0

def recall_at_k(recs, true_set, k):
    return len(set(recs[:k]) & true_set) / len(true_set) if true_set else 0.0

def f1_at_k(recs, true_set, k):
    p = precision_at_k(recs, true_set, k)
    r = recall_at_k(recs, true_set, k)
    return 2 * p * r / (p + r) if (p + r) > 0 else 0.0

def ndcg_at_k(recs, true_set, k):
    dcg = sum(1 / np.log2(i + 2) for i, m in enumerate(recs[:k]) if m in true_set)
    ideal = sum(1 / np.log2(i + 2) for i in range(min(len(true_set), k)))
    return dcg / ideal if ideal else 0.0

def score_recs(recs, true_set, K):
    return {'precision': precision_at_k(recs, true_set, K),
            'recall'   : recall_at_k(recs, true_set, K),
            'f1'       : f1_at_k(recs, true_set, K),
            'ndcg'     : ndcg_at_k(recs, true_set, K),
            'hit_rate' : 1 if any(m in true_set for m in recs[:K]) else 0}

def evaluate_strategy(score_fn, eval_df, K=10, n=None):
    # score_fn(uid, candidates) -> candidates sorted best-first
    rows = eval_df if n is None else eval_df.sample(n=n, random_state=100)
    m = {k: [] for k in ('precision', 'recall', 'f1', 'ndcg', 'hit_rate')}
    for _, row in rows.iterrows():
        recs = score_fn(row['userId'], row['imp_candidates'])
        s = score_recs(recs, row['true_items'], K)
        for k in m:
            m[k].append(s[k])
    result = {k: float(np.mean(v)) for k, v in m.items()}
    # Composite = mean(NDCG, Hit-Rate); F1 is left out so precision and recall are not double-counted
    result['composite'] = float(np.mean([result['ndcg'], result['hit_rate']]))
    return result

metric_keys = ['precision', 'recall', 'f1', 'ndcg', 'hit_rate']

# EDA helpers
def parse_history_length(raw_df):
    raw_df = raw_df.copy()
    raw_df['history_len'] = raw_df['history'].fillna('').apply(lambda h: len(str(h).split()) if str(h).strip() else 0)
    return raw_df.groupby('userId')['history_len'].max()

def daily_agg(df, split_label):
    tmp = df.copy()
    tmp['date'] = pd.to_datetime(tmp['timestamp'], unit='s').dt.date
    tmp['split'] = split_label
    return (tmp.groupby(['date', 'split'])
               .agg(impressions=('clicked', 'count'), clicks=('clicked', 'sum'))
               .reset_index()
               .assign(ctr=lambda d: d['clicks'] / d['impressions']))

# Drop articles the user already clicked in the training split
def _filter_seen(article_list, uid):
    seen = _seen_cache.get(uid, set())
    return [a for a in article_list if a not in seen]

# Stage-1 retrieval strategies (S1–S4): each returns up to N unseen article IDs
def s1_popularity(uid, N=50):
    return _filter_seen(POPULARITY_POOL, uid)[:N]

def s2_category(uid, N=50):
    if uid not in user_cat_affinity.index:
        return s1_popularity(uid, N)
    uvec = user_cat_affinity.loc[uid].values.astype('float32')
    uvec_n = uvec / (np.linalg.norm(uvec) + 1e-9)
    scores = article_cat_norm @ uvec_n
    ranking = np.argsort(-scores)
    ordered = [article_cat_idx[i] for i in ranking]
    return _filter_seen(ordered, uid)[:N]

def s3_itemcf(uid, N=50):
    clicked = list(user_click_sets.get(uid, []))
    if not clicked:
        return s1_popularity(uid, N)
    score_acc = defaultdict(float)
    # Note: if user_click_sets stores unordered sets, this slice is an arbitrary
    # sample of up to 20 clicks rather than the 20 most recent ones
    for aid in clicked[-20:]:
        for n_aid, sim in item_sim_lookup.get(aid, [])[:30]:
            score_acc[n_aid] += sim
    seen = _seen_cache.get(uid, set())
    ranked = sorted(score_acc.items(), key=lambda x: -x[1])
    filtered = [a for a, _ in ranked if a not in seen]
    if len(filtered) < N:
        # Backfill with popular articles, skipping any that are already ranked
        ranked_set = set(filtered)
        filtered += [a for a in _filter_seen(POPULARITY_POOL, uid) if a not in ranked_set][:N]
    return filtered[:N]

def s4_temporal(uid, N=50):
    if uid not in user_taste_norm.index:
        return s1_popularity(uid, N)
    tvec = user_taste_norm.loc[uid].values.astype('float32')
    scores = article_cat_taste_norm @ tvec
    ranking = np.argsort(-scores)
    ordered = [taste_article_idx[i] for i in ranking]
    return _filter_seen(ordered, uid)[:N]

# TF-IDF affinity between a user's click-history centroid and an article
def tfidf_affinity(uid, aid):
    '''Cosine sim: user click-history TF-IDF centroid vs article.'''
    centroid = user_tfidf_centroids.get(uid)
    if centroid is None:
        return 0.0
    i = tfidf_idx.get(aid, -1)
    if i < 0:
        return 0.0
    return float(tfidf_mat[i].dot(centroid))

def recent_tfidf_affinity(uid, aid):
    '''Cosine sim using centroid of user recent 20 clicks only.'''
    centroid = user_recent_tfidf_centroids.get(uid)
    if centroid is None:
        return 0.0
    i = tfidf_idx.get(aid, -1)
    if i < 0:
        return 0.0
    return float(tfidf_mat[i].dot(centroid))

# Evaluation scoring functions: re-rank the candidates of one impression, best first
def s1_score(uid, candidates):
    return sorted(candidates, key=lambda a: -float(pop_stats.loc[a, 'bayesian_ctr'] if a in pop_stats.index else 0))

def s2_score(uid, candidates):
    if uid not in user_cat_affinity.index:
        return s1_score(uid, candidates)
    uvec = user_cat_affinity.loc[uid].values.astype('float32')
    uvec /= np.linalg.norm(uvec) + 1e-9
    def _s(a):
        i = art_pos.get(a, -1)
        return float(article_cat_norm[i] @ uvec) if i >= 0 else 0.0
    return sorted(candidates, key=lambda a: -_s(a))

def s3_score(uid, candidates):
    clicked = list(user_click_sets.get(uid, []))
    if not clicked:
        return s1_score(uid, candidates)
    score_acc = defaultdict(float)
    for aid in clicked[-20:]:
        for n_aid, sim in item_sim_lookup.get(aid, [])[:30]:
            score_acc[n_aid] += sim
    return sorted(candidates, key=lambda a: -score_acc.get(a, 0))

def s4_score(uid, candidates):
    if uid not in user_taste_norm.index:
        return s1_score(uid, candidates)
    tvec = user_taste_norm.loc[uid].values.astype('float32')
    # Use taste_pos dict (O(1)) instead of list.index() (O(n))
    def _s(a):
        i = taste_pos.get(a, -1)
        return float(article_cat_taste_norm[i] @ tvec) if i >= 0 else 0.0
    return sorted(candidates, key=lambda a: -_s(a))

def _build_feature_matrix(uid, candidates, s2_vec, s4_vec):
    '''Build the full FEATURE_COLS-aligned matrix for all impression candidates.
    Includes within-impression context signals (ctr_norm_rank, imp_size).'''
    n = len(candidates)
    u_cc = float(us_click_count.get(uid, 0))
    u_cf = float(us_click_freq.get(uid, 0))
    ctrs = np.array([af_bayesian_ctr.get(a, 0) for a in candidates], dtype='float32')
    # Rank of each candidate by smoothed CTR within the impression, scaled to [0, 1]
    ctr_norm_rank = np.argsort(np.argsort(-ctrs)).astype('float32') / max(1, n - 1)
    rows = []
    for k, a in enumerate(candidates):
        ai = art_pos.get(a, -1)
        ti = taste_pos.get(a, -1)
        subc = newsid_to_subcat.get(a)
        rows.append([
            u_cc,
            u_cf,
            float(af_log_clicks.get(a, 0)),
            float(af_log_impr.get(a, 0)),
            float(af_article_len.get(a, 0)),
            float(s2_vec[ai]) if ai >= 0 else 0.0,
            float(s4_vec[ti]) if ti >= 0 else 0.0,
            tfidf_affinity(uid, a),
            recent_tfidf_affinity(uid, a),
            float(af_article_age.get(a, 0)),
            float(ctr_norm_rank[k]),
            float(n),
            float(user_subcat_clicks.get((uid, subc), 0)) if subc else 0.0,
        ])
    return np.array(rows, dtype='float32')

# Shared helpers for the model-based strategies (S5–S7)
def _affinity_vectors(uid):
    '''Category-affinity (S2) and temporal-taste (S4) scores for every article.
    Falls back to zero vectors when the user has no profile.'''
    if uid in user_cat_affinity.index:
        uvec = user_cat_affinity.loc[uid].values.astype('float32')
        s2_vec = article_cat_norm @ (uvec / (np.linalg.norm(uvec) + 1e-9))
    else:
        s2_vec = np.zeros(len(article_cat_idx))
    if uid in user_taste_norm.index:
        tvec = user_taste_norm.loc[uid].values.astype('float32')
        s4_vec = article_cat_taste_norm @ tvec
    else:
        s4_vec = np.zeros(len(taste_article_idx))
    return s2_vec, s4_vec

def _stage1_lists(uid):
    '''Stage-1 candidate lists from the S2, S3 and S4 retrievers.'''
    return s2_category(uid, N_STAGE1), s3_itemcf(uid, N_STAGE1), s4_temporal(uid, N_STAGE1)

def _meta_matrix(uid, candidates, cands_s2, cands_s3, cands_s4):
    '''Base-model scores plus retriever membership/rank features for the meta-rankers.'''
    s2_vec, s4_vec = _affinity_vectors(uid)
    X_base = _build_feature_matrix(uid, candidates, s2_vec, s4_vec)
    base_scores = lgb_model.predict(X_base)
    return _build_meta_features(uid, candidates, cands_s2, cands_s3, cands_s4, s2_vec, s4_vec, base_scores)

# Evaluation path: re-rank the candidates of one impression
def s5_score(uid, candidates):
    s2_vec, s4_vec = _affinity_vectors(uid)
    X = _build_feature_matrix(uid, candidates, s2_vec, s4_vec)
    probs = lgb_model.predict(X)
    return [candidates[i] for i in np.argsort(-probs)]

def s6_score(uid, candidates):
    if is_cold(uid):
        return s1_score(uid, candidates)
    X_meta = _meta_matrix(uid, candidates, *_stage1_lists(uid))
    scores = meta_lgb.predict(X_meta)
    return [candidates[i] for i in np.argsort(-scores)]

def s7_score(uid, candidates):
    if is_cold(uid):
        return s1_score(uid, candidates)
    X_meta = _meta_matrix(uid, candidates, *_stage1_lists(uid))
    lgb_probs = meta_lgb.predict(X_meta)
    xgb_probs = xgb_meta.predict_proba(X_meta)[:, 1]
    scores = 0.6 * lgb_probs + 0.4 * xgb_probs
    return [candidates[i] for i in np.argsort(-scores)]

def _build_feature_row(uid, aid, s2_scores_dict, s4_scores_dict):
    '''Used by s5_lgb retriever (not evaluation path).'''
    ai = art_pos.get(aid, -1)
    ti = taste_pos.get(aid, -1)
    cat_aff = float(s2_scores_dict.get(ai, 0))
    tst_aff = float(s4_scores_dict.get(ti, 0))
    return [
        float(us_click_count.get(uid, 0)),
        float(us_click_freq.get(uid, 0)),
        float(af_log_clicks.get(aid, 0)),
        float(af_log_impr.get(aid, 0)),
        float(af_bayesian_ctr.get(aid, 0)),
        float(af_article_len.get(aid, 0)),
        cat_aff,
        tst_aff,
        tfidf_affinity(uid, aid),
        float(af_article_age.get(aid, 0)),
    ]

def _build_meta_features(uid, candidates, cands_s2, cands_s3, cands_s4, s2_vec, s4_vec, lgb_base_scores):
    # Position of each article in every retriever's list (absent -> N_STAGE1)
    s2_rank = {a: r for r, a in enumerate(cands_s2)}
    s3_rank = {a: r for r, a in enumerate(cands_s3)}
    s4_rank = {a: r for r, a in enumerate(cands_s4)}
    n = len(candidates)
    ctrs = np.array([af_bayesian_ctr.get(a, 0) for a in candidates], dtype='float32')
    ctr_norm_rank = np.argsort(np.argsort(-ctrs)).astype('float32') / max(1, n - 1)
    rows = []
    for k, aid in enumerate(candidates):
        ai = art_pos.get(aid, -1)
        ti = taste_pos.get(aid, -1)
        cat_aff = float(s2_vec[ai]) if ai >= 0 else 0.0
        tst_aff = float(s4_vec[ti]) if ti >= 0 else 0.0
        in_s2 = int(aid in s2_rank)
        in_s3 = int(aid in s3_rank)
        in_s4 = int(aid in s4_rank)
        rows.append([
            float(us_click_count.get(uid, 0)),
            float(us_click_freq.get(uid, 0)),
            float(af_log_clicks.get(aid, 0)),
            float(af_log_impr.get(aid, 0)),
            float(af_article_len.get(aid, 0)),
            cat_aff, tst_aff,
            tfidf_affinity(uid, aid),
            recent_tfidf_affinity(uid, aid),
            float(af_article_age.get(aid, 0)),
            float(ctr_norm_rank[k]),
            float(n),
            float(user_subcat_clicks.get((uid, newsid_to_subcat.get(aid)), 0))
                if newsid_to_subcat.get(aid) else 0.0,
            in_s2, in_s3, in_s4,
            s2_rank.get(aid, N_STAGE1),
            s3_rank.get(aid, N_STAGE1),
            s4_rank.get(aid, N_STAGE1),
            in_s2 + in_s3 + in_s4,
            float(lgb_base_scores[k]),
        ])
    return np.array(rows, dtype='float32')

# Retrieval path: build a candidate pool, score it, return the top N
def s5_lgb(uid, N=50):
    candidates = list(dict.fromkeys(s2_category(uid, K_CAND) + s3_itemcf(uid, K_CAND) + s4_temporal(uid, K_CAND)))[:K_CAND]
    if not candidates:
        return s1_popularity(uid, N)
    s2_vec, s4_vec = _affinity_vectors(uid)
    X = _build_feature_matrix(uid, candidates, s2_vec, s4_vec)
    probs = lgb_model.predict(X)
    return [candidates[i] for i in np.argsort(-probs)][:N]

def s6_meta_lgb(uid, N=50):
    if is_cold(uid):
        return s1_popularity(uid, N)
    cands_s2, cands_s3, cands_s4 = _stage1_lists(uid)
    candidates = list(dict.fromkeys(cands_s2 + cands_s3 + cands_s4))[:N_STAGE1]
    X_meta = _meta_matrix(uid, candidates, cands_s2, cands_s3, cands_s4)
    scores = meta_lgb.predict(X_meta)
    return [candidates[i] for i in np.argsort(-scores)][:N]

def s7_ensemble(uid, N=50):
    if is_cold(uid):
        return s1_popularity(uid, N)
    cands_s2, cands_s3, cands_s4 = _stage1_lists(uid)
    candidates = list(dict.fromkeys(cands_s2 + cands_s3 + cands_s4))[:N_STAGE1]
    X_meta = _meta_matrix(uid, candidates, cands_s2, cands_s3, cands_s4)
    lgb_probs = meta_lgb.predict(X_meta)
    xgb_probs = xgb_meta.predict_proba(X_meta)[:, 1]
    scores = 0.6 * lgb_probs + 0.4 * xgb_probs
    return [candidates[i] for i in np.argsort(-scores)][:N]

# Cold-start gate: users with fewer than COLD_THRESHOLD training clicks are routed to popularity
COLD_THRESHOLD = 2

def is_cold(uid):
    if uid not in user_stats.index:
        return True
    return user_stats.loc[uid, 'click_count'] < COLD_THRESHOLD

# Unfiltered retrievers (no seen-article filtering)
def _raw_s2(uid, N):
    if uid not in user_cat_affinity.index:
        return POPULARITY_POOL[:N]
    uvec = user_cat_affinity.loc[uid].values.astype('float32')
    uvec = uvec / (np.linalg.norm(uvec) + 1e-9)
    return [article_cat_idx[j] for j in np.argsort(-(article_cat_norm @ uvec))[:N]]

def _raw_s3(uid, N):
    clicked = list(user_click_sets.get(uid, []))
    if not clicked:
        return POPULARITY_POOL[:N]
    score_acc = defaultdict(float)
    for aid in clicked[-20:]:
        for n_aid, sim in item_sim_lookup.get(aid, [])[:30]:
            score_acc[n_aid] += sim
    ranked = [a for a, _ in sorted(score_acc.items(), key=lambda x: -x[1])]
    return (ranked + POPULARITY_POOL)[:N]

def _raw_s4(uid, N):
    if uid not in user_taste_norm.index:
        return POPULARITY_POOL[:N]
    tvec = user_taste_norm.loc[uid].values.astype('float32')
    return [taste_article_idx[j] for j in np.argsort(-(article_cat_taste_norm @ tvec))[:N]]

# Top-n articles per user, computed in user chunks to bound memory.
# Relies on the globals n_users, unique_users and CHUNK_SIZE being set by the caller's cell.
def chunked_topn(A_norm, U_mat, article_idx_arr, n_top, rank_col):
    parts = []
    for start in range(0, n_users, CHUNK_SIZE):
        end = min(start + CHUNK_SIZE, n_users)
        u_batch = unique_users[start:end]
        scores = A_norm @ U_mat[start:end].T          # (articles, users in chunk)
        top_idx = np.argsort(-scores, axis=0)[:n_top]  # (n_top, users in chunk)
        chunk_len = end - start
        parts.append(pd.DataFrame({
            'userId': np.repeat(u_batch, n_top),
            'newsId': article_idx_arr[top_idx.T.ravel()],
            rank_col: np.tile(np.arange(n_top), chunk_len),
        }))
        del scores, top_idx
        gc.collect()
    return pd.concat(parts, ignore_index=True)

# Load the data (paths are relative to /content, the default Colab working directory)
TRAIN_ZIP = 'drive/MyDrive/MINDsmall_train.zip'
DEV_ZIP = 'drive/MyDrive/MINDsmall_dev.zip'

# Quick sanity-check: list contents of each archive
for label, path in [('TRAIN', TRAIN_ZIP), ('DEV', DEV_ZIP)]:
    with zipfile.ZipFile(path, 'r') as z:
        print(f'{label} ZIP contents: {z.namelist()}')

TRAIN ZIP contents: ['MINDsmall_train/', 'MINDsmall_train/behaviors.tsv', 'MINDsmall_train/news.tsv', 'MINDsmall_train/entity_embedding.vec', 'MINDsmall_train/relation_embedding.vec']

DEV ZIP contents: ['MINDsmall_dev/', 'MINDsmall_dev/behaviors.tsv', 'MINDsmall_dev/news.tsv', 'MINDsmall_dev/entity_embedding.vec', 'MINDsmall_dev/relation_embedding.vec']

# Define columns of interest
NEWS_COLS = ['newsId', 'category', 'subCategory', 'title', 'abstract', 'url', 'titleEntities', 'abstractEntities']
BEH_COLS = ['impressionId', 'userId', 'time', 'history', 'impressions']
NEWS_USECOLS = ['newsId', 'category', 'subCategory', 'title', 'abstract']

# Load the train article metadata
print('Loading train news...', end=' ', flush=True)
with zipfile.ZipFile(TRAIN_ZIP, 'r') as z:
    with z.open('MINDsmall_train/news.tsv') as f:
        news_train = pd.read_csv(f, sep='\t', header=None, names=NEWS_COLS, usecols=NEWS_USECOLS)
print(f'done ({len(news_train):,} articles)')

# Load the dev article metadata
print('Loading dev news... ', end=' ', flush=True)
with zipfile.ZipFile(DEV_ZIP, 'r') as z:
    with z.open('MINDsmall_dev/news.tsv') as f:
        news_dev = pd.read_csv(f, sep='\t', header=None, names=NEWS_COLS, usecols=NEWS_USECOLS)
print(f'done ({len(news_dev):,} articles)')

Loading train news... done (51,282 articles)

Loading dev news... done (42,416 articles)

# Merge the train and dev article tables, keeping one row per newsId
news = pd.concat([news_train, news_dev]).drop_duplicates('newsId').reset_index(drop=True)

# Fill missing abstracts and build a combined text field
news['abstract'] = news['abstract'].fillna('')
news['text'] = news['title'] + ' ' + news['abstract']

print(f'\nUnique articles : {len(news):,}')
print(f'Categories : {news["category"].nunique()}')
print(f'Sub-categories : {news["subCategory"].nunique()}')
news.head()

Unique articles : 65,238

Categories : 18

Sub-categories : 270

| | newsId | category | subCategory | title | abstract | text |
|---|---|---|---|---|---|---|
| 0 | N55528 | lifestyle | lifestyleroyals | The Brands Queen Elizabeth, Prince Charles, an... | Shop the notebooks, jackets, and more that the... | The Brands Queen Elizabeth, Prince Charles, an... |
| 1 | N19639 | health | weightloss | 50 Worst Habits For Belly Fat | These seemingly harmless habits are holding yo... | 50 Worst Habits For Belly Fat These seemingly ... |
| 2 | N61837 | news | newsworld | The Cost of Trump's Aid Freeze in the Trenches... | Lt. Ivan Molchanets peeked over a parapet of s... | The Cost of Trump's Aid Freeze in the Trenches... |
| 3 | N53526 | health | voices | I Was An NBA Wife. Here's How It Affected My M... | I felt like I was a fraud, and being an NBA wi... | I Was An NBA Wife. Here's How It Affected My M... |
| 4 | N38324 | health | medical | How to Get Rid of Skin Tags, According to a De... | They seem harmless, but there's a very good re... | How to Get Rid of Skin Tags, According to a De... |

# Expand each impression list into one row per (user, article, label) from the behavioral data
print('Parsing train behaviors...', end=' ', flush=True)
interactions_train, raw_train = parse_behaviors_from_zip(TRAIN_ZIP, 'MINDsmall_train/behaviors.tsv')
print(f'done ({len(interactions_train):,} rows)')

print('Parsing dev behaviors... ', end=' ', flush=True)
interactions_dev, raw_dev = parse_behaviors_from_zip(DEV_ZIP, 'MINDsmall_dev/behaviors.tsv')
print(f'done ({len(interactions_dev):,} rows)')

# Tag splits and combine
interactions_train['split'] = 'train'
interactions_dev['split'] = 'dev'
all_interactions = pd.concat([interactions_train, interactions_dev], ignore_index=True)

print(f'\nTotal interactions : {len(all_interactions):,}')
print(f' Train : {len(interactions_train):,}')
print(f' Dev : {len(interactions_dev):,}')

Parsing train behaviors... done (5,843,444 rows)

Parsing dev behaviors... done (2,740,998 rows)

Total interactions : 8,584,442

Train : 5,843,444

Dev : 2,740,998

all_interactions.head()

| | userId | newsId | clicked | timestamp | split |
|---|---|---|---|---|---|
| 0 | U13740 | N55689 | 1 | 1573463158 | train |
| 1 | U13740 | N35729 | 0 | 1573463158 | train |
| 2 | U91836 | N20678 | 0 | 1573582290 | train |
| 3 | U91836 | N39317 | 0 | 1573582290 | train |
| 4 | U91836 | N58114 | 0 | 1573582290 | train |

all_interactions['split'].value_counts()

| split | count |
|---|---|
| train | 5843444 |
| dev | 2740998 |

all_interactions['userId'].nunique()

94057

# Positive clicks per split (.copy() avoids pandas' SettingWithCopyWarning on the assignments below)
train_clicks = interactions_train[interactions_train['clicked'] == 1].copy()
train_clicks['newsId'] = train_clicks['newsId'].astype(str)
test_clicks = interactions_dev[interactions_dev['clicked'] == 1].copy()
test_clicks['newsId'] = test_clicks['newsId'].astype(str)

# Articles each user clicked in training (excluded from retrieval) and dev ground truth
_seen_cache = train_clicks.groupby('userId')['newsId'].apply(set).to_dict()
ground_truth = test_clicks.groupby('userId')['newsId'].apply(set).rename('true_items')

# Warm users have clicks in both splits; cold users appear only in dev
train_users = set(train_clicks['userId'].unique())
test_users = set(ground_truth.index)
warm_users = train_users & test_users
cold_users = test_users - train_users

print(f'Train positive clicks : {len(train_clicks):,}')
print(f'Dev positive clicks : {len(test_clicks):,}')
print(f'Unique train users : {len(train_users):,}')
print(f'Unique test users : {len(test_users):,}')
print(f'Warm users (train ∩ test): {len(warm_users):,}')
print(f'Cold users (test only) : {len(cold_users):,}')

Train positive clicks : 236,344

Dev positive clicks : 111,383

Unique train users : 50,000

Unique test users : 50,000

Warm users (train ∩ test): 5,943

Cold users (test only) : 44,057

# Parse raw_dev into per-impression evaluation rows.
# Each impression is one independent ranking query: candidates = articles shown
# in that session, true_items = what was clicked. Keeping sessions separate
# prevents global popularity from dominating via cross-session aggregation.
# Only warm users and impressions with at least one click are kept.
eval_rows = []
for _, r in raw_dev.iterrows():
    uid = r['userId']
    if uid not in warm_users or pd.isna(r['impressions']):
        continue
    pairs = str(r['impressions']).split()
    cands = [p.split('-')[0] for p in pairs]
    clicked = {p.split('-')[0] for p in pairs if p.endswith('-1')}
    if not clicked:
        continue
    eval_rows.append({'userId'        : uid,
                      'impressionId'  : r['impressionId'],
                      'imp_candidates': cands,
                      'true_items'    : clicked})

eval_df = pd.DataFrame(eval_rows)
eval_warm = eval_df.reset_index(drop=True)

print(f'Eval impressions : {len(eval_warm):,}')
print(f'Unique warm users : {eval_warm["userId"].nunique():,}')
print(f'Avg candidates/impression : {eval_warm["imp_candidates"].apply(len).mean():.1f}')
print(f'Avg clicks/impression : {eval_warm["true_items"].apply(len).mean():.2f}')
eval_warm.head()

Eval impressions : 8,959

Unique warm users : 5,943

Avg candidates/impression : 37.6

Avg clicks/impression : 1.52

| | userId | impressionId | imp_candidates | true_items |
|---|---|---|---|---|
| 0 | U44035 | 24 | [N37204, N48487, N59933, N512, N51776, N64077,... | {N37204, N496} |
| 1 | U88867 | 66 | [N20036, N36786, N50055, N2960, N5940, N32536,... | {N31958, N23513} |
| 2 | U80349 | 69 | [N31958, N5472, N36779, N29393, N34130, N23513... | {N29393} |
| 3 | U61801 | 70 | [N20036, N53242, N6916, N48487, N36940, N46917... | {N5940} |
| 4 | U54826 | 82 | [N29363, N44289, N7344, N6340, N4610, N40943, ... | {N7344} |

# Count clicks per article across the training split
pop_counts = train_clicks.groupby('newsId')['clicked'].count().rename('click_count')

# Impressions per article (clicked or not) in the training split
total_impressions = interactions_train.groupby('newsId')['clicked'].count().rename('impressions')

# Global click-through rate, used as the prior mean
GLOBAL_CTR = train_clicks.shape[0] / len(interactions_train)

# Smoothing constant (prior strength, in pseudo-impressions)
C = 50

# Bayesian-smoothed CTR: (clicks + C * global_rate) / (impressions + C)
pop_stats = pop_counts.to_frame().join(total_impressions).fillna(0)
pop_stats['bayesian_ctr'] = ((pop_stats['click_count'] + C * GLOBAL_CTR) / (pop_stats['impressions'] + C)).astype('float32')

# Popularity pool: articles with at least one training click, ranked by smoothed CTR.
# Dev-only articles are not appended, so popularity-based retrievers cannot reach them.
POPULARITY_POOL = pop_stats.sort_values('bayesian_ctr', ascending=False).index.tolist()

print(f'Popularity pool : {len(POPULARITY_POOL):,} training articles')
print(f'Global CTR : {GLOBAL_CTR:.4f}')
pop_stats.sort_values('impressions', ascending=False).head(6)

Popularity pool : 7,713 training articles

Global CTR : 0.0404

| newsId | click_count | impressions | bayesian_ctr |

|---|---|---|---|

| N47061 | 820 | 23037 | 0.0356 |

| N51048 | 1875 | 19242 | 0.0973 |

| N26262 | 1139 | 19106 | 0.0596 |

| N50872 | 279 | 18702 | 0.0150 |

| N55689 | 4316 | 18315 | 0.2351 |

| N38779 | 1490 | 18101 | 0.0822 |
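To see what the smoothing does in practice, here is a minimal sketch that recomputes the smoothed rate for two articles whose counts are printed in this post (N47061 with 820 clicks in 23,037 impressions, and an article with 1 click in 2 impressions). The standalone function `bayesian_ctr` and the rounded global rate 0.0404 are assumptions for illustration; the notebook computes the same quantity vectorised over `pop_stats`.

```python
def bayesian_ctr(clicks, impressions, global_ctr, c=50):
    """Smoothed CTR: (clicks + c * global_ctr) / (impressions + c)."""
    return (clicks + c * global_ctr) / (impressions + c)

GLOBAL_CTR = 0.0404  # rounded value from the printed output (assumption)

# High-traffic article: smoothing barely moves the raw rate
heavy = bayesian_ctr(820, 23037, GLOBAL_CTR)
raw_heavy = 820 / 23037

# Low-traffic article: 1 click in 2 impressions is pulled towards the global rate
light = bayesian_ctr(1, 2, GLOBAL_CTR)
raw_light = 1 / 2

print(f"heavy: raw={raw_heavy:.4f} smoothed={heavy:.4f}")
print(f"light: raw={raw_light:.4f} smoothed={light:.4f}")
```

With C = 50, an article's own click history only starts to outweigh the global prior once it has more than about 50 impressions, which is why the low-traffic article ends up close to the global rate instead of at 50%.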

2. Exploratory data analysis

📖 Understanding the data before modelling

This section answers eight key questions before building any model:

- How are clicks distributed across articles? (power law expected)

- How active are individual users?

- Which categories dominate the corpus?

- How does CTR vary by category?

- What is the article title-length distribution?

- How do click volumes trend over time?

- What fraction of users have very thin histories (cold-start risk)?

- How much overlap exists between train and dev article pools?

# Compile high-level stats

n_users = all_interactions['userId'].nunique()

n_articles= all_interactions['newsId'].nunique()

n_impr = len(all_interactions)

n_clicks = all_interactions['clicked'].sum()

overall_ctr = n_clicks / n_impr

print(f'{"Users":<30} {n_users:>10,}')

print(f'{"Articles":<30} {n_articles:>10,}')

print(f'{"Total impressions":<30} {n_impr:>10,}')

print(f'{"Total clicks":<30} {n_clicks:>10,}')

print(f'{"Overall CTR":<30} {overall_ctr:>10.4f}')

print(f'{"Sparsity":<30} {1 - n_clicks/(n_users*n_articles):>10.6f}')

Users 94,057

Articles 22,771

Total impressions 8,584,442

Total clicks 347,727

Overall CTR 0.0405

Sparsity 0.999838

Interpreting the headline numbers:

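- ~3–5% CTR is typical for editorial news feeds. It also matches the impression structure: with about 37.6 candidates and 1.52 clicks per impression in the eval sample above, roughly 1.52 / 37.6 ≈ 4% of shown articles are clicked. Position bias and user selectivity keep the rate this low.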

- Matrix sparsity > 99.9% means collaborative filtering on raw co-clicks alone is brittle — content and temporal signals are essential complements.

- The printed counts show about four times as many users as articles (94,057 users vs 22,771 articles with at least one impression), so each article is shown to many users. That makes this small subset atypically dense for a real-world recommender.
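The headline ratios can be checked by hand. The short sketch below hard-codes the four counts from the printed output (copied values, not recomputed from the data) and derives the CTR, the sparsity, and the average clicks per user.

```python
# Headline numbers copied from the printed output above
n_users, n_articles = 94_057, 22_771
n_impr, n_clicks = 8_584_442, 347_727

overall_ctr = n_clicks / n_impr
# Fraction of (user, article) cells with no click, using the notebook's formula
sparsity = 1 - n_clicks / (n_users * n_articles)
# Average number of clicks recorded per user
clicks_per_user = n_clicks / n_users

print(f"CTR={overall_ctr:.4f} sparsity={sparsity:.6f} clicks/user={clicks_per_user:.2f}")
```

An average of fewer than four clicks per user is the practical meaning of the sparsity figure: most users give a collaborative filter very little to work with.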

# Compile the clicks distribution and user activity

article_clicks = train_clicks.groupby('newsId')['clicked'].count()

user_clicks = train_clicks.groupby('userId')['clicked'].count()

fig, axes = plt.subplots(1, 3, figsize=(21, 5))

fig.suptitle('MIND - Small: Click distributions', fontsize = 14, fontweight = 'bold')

# (a) Article click histogram (log scale)

ax = axes[0]

ax.hist(np.log1p(article_clicks.values), bins = 60, color = 'steelblue', edgecolor = 'white', lw = 0.4)

ax.set_xlabel('log(1 + clicks per article)')

ax.set_ylabel('Number of articles')

ax.set_title('(a) Article popularity (log scale)')

top5 = article_clicks.nlargest(5)

# Mark the five most-clicked articles with vertical lines

for nid, cnt in top5.items():

    ax.axvline(np.log1p(cnt), color='red', lw=0.8, alpha=0.5)

# (b) User activity histogram

ax = axes[1]

ax.hist(np.log1p(user_clicks.values), bins=60, color='darkorange', edgecolor='white', lw=0.4)

ax.set_xlabel('log(1 + clicks per user)')

ax.set_ylabel('Number of users')

ax.set_title('(b) User activity (log scale)')

# (c) Click count CDF for articles

ax = axes[2]

sorted_clicks = np.sort(article_clicks.values)

cdf = np.arange(1, len(sorted_clicks)+1) / len(sorted_clicks)

ax.plot(np.log1p(sorted_clicks), cdf, color='purple', lw=2)

ax.axhline(0.8, color='grey', ls='--', lw=1)

ax.set_xlabel('log(1 + clicks)')

ax.set_ylabel('CDF')

ax.set_title('(c) Article popularity CDF')

# Find where 80% of articles have fewer than X clicks

p80_idx = np.searchsorted(cdf, 0.8)

ax.annotate(f'80% articles ≤ {sorted_clicks[p80_idx]} clicks',

xy=(np.log1p(sorted_clicks[p80_idx]), 0.8),

xytext=(np.log1p(sorted_clicks[p80_idx])+0.5, 0.65),

arrowprops=dict(arrowstyle='->', color='black'), fontsize=9)

plt.tight_layout()

plt.savefig('eda_click_distribution.png', dpi=150, bbox_inches='tight')

plt.show()

📊 What to look for in these plots:

| Plot | Expected shape | Why it matters |

|---|---|---|

| Article click histogram | Long-tailed / power law | A few viral articles capture most clicks; popularity bias is strong |

| User activity histogram | Right-skewed | Most users click < 10 articles; a handful click 100+. Heavy-tail users dominate training signal |

| Sparsity heatmap | Nearly all-zero | Collaborative filtering must handle extreme sparsity, which motivates CF via item similarity rather than direct user–user CF |

A power-law click distribution is the single most important structural property of the dataset. It means:

- A popularity baseline (S1) is a surprisingly strong competitor.

- Personalisation gains are concentrated on heavy users who have rich histories.

- Cold-start users (zero history) must fall back to popularity.
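One way to quantify how concentrated the clicks are is to measure the share captured by the top fraction of articles. The helper below is a minimal sketch; `top_share` is a hypothetical name and the toy counts are made up for illustration.

```python
def top_share(click_counts, frac):
    """Share of all clicks captured by the top `frac` of articles."""
    ranked = sorted(click_counts, reverse=True)
    k = max(1, int(len(ranked) * frac))
    return sum(ranked[:k]) / sum(ranked)

# Toy click counts for ten articles (made up for illustration)
toy_counts = [100, 50, 10, 10, 5, 5, 5, 5, 5, 5]
print(top_share(toy_counts, 0.2))
```

In the notebook the same helper could be called as `top_share(article_clicks.values, 0.01)` to see what share of training clicks the top 1% of articles account for.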

# Analysis by category

news_lookup = news.set_index('newsId')[['category','subCategory','title']]

train_with_cat = train_clicks.join(news_lookup, on = 'newsId')

all_with_cat = all_interactions.join(news_lookup, on = 'newsId')

# Compile the stats by cat

cat_stats = (all_with_cat.groupby('category').agg(impressions = ('clicked','count'), clicks = ('clicked','sum')).assign(ctr = lambda d: d['clicks']/d['impressions']).sort_values('impressions', ascending = False))

fig, axes = plt.subplots(1, 2, figsize = (20, 6))

# (a) Volume per category

ax = axes[0]

palette = sns.color_palette('husl', len(cat_stats))

bars = ax.barh(cat_stats.index, cat_stats['impressions'], color=palette)

ax.set_xlabel('Total impressions')

ax.set_title('(a) Impressions per category')

ax.invert_yaxis()

for bar, (_, row) in zip(bars, cat_stats.iterrows()):

    ax.text(bar.get_width()*1.01, bar.get_y()+bar.get_height()/2,

            f'{row["ctr"]:.2%} CTR', va='center', fontsize=8)

# (b) CTR per category (sorted)

ax = axes[1]

cat_ctr = cat_stats.sort_values('ctr', ascending=False)

bars2 = ax.barh(cat_ctr.index, cat_ctr['ctr']*100, color=palette)

ax.set_xlabel('CTR (%)')

ax.set_title('(b) Click-through rate by category')

ax.invert_yaxis()

ax.axvline(GLOBAL_CTR*100, color = 'red', ls = '--', lw = 1.5, label = f'Global CTR {GLOBAL_CTR:.2%}')

ax.legend()

plt.tight_layout()

plt.savefig('eda_categories.png', dpi=150, bbox_inches='tight')

plt.show()

print(cat_stats.to_string())

impressions clicks ctr

category

news 2232125 95172 0.0426

lifestyle 1016267 45431 0.0447

sports 942187 54220 0.0575

finance 789133 24610 0.0312

foodanddrink 572554 17579 0.0307

entertainment 464494 13362 0.0288

travel 446318 10858 0.0243

health 441673 15331 0.0347

autos 382055 10282 0.0269

tv 374229 20176 0.0539

music 358613 19776 0.0551

movies 243102 7604 0.0313

video 181367 7076 0.0390

weather 140130 6246 0.0446

kids 166 3 0.0181

northamerica 29 1 0.0345

📊 Category plots — what they tell you:

The left panel (impression counts) reveals the supply of content per category. The right panel (CTR per category) reveals demand quality — which categories users actually engage with vs. merely see. Gaps between supply and CTR (e.g. high-impression, low-CTR categories) point to editorial over-representation and motivate category-affinity personalisation (S2).

all_interactions.head()

| | userId | newsId | clicked | timestamp | split |

|---|---|---|---|---|---|

| 0 | U13740 | N55689 | 1 | 1573463158 | train |

| 1 | U13740 | N35729 | 0 | 1573463158 | train |

| 2 | U91836 | N20678 | 0 | 1573582290 | train |

| 3 | U91836 | N39317 | 0 | 1573582290 | train |

| 4 | U91836 | N58114 | 0 | 1573582290 | train |

all_interactions['split'].value_counts()

| split | count |

|---|---|

| train | 5843444 |

| dev | 2740998 |

# Analysis of cold start data

hist_train = parse_history_length(raw_train)

hist_dev = parse_history_length(raw_dev)

# Visualize the cold start ratios

fig, axes = plt.subplots(1, 2, figsize=(20, 5))

ax = axes[0]

ax.hist(hist_train.clip(upper=100), bins=50, color='teal', edgecolor='white', lw=0.4)

ax.set_xlabel('History length (clicks, capped at 100)')

ax.set_ylabel('Users')

ax.set_title('Train: history length distribution')

cold_frac = (hist_train == 0).mean()

ax.axvline(0, color='red', lw=1.5, label=f'Cold ({cold_frac:.1%})')

ax.legend()

ax = axes[1]

thresholds = [0, 1, 3, 5, 10, 20]

fracs = [(hist_train <= t).mean() for t in thresholds]

ax.plot(thresholds, [f*100 for f in fracs], 'o-', color='darkorange', lw=2)

ax.set_xlabel('History length threshold')

ax.set_ylabel('% users at or below threshold')

ax.set_title('Cumulative cold-start risk')

ax.axhline(50, color='grey', ls='--', lw=1, label='50%')

ax.legend()

plt.tight_layout()

plt.savefig('eda_coldstart.png', dpi=150, bbox_inches='tight')

plt.show()

print(f'Train users with zero history : {(hist_train==0).sum():,} ({cold_frac:.2%})')

print(f'Train users with ≤5 history : {(hist_train<=5).sum():,} ({(hist_train<=5).mean():.2%})')

Train users with zero history : 892 (1.78%)

Train users with ≤5 history : 12,979 (25.96%)

❄️ Cold-start implications:

The history-length distribution directly sets your cold-start strategy. Users with zero history cannot benefit from personalised retrieval (no clicks to aggregate into a taste vector or to look up similar articles from). The pipeline handles this with a binary gate in §7:

is_cold(user) → True ➜ return top-N global popularity articles

is_cold(user) → False ➜ run full personalised pipeline (S2 + S3 + S4)

Even “warm” users with only 1–2 clicks have very noisy taste signals. The Bayesian smoothing in bayesian_ctr and the normalised affinity vectors are designed to degrade gracefully in this sparse regime.
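The gate itself is simple to express in code. The sketch below is an illustration under stated assumptions, not the notebook's implementation: the signatures of `is_cold` and `recommend`, the `personalised_fn` callback, and the toy IDs are all hypothetical.

```python
def is_cold(user_id, user_click_sets):
    """A user is cold if they have no recorded training clicks."""
    return len(user_click_sets.get(user_id, ())) == 0

def recommend(user_id, user_click_sets, popularity_pool, personalised_fn, n=10):
    """Route cold users to popularity, warm users to the personalised path."""
    if is_cold(user_id, user_click_sets):
        return popularity_pool[:n]
    return personalised_fn(user_id)[:n]

# Toy inputs (hypothetical IDs)
clicks = {"U1": {"N3"}, "U2": set()}
pool = ["N1", "N2", "N3"]          # already sorted by smoothed CTR
personalised = lambda uid: ["N9", "N8", "N7"]

print(recommend("U2", clicks, pool, personalised, n=2))  # cold path
print(recommend("U1", clicks, pool, personalised, n=2))  # warm path
```

Users that are missing from the click dictionary altogether are treated the same way as users with an empty history, so unseen IDs also fall back to popularity.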

3. Feature engineering

We construct four reusable feature tables:

- user_stats: per-user click count, active days, click frequency, favourite category

- article_feat: per-article click count (log), Bayesian CTR, category one-hot, TF-IDF centroid

- user_cat_affinity: (user × category) matrix of normalised click preferences

- imp_train_df: impression-level (userId, newsId, label) frame with query groups for LambdaRank (Fix 1 & 2)

The TF-IDF vectoriser is fit on training article titles+abstracts only and transforms both train and dev articles, preventing feature leakage from future text.

Two further features are derived from these tables: tfidf_sim (cosine similarity between the user click-history TF-IDF centroid and each candidate article) and article_age_days (log-scaled age since first impression, capturing news recency).

🛠️ Feature Engineering Map

Four complementary feature tables are constructed — each captures a different signal about users and articles:

FEATURE ENGINEERING

═══════════════════

┌──────────────────────────────────────────────────────────────────────┐

│ SOURCE: train_clicks (positive interactions only) │

└───────────┬──────────────────────┬───────────────────────────────────┘

│ │

▼ ▼

┌────────────────────┐ ┌──────────────────────────────────────────┐

│ USER SIDE │ │ ARTICLE SIDE │

│ │ │ │

│ user_stats │ │ article_feat │

│ ───────────── │ │ ───────────── │

│ click_count │ │ log_clicks (log(1+n)) │

│ active_days │ │ log_impr │

│ click_freq │ │ bayesian_ctr ← smoothed CTR │

│ fav_category │ │ article_len ← title+abstract chars │

│ │ │ article_age_days │

│ user_cat_affinity │ │ category one-hot (16 categories) │

│ ───────────── │ │ │

│ 16-dim L2-norm │ │ TF-IDF centroid (46,525-dim vocab) │

│ click distribution│ │ │

└────────────────────┘ └──────────────────────────────────────────┘

│ │

└──────────┬───────────┘

│ cross-signals

▼

┌───────────────────────┐

│ INTERACTION FEATURES │

│ │

│ cat_affinity ← user_cat · article_cat (dot product) │

│ taste_affinity ← temporal_taste · article_cat │

│ tfidf_sim ← user_centroid · article_tfidf │

│ recent_tfidf_sim ← recent-click centroid similarity │

└───────────────────────┘

Design principle: Each feature is normalized to a comparable scale before being passed to LightGBM. Tree models are invariant to monotonic transforms, but consistent scaling improves interpretability of feature importances.

# Compile user features

user_stats = train_clicks.groupby('userId').agg(click_count = ('newsId', 'count'),

first_ts = ('timestamp', 'min'),

last_ts = ('timestamp', 'max'),)

user_stats['active_days'] = ((user_stats['last_ts'] - user_stats['first_ts']) / 86400).clip(lower = 1).astype('float32')

user_stats['click_freq'] = (user_stats['click_count'] / user_stats['active_days']).astype('float32')

fav_cat = (train_with_cat.groupby(['userId','category'])['clicked'].count().reset_index().sort_values('clicked', ascending = False).drop_duplicates('userId').set_index('userId')['category'])

user_stats['fav_category'] = fav_cat

user_stats = user_stats.fillna({'fav_category': 'unknown'})

print(f'user_stats: {user_stats.shape}')

# Compile article features

article_feat = (pop_stats[['click_count','impressions','bayesian_ctr']].rename(columns={'click_count':'global_clicks','impressions':'global_impressions'}))

article_feat['log_clicks'] = np.log1p(article_feat['global_clicks']).astype('float32')

article_feat['log_impr'] = np.log1p(article_feat['global_impressions']).astype('float32')

article_feat = article_feat.join(news.set_index('newsId')[['category','subCategory','text']], how='left')

article_feat['article_len'] = article_feat['text'].fillna('').apply(len).astype('float32')

# Article recency: use the earliest training impression as a proxy for publish time (stored as log1p of days)

EVAL_TS = int(interactions_train['timestamp'].max())

article_first_seen = interactions_train.groupby('newsId')['timestamp'].min()

article_feat['article_age_days'] = (np.log1p((EVAL_TS - article_first_seen) / 86_400).clip(lower = 0).astype('float32').reindex(article_feat.index).fillna(article_feat['log_impr']))

print(f'article_feat: {article_feat.shape}')

# Sub-category click counts per user — finer-grained than category affinity

user_subcat_clicks = (train_with_cat.groupby(['userId', 'subCategory'])['clicked'].count().to_dict())

print(f'user_subcat_clicks entries: {len(user_subcat_clicks):,}')

user_stats: (50000, 6)

article_feat: (7713, 10)

user_subcat_clicks entries: 188,670

train_cat_vocab = pd.get_dummies(article_feat['category'].dropna(), prefix = 'cat').columns

all_news_cat = news.set_index('newsId')['category'].dropna()

article_cat = (pd.get_dummies(all_news_cat, prefix = 'cat').astype('float32').reindex(columns = train_cat_vocab, fill_value = 0))

cat_cols = article_cat.columns.tolist()

print(f'Category columns ({len(cat_cols)}): {cat_cols}')

print(f'article_cat covers {len(article_cat):,} articles '

f'(train: {len(article_feat):,} dev-only: {len(article_cat)-len(article_feat):,})')

Category columns (16): ['cat_autos', 'cat_entertainment', 'cat_finance', 'cat_foodanddrink', 'cat_health', 'cat_kids', 'cat_lifestyle', 'cat_movies', 'cat_music', 'cat_news', 'cat_northamerica', 'cat_sports', 'cat_travel', 'cat_tv', 'cat_video', 'cat_weather']

article_cat covers 65,238 articles (train: 7,713 dev-only: 57,525)

user_stats.head()

| userId | click_count | first_ts | last_ts | active_days | click_freq | fav_category |

|---|---|---|---|---|---|---|

| U100 | 1 | 1573544052 | 1573544052 | 1.0000 | 1.0000 | news |

| U1000 | 4 | 1573686978 | 1573771041 | 1.0000 | 4.0000 | news |

| U10001 | 3 | 1573450221 | 1573710414 | 3.0115 | 0.9962 | autos |

| U10003 | 3 | 1573455962 | 1573481638 | 1.0000 | 3.0000 | sports |

| U10008 | 1 | 1573308813 | 1573308813 | 1.0000 | 1.0000 | weather |

article_feat.head()

| newsId | global_clicks | global_impressions | bayesian_ctr | log_clicks | log_impr | category | subCategory | text | article_len | article_age_days |

|---|---|---|---|---|---|---|---|---|---|---|

| N10032 | 1 | 190 | 0.0126 | 0.6931 | 5.2523 | foodanddrink | recipes | 14 butternut squash recipes for delightfully c... | 172.0000 | 0.2827 |

| N10051 | 1 | 370 | 0.0072 | 0.6931 | 5.9162 | autos | autosenthusiasts | VW ID.3 Electric Motor Is So Compact That Fits... | 160.0000 | 0.2640 |

| N10056 | 6 | 38 | 0.0912 | 1.9459 | 3.6636 | sports | football_nfl | Russell Wilson, Richard Sherman swap jerseys d... | 176.0000 | 1.4091 |

| N10057 | 2 | 41 | 0.0442 | 1.0986 | 3.7377 | weather | weathertopstories | Venice swamped by highest tide in more than 50... | 243.0000 | 1.0215 |

| N1006 | 1 | 2 | 0.0581 | 0.6931 | 1.0986 | sports | football_nfl | Jaguars vs. Colts: A.J. Cann, Will Richardson ... | 487.0000 | 0.2862 |

article_cat.head()

| newsId | cat_autos | cat_entertainment | cat_finance | cat_foodanddrink | cat_health | cat_kids | cat_lifestyle | cat_movies | cat_music | cat_news | cat_northamerica | cat_sports | cat_travel | cat_tv | cat_video | cat_weather |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| N55528 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| N19639 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| N61837 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| N53526 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| N38324 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

# Perform TF-IDF

train_news_ids = set(train_clicks['newsId'].unique())

news_indexed = news.set_index('newsId')

train_texts = news_indexed.loc[news_indexed.index.isin(train_news_ids), 'text'].fillna('')

print('Fitting TF-IDF on train articles...', end = ' ', flush = True)

tfidf = TfidfVectorizer(max_features = 50000, sublinear_tf = True, min_df = 2, ngram_range = (1,2))

tfidf.fit(train_texts)

print('done.')

# Transform all articles (train + dev)

all_texts = news_indexed['text'].fillna('')

tfidf_mat = tfidf.transform(all_texts) # sparse (n_articles, vocab_size); vocab_size is capped at max_features = 50,000

tfidf_idx = {nid: i for i, nid in enumerate(news_indexed.index)}

print(f'TF-IDF matrix: {tfidf_mat.shape} nnz={tfidf_mat.nnz:,}')

Fitting TF-IDF on train articles... done.

TF-IDF matrix: (65238, 46525) nnz=3,181,345

%%time

# Build per-user TF-IDF centroids (click-history text profile)

# The centroid is the mean of the TF-IDF vectors of all articles a user has clicked,

# normalised to unit L2 so dot products equal cosine similarity at scoring time.

print('Building user TF-IDF centroids...', end = ' ', flush = True)

user_tfidf_centroids = {}

for uid, group in train_clicks.groupby('userId'):

    idxs = [tfidf_idx[nid] for nid in group['newsId'] if nid in tfidf_idx]

    if not idxs:

        continue

    centroid = np.asarray(tfidf_mat[idxs].mean(axis=0)).ravel() # (vocab_size,)

    norm = np.linalg.norm(centroid)

    if norm > 1e-9:

        user_tfidf_centroids[uid] = centroid / norm

print(f'done ({len(user_tfidf_centroids):,} users have centroids)')

# Centroid of only the last 20 clicks — captures recent vs lifetime interest

print('Building recent TF-IDF centroids (last 20 clicks)...', end = ' ', flush = True)

user_recent_tfidf_centroids = {}

for uid, group in train_clicks.sort_values('timestamp').groupby('userId'):

    recent_nids = group['newsId'].tolist()[-20:]

    idxs = [tfidf_idx[nid] for nid in recent_nids if nid in tfidf_idx]

    if not idxs:

        continue

    centroid = np.asarray(tfidf_mat[idxs].mean(axis=0)).ravel()

    norm = np.linalg.norm(centroid)

    if norm > 1e-9:

        user_recent_tfidf_centroids[uid] = centroid / norm

print(f'done ({len(user_recent_tfidf_centroids):,} users)')

Building user TF-IDF centroids... done (50,000 users have centroids)

Building recent TF-IDF centroids (last 20 clicks)... done (50,000 users)

CPU times: user 17min 48s, sys: 1min 15s, total: 19min 3s

Wall time: 2min 26s
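The centroid logic is easier to follow on a toy example. The sketch below uses plain dictionaries as sparse vectors instead of the scipy matrix used in the notebook; the helper names (`l2_normalise`, `centroid`, `dot`) and the toy term weights are assumptions for illustration only.

```python
import math

def l2_normalise(vec):
    """Scale a sparse vector (dict of term -> weight) to unit L2 norm."""
    norm = math.sqrt(sum(v * v for v in vec.values()))
    return {k: v / norm for k, v in vec.items()} if norm > 1e-9 else {}

def centroid(vectors):
    """Mean of sparse vectors, then unit L2 norm."""
    total = {}
    for vec in vectors:
        for term, w in vec.items():
            total[term] = total.get(term, 0.0) + w
    mean = {t: w / len(vectors) for t, w in total.items()}
    return l2_normalise(mean)

def dot(a, b):
    """Dot product of two sparse vectors."""
    return sum(w * b.get(t, 0.0) for t, w in a.items())

# Toy unit-norm rows standing in for TF-IDF vectors of two clicked articles
clicked = [l2_normalise({"nfl": 1.0, "jersey": 1.0}),
           l2_normalise({"nfl": 1.0, "colts": 1.0})]
user = centroid(clicked)

cand_sport = l2_normalise({"nfl": 1.0, "draft": 1.0})
cand_food = l2_normalise({"squash": 1.0, "recipes": 1.0})
print(dot(user, cand_sport), dot(user, cand_food))
```

Because both the centroid and the candidate rows have unit norm, the dot product is the cosine similarity: the sports candidate shares a term with the user's history and scores above zero, while the food candidate shares none and scores exactly zero.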

# Create an affinity matrix for user-category: compute normalised click counts per category

user_cat = (train_with_cat.groupby(['userId','category'])['clicked'].count().unstack(fill_value = 0).astype('float32'))

# Normalise rows to unit L2 norm

norms = np.linalg.norm(user_cat.values, axis = 1, keepdims = True).clip(min = 1e-9)

user_cat_affinity = pd.DataFrame(user_cat.values / norms, index = user_cat.index, columns = user_cat.columns)

# Align article-category matrix columns with user-category matrix

article_cat_aligned = article_cat.reindex(columns = user_cat.columns, fill_value = 0)

article_cat_norm = normalize(article_cat_aligned.values.astype('float32'), norm = 'l2', axis = 1)

article_cat_idx = article_cat_aligned.index.tolist()

print(f'user_cat_affinity : {user_cat_affinity.shape}')

print(f'article_cat_norm : {article_cat_norm.shape}')

user_cat_affinity.head(3)

user_cat_affinity : (50000, 16)

article_cat_norm : (65238, 16)

| userId | autos | entertainment | finance | foodanddrink | health | kids | lifestyle | movies | music | news | northamerica | sports | travel | tv | video | weather |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| U100 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| U1000 | 0.0000 | 0.0000 | 0.0000 | 0.4082 | 0.0000 | 0.0000 | 0.0000 | 0.4082 | 0.0000 | 0.8165 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| U10001 | 0.5774 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.5774 | 0.5774 | 0.0000 | 0.0000 | 0.0000 |

Good to know:

📐 Why L2-normalise the affinity vectors?

After normalising both user_cat_affinity (user rows) and article_cat (article rows) to unit L2 norm, their dot product equals cosine similarity. Because click counts and one-hot indicators are non-negative, the value lies in [0, 1] and measures directional agreement, independent of how many clicks a user has. This prevents heavy users (who click 200+ articles) from dominating the ranking signal purely because their affinity magnitudes are large.

The same logic applies to TF-IDF centroids: unit-norm centroids mean that a user with 3 clicks and a user with 300 clicks are compared on the same scale when scoring article relevance.
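A small numeric check makes the scale-invariance concrete. The counts for the light user below (1, 1, 2) are inferred from U1000's printed affinity row and click_count of 4, so treat them as an assumption; the heavy user is made up with the same proportions at 100 times the volume.

```python
import math

def unit(vec):
    """Scale a dense vector to unit L2 norm."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec]

def affinity(user_counts, article_onehot):
    """Cosine similarity between a user's category counts and an article's category."""
    u, a = unit(user_counts), unit(article_onehot)
    return sum(x * y for x, y in zip(u, a))

# Category order (toy): foodanddrink, movies, news
light_user = [1, 1, 2]        # 4 clicks (assumed from U1000's printed row)
heavy_user = [100, 100, 200]  # same tastes, 100x the volume
news_article = [0, 0, 1]      # one-hot category

print([round(x, 4) for x in unit(light_user)])
print(affinity(light_user, news_article), affinity(heavy_user, news_article))
```

The normalised light-user vector reproduces the 0.4082 / 0.4082 / 0.8165 pattern in the printed table, and both users receive the same affinity for a news article despite the difference in click volume.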

4. Article-based collaborative filtering

📖 Batched sparse co-click similarity

We build an article × user click matrix from positive training interactions, normalise rows (articles) to unit L2 norm, and compute cosine similarities between articles in batches to avoid OOM. The result is an item_sim_lookup dict mapping newsId → [(newsId, similarity), …] for the top-50 nearest neighbours.

This creates the S3 retriever: for a given user, find all articles they clicked, look up each article’s nearest neighbours, aggregate scores (weighted by similarity × recency), and surface the top-N unseen articles.

%%time

# Build article x user_click matrices

article_ids_cf = train_clicks['newsId'].unique()

user_ids_cf = train_clicks['userId'].unique()

a_idx = {a: i for i, a in enumerate(article_ids_cf)}

u_idx = {u: i for i, u in enumerate(user_ids_cf)}

idx_a = {i: a for a, i in a_idx.items()}

R_cf = csr_matrix((np.ones(len(train_clicks), dtype='float32'), (train_clicks['newsId'].map(a_idx).values, train_clicks['userId'].map(u_idx).values)), shape = (len(article_ids_cf), len(user_ids_cf)))

R_norm_cf = normalize(R_cf, norm = 'l2', axis = 1)

print(f'Click matrix: {R_cf.shape} nnz={R_cf.nnz:,}')

print(f'Memory: R={R_cf.data.nbytes/1e6:.0f} MB R_norm={R_norm_cf.data.nbytes/1e6:.0f} MB')

Click matrix: (7713, 50000) nnz=234,468

Memory: R=1 MB R_norm=1 MB

CPU times: user 149 ms, sys: 80 µs, total: 149 ms

Wall time: 148 ms

# Perform a batched knn to get similar articles

item_sim_lookup = {}

n_articles_cf = R_norm_cf.shape[0]

t0 = time.time()

for start in range(0, n_articles_cf, 1000):

    batch = R_norm_cf[start : start + 1000]

    sims = (batch @ R_norm_cf.T).toarray()

    for local_i, sim_row in enumerate(sims):

        global_i = start + local_i

        sim_row[global_i] = 0.0

        top_k = np.argpartition(sim_row, -50)[-50:]

        top_k = top_k[np.argsort(sim_row[top_k])[::-1]]

        aid = idx_a[global_i]

        item_sim_lookup[aid] = [(idx_a[j], float(sim_row[j])) for j in top_k]

    if start % 1000 == 0:

        print(f' {start:>6}/{n_articles_cf} {time.time()-t0:.0f}s')

del R_cf, R_norm_cf; gc.collect()

print(f'\nItem-sim lookup: {len(item_sim_lookup):,} articles in {time.time()-t0:.0f}s')

0/7713 0s

1000/7713 1s

2000/7713 1s

3000/7713 1s

4000/7713 1s

5000/7713 2s

6000/7713 2s

7000/7713 2s

Item-sim lookup: 7,713 articles in 2s

🔗 How item-based CF works here:

The similarity lookup captures the intuition: “users who clicked article A also tended to click article B.”

Article × User click matrix R (shape: 7,713 clicked training articles × 50,000 users, per the output above)

R[i, u] = 1 if user u clicked article i, else 0

Normalise rows to unit L2: R_norm = R / ||R||₂ (row-wise)

Similarity matrix: S = R_norm · R_normᵀ → cosine similarity between articles

item_sim_lookup[A] = top-50 articles by S[A, :]

Why batch the computation? A dense float32 similarity matrix over the full ~65K-article catalogue would require ~17 GB. For the 7,713 articles used here the full matrix is only about 240 MB, and processing in batches of 1,000 articles materialises one slice of roughly 31 MB at a time, so the same code scales to larger catalogues without exhausting memory.

Retriever score for a user: sum the similarity scores of all articles in the user’s click history toward each candidate article — the more co-clicked history overlaps with the candidate, the higher its S3 score.
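The two steps (build the neighbour lookup, then score candidates from a user's history) can be sketched on a toy click log. This version is a plain-Python illustration, not the batched sparse-matrix code used in the notebook: it loops over all article pairs, assumes each user-article pair counts once, and the function names `build_item_sim` and `s3_scores` are hypothetical.

```python
import math
from collections import defaultdict

def build_item_sim(user_clicks, top_k=50):
    """Cosine similarity between articles from binary co-clicks."""
    article_users = defaultdict(set)
    for uid, nids in user_clicks.items():
        for nid in nids:
            article_users[nid].add(uid)
    lookup = {}
    for a, users_a in article_users.items():
        sims = []
        for b, users_b in article_users.items():
            if a == b:
                continue
            overlap = len(users_a & users_b)
            if overlap:
                # For binary vectors, cosine = |A ∩ B| / sqrt(|A| * |B|)
                sims.append((b, overlap / math.sqrt(len(users_a) * len(users_b))))
        lookup[a] = sorted(sims, key=lambda t: -t[1])[:top_k]
    return lookup

def s3_scores(history, lookup):
    """Sum neighbour similarities over a user's history, skipping seen items."""
    scores = defaultdict(float)
    for nid in history:
        for nbr, sim in lookup.get(nid, []):
            if nbr not in history:
                scores[nbr] += sim
    return sorted(scores.items(), key=lambda t: -t[1])

# Toy click log: userId -> set of clicked articles
toy = {"U1": {"A", "B"}, "U2": {"A", "B", "C"}, "U3": {"B", "C"}, "U4": {"A"}}
lookup = build_item_sim(toy)
print(s3_scores({"A"}, lookup))
```

For a user who has only clicked A, article B ranks above C because two of A's three readers also clicked B, while only one clicked C.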

5. Temporal sequence modelling

📖 Recency-weighted taste vectors

Recent clicks should dominate a user’s preference profile — an article clicked yesterday matters more than one from three weeks ago. We compute per-user category taste vectors using exponential decay (half-life = 7 days, matching news freshness intuition). The resulting matrix enables fast batch dot-products at inference time.

# Compute recency weighted taste vectors - one week

DECAY_HALF_LIFE = 7

DECAY_K = np.log(2) / DECAY_HALF_LIFE

now_ts = int(train_clicks['timestamp'].max())

clicks_ts = train_clicks[['userId','newsId','timestamp']].copy()

clicks_ts['weight'] = np.exp(-DECAY_K * (now_ts - clicks_ts['timestamp'].values.astype('float64')) / 86400).astype('float32')

# Join category info for each click

clicks_ts = clicks_ts.join(news.set_index('newsId')[['category']], on='newsId')

clicks_ts = clicks_ts.dropna(subset=['category'])

# Aggregate: user × category, weighted by recency

user_taste = (clicks_ts.groupby(['userId','category'])['weight'].sum().unstack(fill_value=0).astype('float32'))

# Normalise to unit L2 so dot-products equal cosine similarity

taste_norms = np.linalg.norm(user_taste.values, axis=1, keepdims=True).clip(min=1e-9)

user_taste_norm = pd.DataFrame(user_taste.values / taste_norms, index = user_taste.index, columns = user_taste.columns)

# Align with article-category matrix

article_cat_taste = article_cat.reindex(columns=user_taste.columns, fill_value=0)

article_cat_taste_norm = normalize(article_cat_taste.values.astype('float32'), norm='l2', axis=1)

taste_article_idx = article_cat_taste.index.tolist()

print(f'user_taste_norm : {user_taste_norm.shape}')

print(f'article_cat_taste : {article_cat_taste_norm.shape}')

user_taste_norm.head(3)

user_taste_norm : (50000, 16)

article_cat_taste : (65238, 16)

| userId | autos | entertainment | finance | foodanddrink | health | kids | lifestyle | movies | music | news | northamerica | sports | travel | tv | video | weather |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| U100 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 1.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| U1000 | 0.0000 | 0.0000 | 0.0000 | 0.4027 | 0.0000 | 0.0000 | 0.0000 | 0.4402 | 0.0000 | 0.8025 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |

| U10001 | 0.6261 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.4647 | 0.6261 | 0.0000 | 0.0000 | 0.0000 |

⏱️ Exponential Decay — The Intuition

The recency weight for a click is:

\[w(t) = e^{-k \cdot \Delta t_{days}}, \quad k = \frac{\ln 2}{\text{half-life}}\]

With half-life = 7 days, a click from:

| Days ago | Weight |

|---|---|

| 0 (today) | 1.000 |

| 3.5 days | ~0.707 |

| 7 days | 0.500 ← half-life |

| 14 days | 0.250 |

| 28 days | 0.063 |

| 42 days | 0.016 |

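The six-week MIND window (Oct 12–Nov 22) means a click from the start of the window would receive weight ≈ 0.016 relative to the most recent clicks, which is effectively negligible. This mirrors real editorial news consumption where interests shift week-to-week. Note that the decay here is applied to train_clicks only: the sample timestamps printed earlier fall between roughly 9 and 14 November 2019, so if that range is representative, the oldest training click still keeps a weight above 0.5.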

Alternative half-lives to consider: Shorter (3 days) captures breaking-news spikes; longer (14 days) suits evergreen topic interests (e.g. a user researching a health condition over two weeks). The 7-day default is a reasonable starting point for general news.
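The weight table and the effect of the half-life can be reproduced with a few lines. This is a minimal sketch; `recency_weight` is a hypothetical helper that mirrors the formula above, whereas the notebook applies the same expression vectorised with NumPy.

```python
import math

def recency_weight(days_ago, half_life_days=7.0):
    """w = exp(-k * days_ago) with k = ln(2) / half_life."""
    k = math.log(2) / half_life_days
    return math.exp(-k * days_ago)

# Reproduce the table for the 7-day half-life
for days in (0, 3.5, 7, 14, 28, 42):
    print(f"{days:>4} days -> {recency_weight(days):.3f}")

# Effect of the half-life on a 7-day-old click
for hl in (3, 7, 14):
    print(f"half-life {hl:>2}d -> {recency_weight(7, hl):.3f}")
```

A shorter half-life discounts the same week-old click more heavily, which is the trade-off between reacting to breaking news and retaining longer-running interests.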

# Feature column list — all base LGB and meta-ranker base features

FEATURE_COLS = ['u_click_count', 'u_click_freq', # user engagement

'm_log_clicks', 'm_log_impr', # article global popularity

'm_article_len', # article length

'cat_affinity', 'taste_affinity', # collaborative signals

'tfidf_sim', # content similarity (full history centroid)

'recent_tfidf_sim', # content similarity (last-20 clicks centroid)

'article_age_days', # news recency

'ctr_norm_rank', # rank by CTR within impression (0=most popular)

'imp_size', # number of candidates in impression

'subcat_clicks'] # user click count for this sub-category

# Select num candidates for training

K_CAND = 200

rng_feat = np.random.default_rng(100)

print(f'FEATURE_COLS ({len(FEATURE_COLS)}): {FEATURE_COLS}')

FEATURE_COLS (13): ['u_click_count', 'u_click_freq', 'm_log_clicks', 'm_log_impr', 'm_article_len', 'cat_affinity', 'taste_affinity', 'tfidf_sim', 'recent_tfidf_sim', 'article_age_days', 'ctr_norm_rank', 'imp_size', 'subcat_clicks']

# Split training users 70/30: SET_A trains the base LGB, SET_B supplies out-of-fold rows for the meta-ranker

rng_oof = np.random.default_rng(42)

all_train_users = np.array(list(train_users & set(user_stats.index)))

rng_oof.shuffle(all_train_users)

split_idx = int(len(all_train_users) * 0.70)

SET_A_users = set(all_train_users[:split_idx])

SET_B_users = set(all_train_users[split_idx:])

print(f'Training users total : {len(all_train_users):,}')

print(f' SET_A (base LGB) : {len(SET_A_users):,}')

print(f' SET_B (meta OOF) : {len(SET_B_users):,}')

# user_click_sets is needed by s3_itemcf and training loops

user_click_sets = train_clicks.groupby('userId')['newsId'].apply(set).to_dict()

Training users total : 50,000

SET_A (base LGB) : 35,000

SET_B (meta OOF) : 15,000

# Pre-compile feature dicts (O(1) lookups at scoring time)

art_pos = {a: i for i, a in enumerate(article_cat_idx)}

taste_pos = {a: i for i, a in enumerate(taste_article_idx)}

af_log_clicks = article_feat['log_clicks'].to_dict()

af_log_impr = article_feat['log_impr'].to_dict()

af_bayesian_ctr = article_feat['bayesian_ctr'].to_dict()

af_article_len = article_feat['article_len'].to_dict()

af_article_age = article_feat['article_age_days'].to_dict()

us_click_count = user_stats['click_count'].to_dict()

us_click_freq = user_stats['click_freq'].to_dict()

newsid_to_subcat = news.set_index('newsId')['subCategory'].to_dict()

%%time

# Build training pairs from actual MIND impression rows (SET_A users only).

# Each impression is one ranking query; every article shown is a candidate;

# the click label is the ground truth. This aligns train and eval distributions.

print('Parsing training impressions for SET_A users...', end = ' ', flush = True)

# Init

imp_rows = []

# Iterate

for _, r in raw_train.iterrows():

    uid = r['userId']

    if uid not in SET_A_users:

        continue

    imp_id = r['impressionId']

    if pd.notna(r['impressions']):

        for pair in str(r['impressions']).split():

            nid, lbl = pair.rsplit('-', 1)

            imp_rows.append((imp_id, uid, str(nid), int(lbl)))

# Compile the iterations

imp_train_df = pd.DataFrame(imp_rows, columns = ['impressionId','userId','newsId','label'])

del imp_rows; gc.collect()

n_pos = int(imp_train_df['label'].sum())

print(f'done ({len(imp_train_df):,} rows | {imp_train_df["impressionId"].nunique():,} impressions | '

f'pos={n_pos:,} neg={len(imp_train_df)-n_pos:,})')

# Merge user fts

imp_train_df = imp_train_df.join(user_stats[['click_count','click_freq']].rename(columns = {'click_count':'u_click_count','click_freq':'u_click_freq'}), on = 'userId')

imp_train_df = imp_train_df.join(article_feat[['log_clicks','log_impr','bayesian_ctr','article_len','article_age_days']].rename(columns = {'log_clicks':'m_log_clicks','log_impr':'m_log_impr', 'bayesian_ctr':'m_bayesian_ctr','article_len':'m_article_len', 'article_age_days':'article_age_days'}), on = 'newsId')

# Merge category and taste affinity

newsid_to_cat = news.set_index('newsId')['category'].to_dict()

imp_train_df['category'] = imp_train_df['newsId'].map(newsid_to_cat)

relevant_users = imp_train_df['userId'].unique()

uca_long = (user_cat_affinity.reindex(index=relevant_users).stack().reset_index().rename(columns={'level_0':'userId','level_1':'category',0:'cat_affinity'}))

imp_train_df = imp_train_df.merge(uca_long, on = ['userId','category'], how = 'left')

del uca_long

uta_long = (user_taste_norm.reindex(index=relevant_users).stack().reset_index().rename(columns = {'level_0':'userId','level_1':'category',0:'taste_affinity'}))

imp_train_df = imp_train_df.merge(uta_long, on = ['userId','category'], how = 'left')

del uta_long; gc.collect()

# Compute the tf-idf similarities

print('Computing TF-IDF affinities...', end = ' ', flush = True)

uid_nid_sim = {}

for uid, grp in imp_train_df.groupby('userId'):

    centroid = user_tfidf_centroids.get(uid)

    if centroid is None:

        continue

    nids = grp['newsId'].unique()

    # Keep only articles that have a row in the TF-IDF matrix

    valid = [(nid, tfidf_idx[nid]) for nid in nids if nid in tfidf_idx]

    if not valid:

        continue

    v_nids, v_idxs = zip(*valid)

    sims = np.asarray(tfidf_mat[list(v_idxs)].dot(centroid)).ravel()

    for nid, sim in zip(v_nids, sims):

        uid_nid_sim[(uid, nid)] = float(sim)

imp_train_df['tfidf_sim'] = [uid_nid_sim.get((r.userId, r.newsId), 0.0) for r in imp_train_df.itertuples()]

del uid_nid_sim; gc.collect()

print('done.')

# recent_tfidf_sim — centroid of user's last 20 clicks

print('Computing recent TF-IDF affinities...', end = ' ', flush = True)

uid_nid_recent_sim = {}

for uid, grp in imp_train_df.groupby('userId'):

    centroid = user_recent_tfidf_centroids.get(uid)

    if centroid is None:

        continue

    valid = [(nid, tfidf_idx[nid]) for nid in grp['newsId'].unique() if nid in tfidf_idx]

    if not valid:

        continue

    v_nids, v_idxs = zip(*valid)

    sims = np.asarray(tfidf_mat[list(v_idxs)].dot(centroid)).ravel()

    for nid, sim in zip(v_nids, sims):

        uid_nid_recent_sim[(uid, nid)] = float(sim)

imp_train_df['recent_tfidf_sim'] = [uid_nid_recent_sim.get((r.userId, r.newsId), 0.0) for r in imp_train_df.itertuples()]

del uid_nid_recent_sim; gc.collect()

print('done.')

# subcat_clicks — user click count for candidate's specific sub-category

imp_train_df['_subcat'] = imp_train_df['newsId'].map(newsid_to_subcat)

_subcat_lkp = pd.DataFrame([(u, sc, cnt) for (u, sc), cnt in user_subcat_clicks.items()], columns = ['userId', '_subcat', 'subcat_clicks'])

imp_train_df = imp_train_df.merge(_subcat_lkp, on=['userId', '_subcat'], how='left')

imp_train_df['subcat_clicks'] = imp_train_df['subcat_clicks'].fillna(0).astype('float32')

imp_train_df.drop(columns=['_subcat'], inplace=True)

del _subcat_lkp

# Within-impression context features

imp_train_df['imp_size'] = (imp_train_df.groupby('impressionId')['newsId'].transform('count').astype('float32'))

imp_train_df['ctr_norm_rank'] = (imp_train_df.groupby('impressionId')['m_bayesian_ctr'].transform(lambda x: (x.rank(ascending=False, method='average') - 1).div(max(1, len(x) - 1))).astype('float32'))

imp_train_df[FEATURE_COLS] = imp_train_df[FEATURE_COLS].fillna(0).astype('float32')

print(f'imp_train_df shape: {imp_train_df.shape}')

Parsing training impressions for SET_A users... done (4,090,484 rows | 110,162 impressions | pos=165,852 neg=3,924,632)

Computing TF-IDF affinities... done.

Computing recent TF-IDF affinities... done.

imp_train_df shape: (4090484, 19)

CPU times: user 1min 24s, sys: 1.27 s, total: 1min 25s

Wall time: 1min 25s
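To make the parsing step concrete, here is a minimal sketch of how a single impression string expands into labelled rows. The helper name `parse_impressions` and the sample IDs are hypothetical; only the `newsId-label` pair format and the `rsplit('-', 1)` call come from the cell above.

```python
def parse_impressions(impression_id, user_id, impressions):
    """Expand one impression string into (impressionId, userId, newsId, label) rows."""
    rows = []
    for pair in impressions.split():
        # Split on the LAST hyphen so a hyphen inside the news ID would survive
        news_id, label = pair.rsplit("-", 1)
        rows.append((impression_id, user_id, news_id, int(label)))
    return rows


# Hypothetical impression: three articles shown, the second one clicked
rows = parse_impressions(1, "U1234", "N5001-0 N5002-1 N5003-0")
for row in rows:
    print(row)
```

Each impression contributes one row per article shown, which is why roughly 110 K impressions expand into about 4.1 M training rows.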

imp_train_df.head()

| | impressionId | userId | newsId | label | u_click_count | u_click_freq | m_log_clicks | m_log_impr | m_bayesian_ctr | m_article_len | article_age_days | category | cat_affinity | taste_affinity | tfidf_sim | recent_tfidf_sim | subcat_clicks | imp_size | ctr_norm_rank |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 3 | U73700 | N50014 | 0 | 3.0000 | 1.8087 | 3.8067 | 8.2895 | 0.0114 | 163.0000 | 1.1812 | sports | 0.8944 | 0.8616 | 0.0236 | 0.0236 | 0.0000 | 36.0000 | 1.0000 |

| 1 | 3 | U73700 | N23877 | 0 | 3.0000 | 1.8087 | 6.4232 | 9.3310 | 0.0545 | 340.0000 | 0.7478 | news | 0.0000 | 0.0000 | 0.0219 | 0.0219 | 0.0000 | 36.0000 | 0.2857 |

| 2 | 3 | U73700 | N35389 | 0 | 3.0000 | 1.8087 | 5.5053 | 8.1259 | 0.0720 | 244.0000 | 1.1917 | finance | 0.0000 | 0.0000 | 0.0423 | 0.0423 | 0.0000 | 36.0000 | 0.0857 |

| 3 | 3 | U73700 | N49712 | 0 | 3.0000 | 1.8087 | 6.2305 | 8.9469 | 0.0658 | 290.0000 | 0.8228 | news | 0.0000 | 0.0000 | 0.0161 | 0.0161 | 0.0000 | 36.0000 | 0.1429 |

| 4 | 3 | U73700 | N16844 | 0 | 3.0000 | 1.8087 | 5.5294 | 8.4845 | 0.0518 | 278.0000 | 1.1625 | autos | 0.0000 | 0.0000 | 0.0219 | 0.0219 | 0.0000 | 36.0000 | 0.3143 |
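The `ctr_norm_rank` column is the least self-explanatory feature in this table, so here is a pure-Python sketch of the same calculation on a toy impression. The function name `normalised_desc_rank` is hypothetical; it is meant to mirror `rank(ascending=False, method='average')` followed by the `(rank - 1) / max(1, n - 1)` scaling from the cell above.

```python
def normalised_desc_rank(values):
    """Descending average-method rank, shifted to start at 0 and scaled to [0, 1]."""
    n = len(values)
    result = []
    for v in values:
        higher = sum(1 for other in values if other > v)
        ties = sum(1 for other in values if other == v)
        # A block of tied values occupies ranks higher+1 .. higher+ties; take the mean
        avg_rank = higher + (ties + 1) / 2
        result.append((avg_rank - 1) / max(1, n - 1))
    return result


# Toy impression with four candidates and their Bayesian CTRs
ctrs = [0.0114, 0.0545, 0.0720, 0.0658]
print(normalised_desc_rank(ctrs))
```

The candidate with the highest CTR in the impression gets 0.0 and the lowest gets 1.0, so the feature says where an article stands relative to the other articles it was shown with, regardless of impression size.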

# Training data summary

print(imp_train_df.dtypes)

print(f'\nLabel distribution:\n{imp_train_df["label"].value_counts()}')

impressionId int64

userId object

newsId object

label int64

u_click_count float32

u_click_freq float32

m_log_clicks float32

m_log_impr float32

m_bayesian_ctr float32

m_article_len float32

article_age_days float32

category object

cat_affinity float32

taste_affinity float32

tfidf_sim float32

recent_tfidf_sim float32

subcat_clicks float32

imp_size float32

ctr_norm_rank float32

dtype: object

Label distribution:

label

0 3924632

1 165852

Name: count, dtype: int64
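The `tfidf_sim` and `recent_tfidf_sim` columns listed above are dot products between a candidate article's TF-IDF vector and a per-user centroid. The sketch below illustrates the idea with plain dictionaries as sparse vectors. All names and term weights are hypothetical, and it assumes L2-normalised article vectors, which the notebook's `tfidf_mat` may or may not use.

```python
import math


def l2_normalise(vec):
    """Scale a sparse {term: weight} vector to unit length."""
    norm = math.sqrt(sum(w * w for w in vec.values()))
    return {t: w / norm for t, w in vec.items()} if norm else dict(vec)


def centroid(vectors):
    """Element-wise mean of sparse vectors (the user's content profile)."""
    total = {}
    for vec in vectors:
        for term, w in vec.items():
            total[term] = total.get(term, 0.0) + w
    return {t: w / len(vectors) for t, w in total.items()}


def dot(a, b):
    """Sparse dot product; terms missing from either vector contribute 0."""
    return sum(w * b.get(t, 0.0) for t, w in a.items())


# Toy click history: two sports articles
clicked = [l2_normalise({"nfl": 1.0, "draft": 1.0}),
           l2_normalise({"nfl": 1.0, "injury": 1.0})]
user_centroid = centroid(clicked)

sim_sports = dot(l2_normalise({"nfl": 1.0, "trade": 1.0}), user_centroid)
sim_finance = dot(l2_normalise({"stocks": 1.0, "fed": 1.0}), user_centroid)
print(sim_sports, sim_finance)
```

A candidate that shares vocabulary with the user's clicked articles scores above zero, while one with no overlapping terms scores exactly zero, which matches the `0.0` default used when an article is missing from the index.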

📋 `imp_train_df`: the learning-to-rank training table

Each row is one (user, article, impression) triple from an actual MIND impression session. The `impressionId` groups rows so the ranker knows which candidates competed against each other in the same session:

| impressionId | userId | newsId | label | u_click_count | m_log_clicks | cat_affinity | … | tfidf_sim |

|---|---|---|---|---|---|---|---|---|

| imp-001 | U1234 | N5001 | 1 | 42 | 2.30 | 0.81 | … | 0.67 |

| imp-001 | U1234 | N5002 | 0 | 42 | 1.10 | 0.23 | … | 0.12 |

| imp-001 | U1234 | N5003 | 0 | 42 | 3.45 | 0.61 | … | 0.44 |

| imp-002 | U9876 | N1001 | 0 | 8 | 2.30 | 0.05 | … | 0.31 |

| … | | | | | | | | |

The `impressionId` column becomes the LightGBM query group: the model is told “these rows compete against each other, optimise their relative ordering” via LambdaRank.
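LightGBM's ranking interface takes query groups as a list of group sizes over contiguous rows (the `group` argument) rather than as an ID column, which is why the rows must be sorted by impression first. A minimal sketch of deriving those sizes, using a hypothetical helper `query_group_sizes` and the toy IDs from the table above:

```python
from itertools import groupby


def query_group_sizes(sorted_impression_ids):
    """Count consecutive runs of equal impression IDs (input must already be sorted)."""
    return [sum(1 for _ in run) for _, run in groupby(sorted_impression_ids)]


impression_ids = ["imp-001", "imp-001", "imp-001", "imp-002"]
group_sizes = query_group_sizes(impression_ids)
print(group_sizes)  # [3, 1]
```

The sizes must sum to the number of training rows. If the rows are not sorted, one impression is split into several groups and the ranker compares the wrong candidates with each other.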

6. Evaluation harness & S1–S5 strategies

Five metrics are evaluated at K = 5 and K = 10. The composite score is mean(NDCG@K, Hit-Rate@K); F1 is left out of the composite because it would count Precision and Recall a second time.

| Strategy | Description |

|---|---|

| S1 | Global popularity — Bayesian CTR ranking |

| S2 | Category affinity — dot product of user preferences with article categories |

| S3 | Item-based CF — aggregate neighbour scores from clicked articles |

| S4 | Temporal taste — recency-weighted category preference |

| S5 | LightGBM LambdaRank ranker |

📏 Evaluation Metrics — Quick Reference

All metrics are computed per impression (one ranking query = one session), then averaged across users. K ∈ {5, 10} controls the cutoff — only the top-K predicted articles count.

| Metric | Formula (simplified) | Interpretation |

|---|---|---|

| Precision@K | (# clicked in top-K) / K | Of K articles shown, how many did the user click? |

| Recall@K | (# clicked in top-K) / (# total clicks in session) | Of all clicked articles, how many were in top-K? |

| F1@K | 2 · P · R / (P + R) | Harmonic mean of precision and recall |

| NDCG@K | DCG@K / IDCG@K | Position-weighted relevance; clicked articles ranked first score highest |

| Hit-Rate@K | 1 if ≥ 1 clicked article in top-K else 0 | Did the user find at least one article they liked? |

| Composite | mean(NDCG@K, HR@K) | Summary score used for leaderboard ranking |
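The formulas in the table can be sketched for a single impression as follows. This is an illustrative version, not necessarily the harness used in this post: the function name `metrics_at_k` is hypothetical, relevance is assumed binary (click or no click), and DCG uses the common log2 position discount.

```python
import math


def metrics_at_k(ranked_labels, k):
    """ranked_labels: 0/1 click labels ordered by predicted score, best first."""
    top = ranked_labels[:k]
    hits = sum(top)
    total_clicks = sum(ranked_labels)

    precision = hits / k
    recall = hits / total_clicks if total_clicks else 0.0
    f1 = 2 * precision * recall / (precision + recall) if hits else 0.0

    # DCG discounts each click by log2(position + 1), with positions starting at 1
    dcg = sum(label / math.log2(pos + 2) for pos, label in enumerate(top))
    # Ideal DCG: every click ranked first, capped at K
    idcg = sum(1 / math.log2(pos + 2) for pos in range(min(total_clicks, k)))
    ndcg = dcg / idcg if idcg else 0.0

    hit_rate = 1.0 if hits else 0.0
    return {"precision": precision, "recall": recall, "f1": f1,
            "ndcg": ndcg, "hit_rate": hit_rate,
            "composite": (ndcg + hit_rate) / 2}


# Toy impression: six candidates, clicks ranked 2nd and 5th
result = metrics_at_k([0, 1, 0, 0, 1, 0], k=5)
print({name: round(value, 4) for name, value in result.items()})
```

Both clicks land in the top 5, so Recall and Hit-Rate are 1.0, but NDCG stays below 1.0 because neither click was ranked first.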

Why Composite = mean(NDCG, HR)? Using their mean avoids double-counting the Precision and Recall components that are already captured by F1, while still rewarding both ranked quality (NDCG) and binary coverage (HR).

Why per-impression, not global? Evaluating globally would mix impressions from different sessions and let popular articles dominate. Per-impression evaluation mirrors deployment: the model ranks a specific set of candidates for one user at one moment.
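A small sketch of what per-impression evaluation means in practice: candidates are ranked only against others from the same impression, and the metric is then averaged. The function name and the scores are hypothetical, and averaging over impressions (rather than over users first) is an assumption made to keep the example short.

```python
from collections import defaultdict


def mean_hit_rate_at_k(rows, k):
    """rows: (impression_id, score, label). Rank within each impression, then average."""
    by_impression = defaultdict(list)
    for impression_id, score, label in rows:
        by_impression[impression_id].append((score, label))

    hits = []
    for candidates in by_impression.values():
        top = sorted(candidates, key=lambda c: c[0], reverse=True)[:k]
        hits.append(1.0 if any(label for _, label in top) else 0.0)
    return sum(hits) / len(hits)


rows = [
    ("imp-001", 0.9, 1), ("imp-001", 0.8, 0),  # clicked article ranked first
    ("imp-002", 0.3, 0), ("imp-002", 0.2, 1),  # clicked article ranked second
]
print(mean_hit_rate_at_k(rows, k=1))  # 0.5
```

A global top-1 over all four rows would select only the first row of `imp-001` and never examine `imp-002`, hiding the miss that the per-impression average exposes.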

%%time

# LambdaRank objective with per-impression query groups: LambdaMART optimises an NDCG-weighted pairwise loss within each impression list

# Sort by impressionId so groups are contiguous

imp_train_df = imp_train_df.sort_values('impressionId').reset_index(drop = True)

# 85 / 15 impression-level split (no leakage across impression boundaries)