MovieLens User Data: Machine Learning Recommendation Engine

Published:

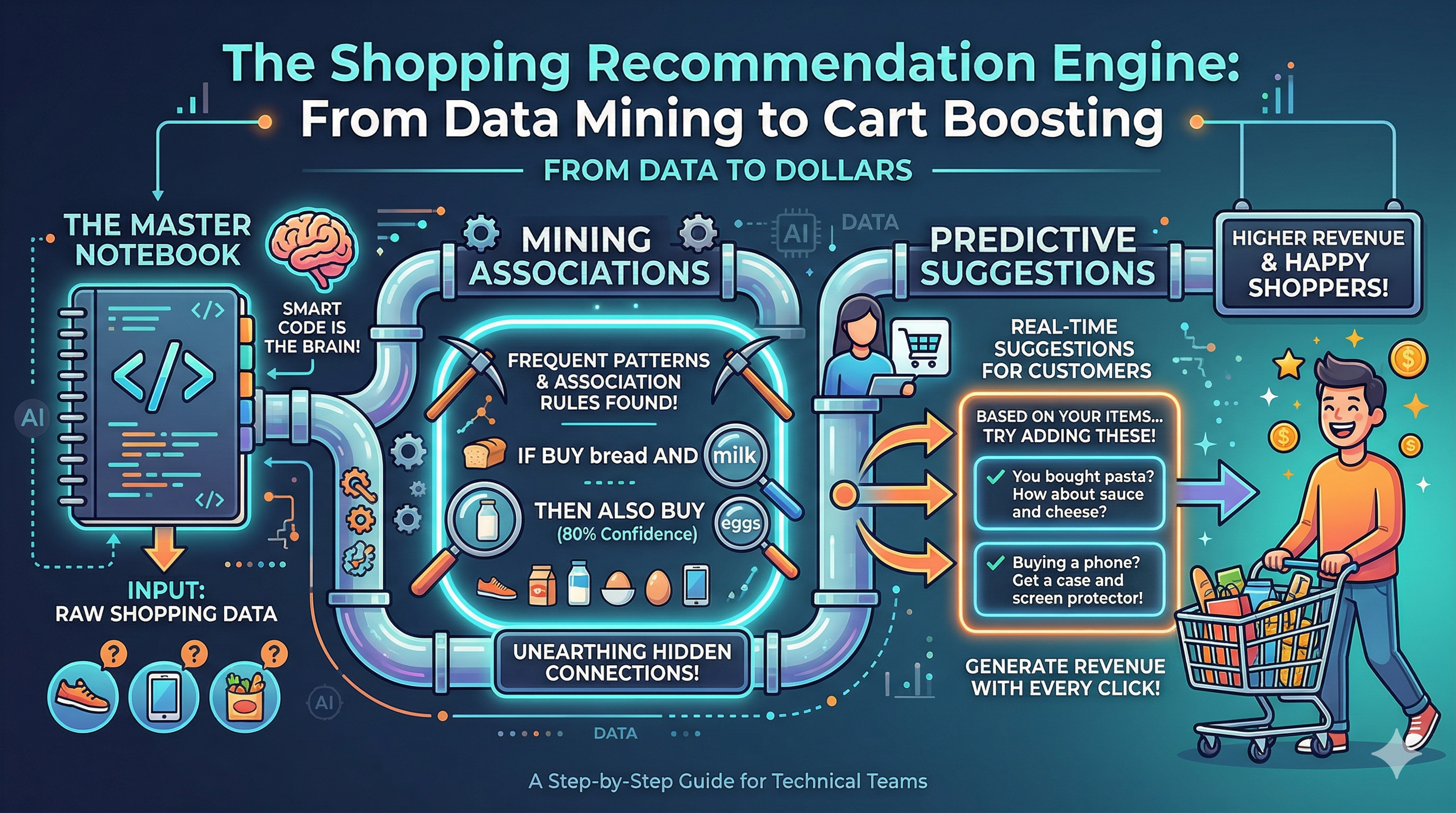

In this post, we take the MovieLens 32M User data and build a series of recommendation algorithms that are then combined to yield a master ensemble strategy which ens up winning in terms of performance metrics scores

🎬 MovieLens 32M — Two-Stage Generate-&-Rerank Recommendation Ensemble Model to Generate Recommendations

Data: Download

ml-32m.zipfrom https://grouplens.org/datasets/movielens/32m/, mount your Google Drive, and pointZIP_PATHto the archive. All other paths are derived automatically.

In the last study we examined five standalone recommendation strategies applied to InstaCart shopping data.

Here we assess a combined ensemble strategy — S6 — that draws on all five priors simultaneously to produce more accurate top-N movie recommendations.

📋 Table of Contents

1. Setup & data loading

📖 Dataset & Problem Framing

What the data is. The MovieLens 32M dataset contains 32,000,204 explicit 5-star ratings (in 0.5-star increments) and 2,000,072 tag applications across 87,585 movies by 200,948 users, collected between January 1995 and October 2023.

| File | Key columns | Role |

|---|---|---|

ratings.csv | userId, movieId, rating, timestamp | Primary signal |

movies.csv | movieId, title, genres | Item metadata |

tags.csv | userId, movieId, tag, timestamp | Auxiliary text signal |

links.csv | movieId, imdbId, tmdbId | External enrichment (optional) |

Task framing. Given a user’s historical rating behaviour, predict which movies they will rate highly (≥ 4.0 stars) that they have not yet seen. Ratings below 4.0 are treated as implicit negatives; ≥ 4.0 as positives.

Train/test split strategy. For each user, all ratings before the 90th-percentile timestamp are training data; ratings at or after form the ground truth. This preserves the causal structure and avoids leakage.

Memory strategy. Downcast userId / movieId to int32, rating to float32. Free large intermediates with del + gc.collect() after their last usage point.

# Import libraries

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import matplotlib.patches as mpatches

import seaborn as sns

import zipfile, gc, time, warnings, os

from collections import defaultdict, Counter

from itertools import combinations

import lightgbm as lgb

from xgboost import XGBClassifier

from sklearn.model_selection import GroupShuffleSplit, train_test_split

from sklearn.metrics import roc_auc_score, average_precision_score

from scipy.sparse import csr_matrix

from sklearn.preprocessing import normalize

from google.colab import drive

from matplotlib.gridspec import GridSpec

from joblib import Parallel, delayed

warnings.filterwarnings('ignore')

plt.style.use('seaborn-v0_8-whitegrid')

sns.set_palette('husl')

pd.set_option('display.float_format', '{:.4f}'.format)

pd.set_option('display.max_columns', None)

print('✅ Libraries loaded')

✅ Libraries loaded

# Read the data

drive.mount('/content/drive/')

Mounted at /content/drive/

# Define functions to compute metrics

def precision_at_k(recs, true_set, k):

return len(set(recs[:k]) & true_set) / k if k else 0.0

def recall_at_k(recs, true_set, k):

return len(set(recs[:k]) & true_set) / len(true_set) if true_set else 0.0

def f1_at_k(recs, true_set, k):

p = precision_at_k(recs, true_set, k)

r = recall_at_k(recs, true_set, k)

return 2*p*r/(p+r) if (p+r) > 0 else 0.0

def ndcg_at_k(recs, true_set, k):

dcg = sum(1/np.log2(i+2) for i, m in enumerate(recs[:k]) if m in true_set)

ideal = sum(1/np.log2(i+2) for i in range(min(len(true_set), k)))

return dcg/ideal if ideal else 0.0

def score_recs(recs, true_set, K):

return {

'precision': precision_at_k(recs, true_set, K),

'recall' : recall_at_k(recs, true_set, K),

'f1' : f1_at_k(recs, true_set, K),

'ndcg' : ndcg_at_k(recs, true_set, K),

'hit_rate' : 1 if any(m in true_set for m in recs[:K]) else 0,

}

def evaluate_strategy(fn, data_df, K=10, n=None, seed=42):

rows = data_df if n is None else data_df.sample(n=n, random_state=seed)

m = {k: [] for k in ('precision','recall','f1','ndcg','hit_rate')}

for _, row in rows.iterrows():

s = score_recs(fn(row['userId'], K=K), row['true_items'], K)

for key in m: m[key].append(s[key])

return {k: float(np.mean(v)) for k, v in m.items()}

# ── Lookup caches ─────────────────────────────────────────────────────────

# _seen_cache : user_id -> set of seen movie IDs

# _s2_score_cache : user_id -> precomputed S2 genre score vector (n_movies,)

# _s4_score_cache : user_id -> precomputed S4 taste score vector (n_movies,)

#

# All three are initialised empty here and populated before they are needed.

# When a score cache is empty (e.g. during training pair construction, which

# uses its own batch arrays), the functions fall back to computing on the fly.

_seen_cache = {}

_s2_score_cache = {}

_s4_score_cache = {}

def get_seen(user_id):

return _seen_cache.get(user_id, set())

# Function to compute global popularity

def s1_popularity(user_id, K=10, **_):

seen = get_seen(user_id)

return [m for m in POPULARITY_POOL if m not in seen][:K]

# Function to compute genre-aware personal history

def s2_personal(user_id, K=10, **_):

seen = get_seen(user_id)

if user_id not in user_genre_affinity.index:

return s1_popularity(user_id, K=K)

# Use precomputed score vector when available (evaluation path); fall back to on-the-fly matmul otherwise (any ad-hoc call).

if user_id in _s2_score_cache:

scores = _s2_score_cache[user_id]

else:

u_genre = user_genre_affinity.loc[user_id].values

u_norm = np.linalg.norm(u_genre)

if u_norm == 0:

return s1_popularity(user_id, K=K)

scores = movie_genre_norm @ (u_genre / u_norm)

top_k = np.argpartition(scores, -K)[-K:]

top_k = top_k[np.argsort(scores[top_k])[::-1]]

ranked = [genre_index_order[i] for i in top_k if genre_index_order[i] not in seen]

if len(ranked) >= K:

return ranked[:K]

# Pad with additional candidates if top-K after filtering is short

all_ranked = [genre_index_order[i] for i in np.argsort(scores)[::-1] if genre_index_order[i] not in seen]

return all_ranked[:K]

# Function to compute collaborative filtering

def s3_item_cf(user_id, K=10, **_):

seen = get_seen(user_id)

liked = set(user_liked.get(user_id, []))

if not liked:

return s1_popularity(user_id, K=K)

scores = defaultdict(float)

for mid in liked:

for neighbour, sim in item_sim_lookup.get(mid, []):

if neighbour not in seen:

scores[neighbour] += sim

ranked = sorted(scores, key=scores.get, reverse=True)

if len(ranked) >= K:

return ranked[:K]

extra = [m for m in s2_personal(user_id, K=K*3) if m not in set(ranked)]

return (ranked + extra)[:K]

# Function to compute temporal taste drift

def s4_temporal(user_id, K=10, **_):

seen = get_seen(user_id)

# Use precomputed score vector when available (evaluation path); fall back to on-the-fly matmul otherwise.

if user_id in _s4_score_cache:

sims = _s4_score_cache[user_id]

else:

taste_vec = user_taste_vec.get(user_id)

if taste_vec is None:

return s1_popularity(user_id, K=K)

sims = movie_genre_norm @ taste_vec

top_k = np.argpartition(sims, -K)[-K:]

top_k = top_k[np.argsort(sims[top_k])[::-1]]

ranked = [genre_index_order[i] for i in top_k if genre_index_order[i] not in seen]

if len(ranked) >= K:

return ranked[:K]

all_ranked = [genre_index_order[i] for i in np.argsort(sims)[::-1] if genre_index_order[i] not in seen]

return all_ranked[:K]

# Function to build features

def build_lgb_features(user_id, candidate_movies):

if not candidate_movies:

return pd.DataFrame(columns = FEATURE_COLS)

mf = movie_feat.reindex(candidate_movies)

mg = movie_genre.reindex(candidate_movies).fillna(0).values.astype('float32')

mg_norms = np.linalg.norm(mg, axis=1, keepdims=True).clip(min=1e-9)

mg_norm = mg / mg_norms

taste_v = user_taste_vec.get(user_id, np.zeros(len(genre_cols), dtype='float32'))

genre_affinity = mg_norm @ taste_v

u_row = user_stats.loc[user_id] if user_id in user_stats.index else None

return pd.DataFrame({'userId' : user_id,

'movieId' : candidate_movies,

'u_rating_count' : float(u_row['rating_count']) if u_row is not None else 0.0,

'u_mean_rating' : float(u_row['mean_rating']) if u_row is not None else GLOBAL_MEAN,

'u_rating_freq' : float(u_row['rating_freq']) if u_row is not None else 0.0,

'u_rating_std' : float(u_row['rating_std']) if u_row is not None else 0.0,

'm_global_count' : mf['global_count'].fillna(0).values,

'm_log_count' : mf['log_count'].fillna(0).values,

'm_global_mean' : mf['global_mean'].fillna(GLOBAL_MEAN).values,

'm_bayesian' : mf['bayesian_score'].fillna(GLOBAL_MEAN).values,

'm_rating_variance' : mf['rating_variance'].fillna(0).values,

'm_tag_count' : mf['tag_count'].fillna(0).values,

'genre_affinity' : genre_affinity,})

# Function to perform lgb recommendations

def s5_lgbm(user_id, K = 10, n_candidates = 200, **_):

seen = get_seen(user_id)

candidates = list(dict.fromkeys(s2_personal(user_id, K = n_candidates) + s3_item_cf( user_id, K = n_candidates) + s4_temporal(user_id, K=n_candidates)))

candidates = [m for m in candidates if m not in seen][:n_candidates]

if not candidates:

return s1_popularity(user_id, K=K)

feat_df = build_lgb_features(user_id, candidates)

feat_df['score'] = lgb_model.predict(feat_df[FEATURE_COLS])

return feat_df.sort_values('score', ascending = False)['movieId'].tolist()[:K]

# Function to build second-stage features

def build_stage2_features(user_id, candidates, s2_list, s3_list, s4_list):

s2r = {m: r for r, m in enumerate(s2_list)}

s3r = {m: r for r, m in enumerate(s3_list)}

s4r = {m: r for r, m in enumerate(s4_list)}

base = build_lgb_features(user_id, candidates)

base['in_s2'] = base['movieId'].isin(s2r).astype('int8')

base['in_s3'] = base['movieId'].isin(s3r).astype('int8')

base['in_s4'] = base['movieId'].isin(s4r).astype('int8')

base['rank_s2'] = base['movieId'].map(s2r).fillna(N_STAGE1).astype('int16')

base['rank_s3'] = base['movieId'].map(s3r).fillna(N_STAGE1).astype('int16')

base['rank_s4'] = base['movieId'].map(s4r).fillna(N_STAGE1).astype('int16')

base['n_retrievers']= base[['in_s2','in_s3','in_s4']].sum(axis=1).astype('int8')

base['s5_score'] = lgb_model.predict(base[FEATURE_COLS]).astype('float32')

return base

# Create a check to see if there is user history

def is_cold(user_id):

return len(user_liked.get(user_id, [])) < COLD_START_THRESHOLD

# Function to get stage 1 candidates

def stage1_candidates(user_id):

seen = get_seen(user_id)

pool = list(dict.fromkeys(s2_personal(user_id, K=N_STAGE1) + s3_item_cf( user_id, K=N_STAGE1) + s4_temporal(user_id, K = N_STAGE1)))

return [m for m in pool if m not in seen]

# Function to get ensemble recommendations

def s7_ensemble(user_id, K = 10, model = 'lgb', **_):

if is_cold(user_id):

return s1_popularity(user_id, K = K)

s2l = s2_personal(user_id, K=N_STAGE1)

s3l = s3_item_cf( user_id, K=N_STAGE1)

s4l = s4_temporal(user_id, K=N_STAGE1)

candidates = list(dict.fromkeys(s2l + s3l + s4l))

if not candidates:

return s1_popularity(user_id, K = K)

feat = build_stage2_features(user_id, candidates, s2l, s3l, s4l)

if model == 'lgb':

feat['score'] = meta_lgb.predict(feat[STAGE2_FEATURE_COLS])

else:

feat['score'] = meta_xgb.predict_proba(feat[STAGE2_FEATURE_COLS])[:, 1]

return feat.sort_values('score', ascending=False)['movieId'].tolist()[:K]

def s6_xgboost(user_id, K = 10, **_):

return s7_ensemble(user_id, K = K, model = 'xgb')

# Load the data

data = {}

files = [i for i in os.listdir('/content/drive/MyDrive/ml-32m/')]

file_list = [f for f in files if f.endswith('.csv')]

print(f'Files in archive: {file_list}\n')

for fn in file_list:

key = fn.replace('.csv', '')

data[key] = pd.read_csv(f"/content/drive/MyDrive/ml-32m/{fn}")

print(f' ✓ {fn}: {data[key].shape[0]:,} rows × {data[key].shape[1]} cols')

print('\n✅ All datasets loaded')

Files in archive: ['ratings.csv', 'tags.csv', 'movies.csv', 'links.csv']

✓ ratings.csv: 32,000,204 rows × 4 cols

✓ tags.csv: 2,000,072 rows × 4 cols

✓ movies.csv: 87,585 rows × 3 cols

✓ links.csv: 87,585 rows × 3 cols

✅ All datasets loaded

# Downcast to save ~40% RAM

for col in ['userId', 'movieId']:

data['ratings'][col] = data['ratings'][col].astype('int32')

data['tags'][col] = data['tags'][col].astype('int32')

data['movies']['movieId'] = data['movies']['movieId'].astype('int32')

data['ratings']['rating'] = data['ratings']['rating'].astype('float32')

# Extract the data into objects

ratings = data['ratings']

movies = data['movies']

tags = data['tags']

movies.head()

| movieId | title | genres | |

|---|---|---|---|

| 0 | 1 | Toy Story (1995) | Adventure|Animation|Children|Comedy|Fantasy |

| 1 | 2 | Jumanji (1995) | Adventure|Children|Fantasy |

| 2 | 3 | Grumpier Old Men (1995) | Comedy|Romance |

| 3 | 4 | Waiting to Exhale (1995) | Comedy|Drama|Romance |

| 4 | 5 | Father of the Bride Part II (1995) | Comedy |

tags.head()

| userId | movieId | tag | timestamp | |

|---|---|---|---|---|

| 0 | 22 | 26479 | Kevin Kline | 1583038886 |

| 1 | 22 | 79592 | misogyny | 1581476297 |

| 2 | 22 | 247150 | acrophobia | 1622483469 |

| 3 | 34 | 2174 | music | 1249808064 |

| 4 | 34 | 2174 | weird | 1249808102 |

ratings.head()

| userId | movieId | rating | timestamp | |

|---|---|---|---|---|

| 0 | 1 | 17 | 4.0000 | 944249077 |

| 1 | 1 | 25 | 1.0000 | 944250228 |

| 2 | 1 | 29 | 2.0000 | 943230976 |

| 3 | 1 | 30 | 5.0000 | 944249077 |

| 4 | 1 | 32 | 5.0000 | 943228858 |

%%time

# Create a temporal training split for modelling

RATING_THRESHOLD = 4.0

# Cutoff users based on time interval, 90%

user_cutoff = (ratings.groupby('userId')['timestamp'].quantile(0.90).rename('cutoff').astype('int32'))

ratings = ratings.join(user_cutoff, on = 'userId')

# Split the data for training

train_ratings = ratings[ratings['timestamp'] < ratings['cutoff']]

test_ratings = ratings[ratings['timestamp'] >= ratings['cutoff']]

# Drop cutoff column immediately - saves ~100 MB across both halves

train_ratings.drop(columns = ['cutoff'], inplace = True)

test_ratings.drop(columns = ['cutoff'], inplace = True)

del ratings, user_cutoff; gc.collect()

# Ground truth: movies rated >= threshold in test, not seen in training

train_seen = train_ratings.groupby('userId')['movieId'].apply(set)

# Function to extract the truth

def get_true_items(grp):

uid = grp.name

liked = set(grp[grp['rating'] >= RATING_THRESHOLD]['movieId'])

return liked - train_seen.get(uid, set())

# Apply func to get data

ground_truth = (test_ratings.groupby('userId').apply(get_true_items).rename('true_movies'))

ground_truth = ground_truth[ground_truth.map(len) > 0]

# Inspect

print(f'Train rows : {len(train_ratings):,} | {train_ratings.memory_usage(deep = True).sum()/1e6:.0f} MB')

print(f'Test rows : {len(test_ratings):,} | {test_ratings.memory_usage(deep = True).sum()/1e6:.0f} MB')

print(f'Eval users : {len(ground_truth):,}')

Train rows : 28,583,599 | 800 MB

Test rows : 3,416,605 | 96 MB

Eval users : 192,131

CPU times: user 1min 31s, sys: 3.75 s, total: 1min 35s

Wall time: 1min 36s

ground_truth.head()

| true_movies | |

|---|---|

| userId | |

| 1 | {1056, 1952, 2020, 645, 2313, 1228, 2125, 302,... |

| 2 | {520, 616, 362, 783, 594} |

| 3 | {4995, 5380, 1957, 2150, 11, 3248, 1873, 2353,... |

| 4 | {2841} |

| 5 | {480, 356, 454, 364, 110} |

# Create a global popularity pool to use as a cold-start ratings guide

pop_stats = (train_ratings[train_ratings['rating'] >= RATING_THRESHOLD].groupby('movieId').agg(pos_count = ('rating','count'), mean_rating = ('rating','mean')).sort_values(['pos_count','mean_rating'], ascending = False))

POPULARITY_POOL = pop_stats.index.tolist()

print(f'Popularity pool: {len(POPULARITY_POOL):,} movies')

pop_stats.head(10).join(movies.set_index('movieId')['title'])

Popularity pool: 50,644 movies

| pos_count | mean_rating | title | |

|---|---|---|---|

| movieId | |||

| 318 | 84861 | 4.6437 | Shawshank Redemption, The (1994) |

| 296 | 73438 | 4.6011 | Pulp Fiction (1994) |

| 2571 | 69017 | 4.5596 | Matrix, The (1999) |

| 356 | 68815 | 4.5235 | Forrest Gump (1994) |

| 593 | 67138 | 4.5071 | Silence of the Lambs, The (1991) |

| 260 | 60648 | 4.5635 | Star Wars: Episode IV - A New Hope (1977) |

| 2959 | 58522 | 4.5773 | Fight Club (1999) |

| 527 | 55945 | 4.5847 | Schindler's List (1993) |

| 1196 | 52583 | 4.5564 | Star Wars: Episode V - The Empire Strikes Back... |

| 4993 | 51969 | 4.5687 | Lord of the Rings: The Fellowship of the Ring,... |

# Create a global popularity pool

GLOBAL_MEAN = float(train_ratings['rating'].mean())

pop_stats = (train_ratings[train_ratings['rating'] >= RATING_THRESHOLD].groupby('movieId').agg(pos_count = ('rating','count'), mean_rating = ('rating','mean')).sort_values(['pos_count','mean_rating'], ascending = False))

POPULARITY_POOL = pop_stats.index.tolist()

print(f'Global mean : {GLOBAL_MEAN:.4f}')

print(f'Popularity pool : {len(POPULARITY_POOL):,} movies')

pop_stats.head(8).join(movies.set_index('movieId')['title'])

Global mean : 3.5460

Popularity pool : 50,644 movies

| pos_count | mean_rating | title | |

|---|---|---|---|

| movieId | |||

| 318 | 84861 | 4.6437 | Shawshank Redemption, The (1994) |

| 296 | 73438 | 4.6011 | Pulp Fiction (1994) |

| 2571 | 69017 | 4.5596 | Matrix, The (1999) |

| 356 | 68815 | 4.5235 | Forrest Gump (1994) |

| 593 | 67138 | 4.5071 | Silence of the Lambs, The (1991) |

| 260 | 60648 | 4.5635 | Star Wars: Episode IV - A New Hope (1977) |

| 2959 | 58522 | 4.5773 | Fight Club (1999) |

| 527 | 55945 | 4.5847 | Schindler's List (1993) |

2. Exploratory data analysis

📖 Understanding the data before modelling

This section answers eight diagnostic questions that directly motivate modelling decisions later:

| Question | Modelling implication |

|---|---|

| How are ratings distributed across the 0.5–5.0 scale? | Validates our 4.0 ‘liked’ threshold |

| How many ratings does a typical user have? | Informs cold-start threshold |

| How many ratings does a typical movie have? | Informs Bayesian smoothing prior |

| Which genres dominate the catalogue? | Explains genre-affinity feature range |

| How do mean ratings differ across genres? | Reveals genre-level rating bias |

| How has rating volume changed over time? | Motivates temporal recency weighting |

| How concentrated is movie popularity? | Reveals long-tail severity |

| What do user rating habits look like? | Identifies lenient vs strict raters |

# Combine train + test for full-dataset EDA stats

n_tr, n_te = len(train_ratings), len(test_ratings)

total_ratings = n_tr + n_te

# Union of user/movie IDs across splits without creating a combined DataFrame

users_tr = set(train_ratings['userId'].unique())

users_te = set(test_ratings['userId'].unique())

movies_tr = set(train_ratings['movieId'].unique())

movies_te = set(test_ratings['movieId'].unique())

n_users_total = len(users_tr | users_te)

n_movies_rated = len(movies_tr | movies_te)

del users_tr, users_te, movies_tr, movies_te; gc.collect()

global_sum = float(train_ratings['rating'].sum()) + float(test_ratings['rating'].sum())

global_mean_all = global_sum / total_ratings

ts_min = min(int(train_ratings['timestamp'].min()), int(test_ratings['timestamp'].min()))

ts_max = max(int(train_ratings['timestamp'].max()), int(test_ratings['timestamp'].max()))

r_min = min(float(train_ratings['rating'].min()), float(test_ratings['rating'].min()))

r_max = max(float(train_ratings['rating'].max()), float(test_ratings['rating'].max()))

sparsity = 1 - total_ratings / (n_users_total * n_movies_rated)

print('=' * 55)

print(' MOVIELENS 32M - DATASET SNAPSHOT')

print('=' * 55)

print(f' Total ratings : {total_ratings:>12,}')

print(f' Unique users : {n_users_total:>12,}')

print(f' Unique movies rated: {n_movies_rated:>12,}')

print(f' Movies in catalogue: {len(movies):>12,}')

print(f' Date range : {pd.to_datetime(ts_min,unit="s").date()} -> {pd.to_datetime(ts_max,unit="s").date()}')

print(f' Rating scale : {r_min} - {r_max} stars')

print(f' Mean rating : {global_mean_all:.3f}')

print(f' Matrix sparsity : {sparsity:.4%}')

print('=' * 55)

=======================================================

MOVIELENS 32M - DATASET SNAPSHOT

=======================================================

Total ratings : 32,000,204

Unique users : 200,948

Unique movies rated: 84,432

Movies in catalogue: 87,585

Date range : 1995-01-09 -> 2023-10-13

Rating scale : 0.5 - 5.0 stars

Mean rating : 3.540

Matrix sparsity : 99.8114%

=======================================================

# Combine data for vis

all_ratings_eda = pd.concat([train_ratings.assign(split='train'),test_ratings.assign(split='test')], ignore_index = True)

all_ratings_eda['date'] = pd.to_datetime(all_ratings_eda['timestamp'], unit='s')

all_ratings_eda['year'] = all_ratings_eda['date'].dt.year

all_ratings_eda['year_month'] = all_ratings_eda['date'].dt.to_period('M')

print(f'all_ratings_eda: {all_ratings_eda.shape} | '

f'{all_ratings_eda.memory_usage(deep=True).sum()/1e6:.0f} MB')

all_ratings_eda: (32000204, 8) | 3005 MB

# Ratings distribution

fig, axes = plt.subplots(1, 2, figsize=(20, 5))

# Left: count per star value

ax = axes[0]

rating_counts = all_ratings_eda['rating'].value_counts().sort_index()

bars = ax.bar(rating_counts.index, rating_counts.values,

width=0.35, color=sns.color_palette('husl', len(rating_counts)))

ax.axvline(4.0, color='crimson', lw=2, ls='--', label='Liked threshold (4.0)')

ax.set_xlabel('Star Rating')

ax.set_ylabel('Number of Ratings')

ax.set_title('Rating Distribution (raw counts)')

ax.legend()

ax.yaxis.set_major_formatter(plt.FuncFormatter(lambda x, _: f'{x/1e6:.1f}M'))

for bar in bars:

h = bar.get_height()

ax.text(bar.get_x()+bar.get_width()/2, h*1.01,

f'{h/1e6:.1f}M', ha='center', va='bottom', fontsize=7)

# Right: positive label rate vs threshold

ax2 = axes[1]

thresholds = np.arange(0.5, 5.5, 0.5)

pct_liked = [(all_ratings_eda['rating'] >= t).mean() * 100 for t in thresholds]

ax2.plot(thresholds, pct_liked, marker='o', lw=2.5, color='steelblue')

ax2.axvline(4.0, color='crimson', lw=2, ls='--', label='Our threshold (4.0)')

chosen_pct = pct_liked[int((4.0-0.5)/0.5)]

ax2.axhline(chosen_pct, color='crimson', lw=1, ls=':')

ax2.annotate(f'{chosen_pct:.1f}% liked at 4.0',

xy = (4.0, chosen_pct),

xytext = (2.5, chosen_pct-16),

arrowprops = dict(arrowstyle='->', color = 'crimson'),

color = 'crimson',

fontsize = 10)

ax2.set_xlabel('Threshold')

ax2.set_ylabel('% of Ratings Classified as Liked')

ax2.set_title('Positive Label Rate vs Threshold')

ax2.legend()

ax2.set_ylim(0, 105)

plt.suptitle('Rating Scale Analysis', fontsize=14, y=1.02)

plt.tight_layout()

plt.savefig('eda_01_rating_distribution.png', dpi=150, bbox_inches='tight')

plt.show()

print(f'Ratings >= 4.0 stars: {(all_ratings_eda["rating"]>=4.0).mean():.1%} of all ratings')

Ratings >= 4.0 stars: 49.8% of all ratings

# User actvitity distribution

user_rating_counts = all_ratings_eda.groupby('userId')['rating'].count()

fig, axes = plt.subplots(1, 3, figsize=(20, 4))

# Histogram (log y)

ax = axes[0]

ax.hist(user_rating_counts, bins=80, color='steelblue', edgecolor='white', lw=0.3)

ax.set_yscale('log')

ax.set_xlabel('Ratings per User')

ax.set_ylabel('Number of Users (log)')

ax.set_title('User Activity Distribution')

for p in [10, 50, 90]:

v = np.percentile(user_rating_counts, p)

ax.axvline(v, ls='--', lw=1.2, label=f'p{p}={v:.0f}')

ax.legend(fontsize=8)

# CCDF — power-law tail

ax2 = axes[1]

sorted_counts = np.sort(user_rating_counts)[::-1]

rank = np.arange(1, len(sorted_counts)+1)

ax2.loglog(sorted_counts, rank / len(sorted_counts), lw=2, color='darkorange')

ax2.set_xlabel('Ratings per User (log)')

ax2.set_ylabel('P(X >= x) [log]')

ax2.set_title('CCDF — Heavy Tail in User Activity')

ax2.grid(True, which='both', alpha=0.3)

# Activity segments

ax3 = axes[2]

bins_ = [0, 20, 50, 100, 250, 500, int(user_rating_counts.max())+1]

labels_ = ['<20', '20-50', '51-100', '101-250', '251-500', '500+']

seg = pd.cut(user_rating_counts, bins=bins_, labels=labels_)

seg_ct = seg.value_counts().sort_index()

bars3 = ax3.bar(range(len(seg_ct)), seg_ct.values,

color=sns.color_palette('husl', len(seg_ct)),

tick_label=labels_)

ax3.set_xlabel('Ratings per User')

ax3.set_ylabel('Number of Users')

ax3.set_title('User Activity Segments')

for i, v in enumerate(seg_ct.values):

ax3.text(i, v + 200, f'{v:,}', ha='center', va='bottom', fontsize=8)

plt.suptitle('User Activity Analysis', fontsize=14, y=1.02)

plt.tight_layout()

plt.savefig('eda_02_user_activity.png', dpi=150, bbox_inches='tight')

plt.show()

print(f'Median ratings/user : {user_rating_counts.median():.0f}')

print(f'Mean ratings/user : {user_rating_counts.mean():.0f}')

top1 = user_rating_counts.nlargest(int(len(user_rating_counts)*0.01)).sum()

print(f'Top 1% of users account for {top1/user_rating_counts.sum():.1%} of all ratings')

Median ratings/user : 73

Mean ratings/user : 159

Top 1% of users account for 12.6% of all ratings

# Investigate movie popularities

movie_rating_counts = (all_ratings_eda.groupby('movieId')['rating'].count().sort_values(ascending=False))

movie_pop_named = (movie_rating_counts.to_frame('n_ratings').join(movies.set_index('movieId')['title']))

fig, axes = plt.subplots(1, 2, figsize=(20, 5))

# Log-log rank curve

ax = axes[0]

ranks = np.arange(1, len(movie_rating_counts)+1)

ax.loglog(ranks, movie_rating_counts.values, lw=2, color='steelblue')

ax.fill_between(ranks, movie_rating_counts.values, alpha=0.15, color='steelblue')

top1pct = int(len(movie_rating_counts)*0.01)

ax.axvline(top1pct, color='crimson', ls='--', lw=1.5,

label=f'Top 1% = {top1pct:,} movies')

ax.set_xlabel('Movie Rank (log)')

ax.set_ylabel('Number of Ratings (log)')

ax.set_title('The Long Tail: Movie Popularity')

ax.legend(fontsize=9)

# Rating concentration curve

ax2 = axes[1]

cum_pct = movie_rating_counts.cumsum() / movie_rating_counts.sum() * 100

ax2.plot(range(len(cum_pct)), cum_pct.values, lw=2, color='darkorange')

for target in [50, 80, 95]:

idx_pos = int((cum_pct >= target).argmax())

ax2.axhline(target, ls=':', color='grey', lw=1)

ax2.axvline(idx_pos, ls=':', color='grey', lw=1)

ax2.text(idx_pos + 500, target - 4, f'{target}%: {idx_pos:,} movies',

fontsize=8, color='dimgrey')

ax2.set_xlabel('Number of Movies (ranked by popularity)')

ax2.set_ylabel('Cumulative % of All Ratings')

ax2.set_title('Rating Concentration Curve')

plt.suptitle('Movie Popularity Analysis', fontsize=14, y=1.02)

plt.tight_layout()

plt.savefig('eda_03_movie_longtail.png', dpi=150, bbox_inches='tight')

plt.show()

print('Top 10 most-rated movies:')

print(movie_pop_named.head(10).to_string())

Top 10 most-rated movies:

n_ratings title

movieId

318 102929 Shawshank Redemption, The (1994)

356 100296 Forrest Gump (1994)

296 98409 Pulp Fiction (1994)

2571 93808 Matrix, The (1999)

593 90330 Silence of the Lambs, The (1991)

260 85010 Star Wars: Episode IV - A New Hope (1977)

2959 77332 Fight Club (1999)

480 75233 Jurassic Park (1993)

527 73849 Schindler's List (1993)

4993 73122 Lord of the Rings: The Fellowship of the Ring, The (2001)

%%time

# Analyze the genres

movies_exploded = (movies.assign(genre=movies['genres'].str.split('|')).explode('genre').query("genre != '(no genres listed)'"))

genre_ratings = (all_ratings_eda.merge(movies_exploded[['movieId','genre']], on='movieId', how='left').dropna(subset=['genre']))

genre_stats = genre_ratings.groupby('genre').agg(n_ratings = ('rating','count'),

mean_rating = ('rating','mean'),

median_rating = ('rating','median'),

n_movies = ('movieId','nunique'),).sort_values('n_ratings', ascending = False)

CPU times: user 44.7 s, sys: 9.67 s, total: 54.4 s

Wall time: 54.4 s

fig, axes = plt.subplots(1, 2, figsize=(20, 6))

# Volume per genre

ax = axes[0]

palette = sns.color_palette('husl', len(genre_stats))

ax.barh(genre_stats.index, genre_stats['n_ratings'], color=palette[::-1])

ax.invert_yaxis()

ax.xaxis.set_major_formatter(plt.FuncFormatter(lambda x, _: f'{x/1e6:.0f}M'))

ax.set_xlabel('Total Ratings')

ax.set_title('Rating Volume by Genre')

# Mean rating per genre (dot plot)

ax2 = axes[1]

gs_sorted = genre_stats.sort_values('mean_rating')

g_mean = all_ratings_eda['rating'].mean()

colors = ['#2ecc71' if v >= g_mean + 0.15

else '#e74c3c' if v < g_mean - 0.15

else '#3498db' for v in gs_sorted['mean_rating']]

ax2.scatter(gs_sorted['mean_rating'], range(len(gs_sorted)),

s=gs_sorted['n_movies']/15, c=colors, alpha=0.85, zorder=3)

ax2.set_yticks(range(len(gs_sorted)))

ax2.set_yticklabels(gs_sorted.index)

ax2.axvline(g_mean, color='black', ls='--', lw=1.2,

label=f'Global mean ({g_mean:.2f})')

ax2.set_xlabel('Mean Star Rating')

ax2.set_title('Mean Rating by Genre\n(bubble size = # movies in genre)')

ax2.legend(fontsize=9)

plt.suptitle('Genre Analysis', fontsize=14, y=1.02)

plt.tight_layout()

plt.savefig('eda_04_genre_analysis.png', dpi=150, bbox_inches='tight')

plt.show()

print(genre_stats[['n_movies','n_ratings','mean_rating','median_rating']].to_string())

n_movies n_ratings mean_rating median_rating

genre

Drama 33152 13973271 3.6825 4.0000

Comedy 22448 11206926 3.4324 3.5000

Action 9296 9665213 3.4764 3.5000

Thriller 11555 8679464 3.5317 3.5000

Adventure 5156 7590522 3.5234 3.5000

Sci-Fi 4797 5717337 3.4917 3.5000

Romance 10048 5524615 3.5450 3.5000

Crime 6704 5373051 3.6918 4.0000

Fantasy 3784 3702759 3.5122 3.5000

Children 4447 2731841 3.4392 3.5000

Mystery 3894 2615322 3.6731 4.0000

Horror 8468 2492315 3.3072 3.5000

Animation 4586 2214562 3.6153 4.0000

War 2225 1594110 3.7917 4.0000

IMAX 195 1494179 3.5933 4.0000

Musical 1033 1159516 3.5543 4.0000

Western 1489 596654 3.6002 4.0000

Documentary 9103 427353 3.6912 4.0000

Film-Noir 350 304710 3.9158 4.0000

# Genre stats

def movie_level_agg(df):

return df.groupby('movieId').agg(n = ('rating','count'), s=('rating','sum'))

ml_tr = movie_level_agg(train_ratings)

ml_te = movie_level_agg(test_ratings)

ml = ml_tr.add(ml_te, fill_value=0).fillna(0)

ml['mean_rating'] = ml['s'] / ml['n'].clip(lower=1)

del ml_tr, ml_te; gc.collect()

# Explode the movie catalogue by genre

movies_exp = (movies.assign(genre = movies['genres'].str.split('|')).explode('genre').query("genre != '(no genres listed)'")[['movieId','genre']])

genre_movie = movies_exp.merge(ml, on='movieId', how='left').fillna(0)

del ml; gc.collect()

# Genre stats

genre_stats = genre_movie.groupby('genre').agg(n_ratings = ('n', 'sum'),

sum_rating = ('s', 'sum'),

n_movies = ('movieId','nunique'),)

genre_stats['mean_rating'] = genre_stats['sum_rating'] / genre_stats['n_ratings'].clip(lower=1)

genre_stats['median_rating'] = (genre_movie.groupby('genre')['mean_rating'].median())

genre_stats = genre_stats.drop(columns='sum_rating').sort_values('n_ratings', ascending=False)

del genre_movie; gc.collect()

0

# Generate co-occurence rates for the entire catalogue

genre_list_per_movie = (

movies.assign(genre=movies['genres'].str.split('|'))

.set_index('movieId')['genre']

)

top_genres = genre_stats.index[:15].tolist()

g2i = {g: i for i, g in enumerate(top_genres)}

cooc = np.zeros((len(top_genres), len(top_genres)), dtype=np.int32)

for glist in genre_list_per_movie:

gs = [g for g in glist if g in g2i]

for g in gs:

cooc[g2i[g], g2i[g]] += 1

for a, b in combinations(gs, 2):

cooc[g2i[a], g2i[b]] += 1

cooc[g2i[b], g2i[a]] += 1

cooc_df = pd.DataFrame(cooc, index=top_genres, columns=top_genres)

diag = np.diag(cooc).astype(float)

jaccard = cooc / (diag[:, None] + diag[None, :] - cooc + 1e-9)

jaccard_df = pd.DataFrame(jaccard, index=top_genres, columns=top_genres)

fig, axes = plt.subplots(1, 2, figsize = (20, 6))

sns.heatmap(cooc_df/1000, ax=axes[0], cmap='YlOrRd', annot = False, linewidths = 0.3, cbar_kws = {'label': 'Co-occurrences (thousands)'})

axes[0].set_title('Genre Co-occurrence Count')

mask = np.eye(len(top_genres), dtype=bool)

sns.heatmap(jaccard_df, ax=axes[1], cmap='Blues', mask=mask,

annot=True, fmt='.2f', annot_kws={'size':7},

linewidths=0.3, vmin=0, vmax=0.5)

axes[1].set_title('Genre Co-occurrence (Jaccard) 1.0 = always appear together')

plt.suptitle('Genre Co-occurrence in the Catalogue', fontsize=14, y=1.02)

plt.tight_layout()

plt.savefig('eda_05_genre_cooccurrence.png', dpi=150, bbox_inches='tight')

plt.show()

# Temporal trends

SECS_PER_YEAR = 365.2425 * 86400

def year_agg(df):

year = ((df['timestamp'].astype('float32') / SECS_PER_YEAR) + 1970).astype('int16')

tmp = pd.DataFrame({'year': year, 'r': df['rating'], 'u': df['userId']})

out = tmp.groupby('year').agg(n=('r','count'), s=('r','sum'), nu=('u','nunique'))

del tmp, year

return out

yr_tr = year_agg(train_ratings)

yr_te = year_agg(test_ratings)

yearly = pd.DataFrame({

'n_ratings' : yr_tr['n'].add(yr_te['n'], fill_value=0).astype(int),

'sum_rating' : yr_tr['s'].add(yr_te['s'], fill_value=0),

'n_users' : yr_tr['nu'].add(yr_te['nu'], fill_value=0).astype(int), # approx

}).reset_index()

yearly['mean_rating'] = yearly['sum_rating'] / yearly['n_ratings'].clip(lower=1)

del yr_tr, yr_te; gc.collect()

# Monthly (only recent years, parse subset to reduce datetime overhead)

RECENT_TS = int((2013 - 1970) * SECS_PER_YEAR)

def monthly_recent(df, cutoff_ts):

sub = df[df['timestamp'] >= cutoff_ts][['timestamp','rating']].copy()

sub['period'] = pd.to_datetime(sub['timestamp'], unit='s').dt.to_period('M')

out = sub.groupby('period')['rating'].count()

del sub; return out

mo_tr = monthly_recent(train_ratings, RECENT_TS)

mo_te = monthly_recent(test_ratings, RECENT_TS)

monthly = mo_tr.add(mo_te, fill_value=0).reset_index()

monthly.columns = ['period','n_ratings']

monthly['dt'] = monthly['period'].dt.to_timestamp()

del mo_tr, mo_te; gc.collect()

fig = plt.figure(figsize = (24, 9))

gs2 = GridSpec(2, 2, figure=fig, hspace=0.4, wspace=0.32)

ax1 = fig.add_subplot(gs2[0, :])

bars = ax1.bar(yearly['year'], yearly['n_ratings']/1e6,

color=sns.color_palette('husl', len(yearly)))

ax1.set_xlabel('Year'); ax1.set_ylabel('Ratings (millions)')

ax1.set_title('Annual Rating Volume (1995-2023)')

ax1.bar_label(bars, fmt='{:.1f}M', padding=2, fontsize=7)

ax2 = fig.add_subplot(gs2[1, 0])

ax2.plot(monthly['dt'], monthly['n_ratings']/1e3, lw=1.5, color='steelblue')

ax2.fill_between(monthly['dt'], monthly['n_ratings']/1e3, alpha=0.15)

ax2.set_xlabel('Month'); ax2.set_ylabel('Ratings (thousands)')

ax2.set_title('Monthly Volume (2013-2023)')

ax3 = fig.add_subplot(gs2[1, 1])

ax3.plot(yearly['year'], yearly['mean_rating'], marker='o', lw=2, color='darkorange')

ax3.axhline(global_mean_all, ls='--', color='grey', lw=1.2,

label=f'Overall mean ({global_mean_all:.2f})')

ax3.set_ylim(3.2, 4.1); ax3.set_xlabel('Year'); ax3.set_ylabel('Mean Rating')

ax3.set_title('Mean Rating Trend Over Time'); ax3.legend(fontsize=9)

plt.suptitle('Temporal Trends in Rating Activity', fontsize=14, y=1.01)

plt.savefig('eda_06_temporal_trends.png', dpi=150, bbox_inches='tight')

plt.show()

# Perform cold start analysis

lk_tr = train_ratings[train_ratings['rating'] >= RATING_THRESHOLD].groupby('userId')['rating'].count()

lk_te = test_ratings[ test_ratings['rating'] >= RATING_THRESHOLD].groupby('userId')['rating'].count()

user_liked_counts_all = lk_tr.add(lk_te, fill_value=0).astype(int).rename('liked_count')

del lk_tr, lk_te; gc.collect()

COLD_START_THRESHOLD = 5

fig, axes = plt.subplots(1, 2, figsize=(24, 5))

ax = axes[0]

ax.hist(user_liked_counts_all.clip(upper=300), bins=60,

color='mediumseagreen', edgecolor='white', lw=0.3)

for thresh in [5, 10, 20]:

ax.axvline(thresh, ls='--', lw=1.5,

label=f'thresh={thresh} ({(user_liked_counts_all<thresh).mean():.1%} cold)')

ax.set_xlabel('Liked Ratings per User (clipped at 300)')

ax.set_ylabel('Users'); ax.set_title('Liked-Rating Count Distribution')

ax.legend(fontsize=8)

ax2 = axes[1]

thr_range = np.arange(1, 101)

pct_warm = [(user_liked_counts_all >= t).mean()*100 for t in thr_range]

ax2.plot(thr_range, pct_warm, lw=2.5, color='mediumseagreen')

ax2.axvline(COLD_START_THRESHOLD, color='crimson', ls='--', lw=2,

label=f'Chosen = {COLD_START_THRESHOLD}')

chosen_warm = pct_warm[COLD_START_THRESHOLD-1]

ax2.axhline(chosen_warm, color='crimson', ls=':', lw=1)

ax2.annotate(f'{chosen_warm:.1f}% warm',

xy=(COLD_START_THRESHOLD, chosen_warm),

xytext=(COLD_START_THRESHOLD+6, chosen_warm-8),

arrowprops=dict(arrowstyle='->', color='crimson'),

color='crimson', fontsize=10)

ax2.set_xlabel('Minimum Liked Ratings')

ax2.set_ylabel('% Users Classified as Warm')

ax2.set_title('Warm User Rate vs Cold-Start Threshold')

ax2.legend(fontsize=9)

plt.suptitle('Cold-Start Analysis', fontsize = 14, y = 1.02)

plt.tight_layout()

plt.savefig('eda_08_cold_start.png', dpi = 150, bbox_inches = 'tight')

plt.show()

del user_liked_counts_all; gc.collect()

34387

3. Feature engineering

We build three reusable feature tables:

user_stats— per-user: rating count, mean rating, activity span, rating frequencymovie_feat— per-movie: global count, mean rating, Bayesian-smoothed score, genre one-hotuser_genre_affinity— per-user per-genre: average rating given to each genre

Here we lean on explicit rating magnitudes and genre vectors as the primary content signal.

Bayesian-smoothed score: shrinks small-count movies toward the global mean.

\[\text{bayes\_score}(m) = \frac{N_m \cdot \bar{r}_m + C \cdot \bar{r}_{\text{global}}}{N_m + C}\]where $C = 50$ is the prior strength (equivalent rating count).

# Compile user stats

liked_mask = train_ratings['rating'] >= RATING_THRESHOLD

user_liked = (train_ratings[liked_mask].groupby('userId')['movieId'].apply(list))

user_stats = train_ratings.groupby('userId').agg(

rating_count = ('rating','count'),

mean_rating = ('rating','mean'),

rating_std = ('rating','std'),

last_ts = ('timestamp','max'),

first_ts = ('timestamp','min'),)

user_stats['active_days'] = ((user_stats['last_ts'] - user_stats['first_ts']) / 86_400).clip(lower=1).astype('float32')

user_stats['rating_freq'] = (user_stats['rating_count'] / user_stats['active_days']).astype('float32')

user_stats['mean_rating'] = user_stats['mean_rating'].astype('float32')

# Users with a single rating have undefined std; fill with 0

user_stats['rating_std'] = user_stats['rating_std'].fillna(0).astype('float32')

print('user_liked:', len(user_liked), 'users')

print('user_stats:', user_stats.shape)

user_liked: 200561 users

user_stats: (200880, 7)

# Extract genres and perform movie one hot encoding

genres_exploded = (movies.assign(genre = movies['genres'].str.split('|')).explode('genre').query("genre != '(no genres listed)'"))

ALL_GENRES = sorted(genres_exploded['genre'].unique())

print(f'Genres ({len(ALL_GENRES)}):', ALL_GENRES)

movie_genre = pd.get_dummies(genres_exploded.set_index('movieId')['genre'], prefix='g')

movie_genre = movie_genre.groupby(level=0).max()

genre_cols = movie_genre.columns.tolist()

print('movie_genre shape:', movie_genre.shape)

Genres (19): ['Action', 'Adventure', 'Animation', 'Children', 'Comedy', 'Crime', 'Documentary', 'Drama', 'Fantasy', 'Film-Noir', 'Horror', 'IMAX', 'Musical', 'Mystery', 'Romance', 'Sci-Fi', 'Thriller', 'War', 'Western']

movie_genre shape: (80505, 19)

# Generate Bayesian scores and movie statistics

GLOBAL_MEAN = train_ratings['rating'].mean()

C = 50

movie_stats = (

train_ratings

.groupby('movieId')

.agg(global_count = ('rating','count'),

global_mean = ('rating','mean'),

rating_variance = ('rating','var'))

)

movie_stats['bayesian_score'] = (

(movie_stats['global_count'] * movie_stats['global_mean'] + C * GLOBAL_MEAN)

/ (movie_stats['global_count'] + C)

)

# log1p compresses the very wide count range so the model sees a

# smooth popularity signal rather than a skewed raw count

movie_stats['log_count'] = np.log1p(movie_stats['global_count']).astype('float32')

# Single-rating movies have undefined variance; treat as 0

movie_stats['rating_variance'] = movie_stats['rating_variance'].fillna(0).astype('float32')

# Tag count from tags.csv (loaded in Section 1) — log1p scaled.

# Tags are user-applied descriptors; a high tag count signals that a

# movie has attracted community engagement beyond a simple star rating.

movie_tag_count = tags.groupby('movieId')['tag'].count().rename('tag_count')

movie_stats = movie_stats.join(movie_tag_count, how='left')

movie_stats['tag_count'] = np.log1p(movie_stats['tag_count'].fillna(0)).astype('float32')

del movie_tag_count

movie_feat = movie_stats.join(movie_genre, how='left').fillna(0)

print(f'movie_feat shape: {movie_feat.shape} global mean: {GLOBAL_MEAN:.4f}')

movie_feat[['global_count','log_count','global_mean','bayesian_score','rating_variance','tag_count']].describe()

movie_feat shape: (77369, 25) global mean: 3.5460

| global_count | log_count | global_mean | bayesian_score | rating_variance | tag_count | |

|---|---|---|---|---|---|---|

| count | 77369.0000 | 77369.0000 | 77369.0000 | 77369.0000 | 77369.0000 | 77369.0000 |

| mean | 369.4451 | 2.4734 | 3.0113 | 3.4606 | 0.8726 | 1.3936 |

| std | 2497.7195 | 2.0230 | 0.8208 | 0.2017 | 1.0182 | 1.5596 |

| min | 1.0000 | 0.6931 | 0.5000 | 1.2788 | 0.0000 | 0.0000 |

| 25% | 2.0000 | 1.0986 | 2.5462 | 3.4396 | 0.0000 | 0.0000 |

| 50% | 5.0000 | 1.7918 | 3.0789 | 3.5132 | 0.7455 | 1.0986 |

| 75% | 25.0000 | 3.2581 | 3.5000 | 3.5451 | 1.1864 | 2.1972 |

| max | 98368.0000 | 11.4965 | 5.0000 | 4.4373 | 10.1250 | 8.8096 |

# User genre affinity matrix

liked_fe = (train_ratings[train_ratings['rating'] >= RATING_THRESHOLD][['userId','movieId','rating']])

uid_uniq = liked_fe['userId'].unique()

mid_uniq = liked_fe['movieId'].unique()

u2i = {u: i for i, u in enumerate(uid_uniq)}

m2i = {m: i for i, m in enumerate(mid_uniq)}

rows_fe = liked_fe['userId'].map(u2i).values

cols_fe = liked_fe['movieId'].map(m2i).values

del liked_fe; gc.collect()

# Binary matrix: 1 where user liked a movie, 0 elsewhere.

# Using rating values in the numerator (previous approach) inflates genres

# where the user gave 5-star ratings vs 4-star ratings for the same number

# of films. Count-based gives the clean interpretation: fraction of a

# user's liked movies that belong to each genre.

R_bin = csr_matrix(

(np.ones(len(rows_fe), dtype='float32'), (rows_fe, cols_fe)),

shape=(len(uid_uniq), len(mid_uniq)),

)

del rows_fe, cols_fe; gc.collect()

# Genre matrix aligned to the liked-movie order (float32)

G = movie_genre.reindex(mid_uniq).fillna(0).values.astype('float32')

# Sparse-binary x dense multiply -> (n_users x n_genres) count matrix

uga_counts = R_bin @ G

liked_counts = np.asarray(R_bin.sum(axis=1)).flatten()

del R_bin, G; gc.collect()

user_genre_affinity = pd.DataFrame(

uga_counts / liked_counts[:, None].clip(min=1),

index=uid_uniq,

columns=genre_cols,

dtype='float32',

)

del uga_counts, liked_counts; gc.collect()

print(f'user_genre_affinity: {user_genre_affinity.shape} | '

f'{user_genre_affinity.memory_usage(deep=True).sum()/1e6:.0f} MB')

user_genre_affinity: (200561, 19) | 16 MB

user_genre_affinity.head()

| g_Action | g_Adventure | g_Animation | g_Children | g_Comedy | g_Crime | g_Documentary | g_Drama | g_Fantasy | g_Film-Noir | g_Horror | g_IMAX | g_Musical | g_Mystery | g_Romance | g_Sci-Fi | g_Thriller | g_War | g_Western | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 0.1972 | 0.1549 | 0.0000 | 0.0141 | 0.3662 | 0.1268 | 0.0000 | 0.7183 | 0.0282 | 0.0141 | 0.0141 | 0.0000 | 0.0000 | 0.0845 | 0.2394 | 0.1549 | 0.1268 | 0.1690 | 0.0141 |

| 2 | 0.1622 | 0.1081 | 0.1351 | 0.1622 | 0.4865 | 0.0811 | 0.0000 | 0.4865 | 0.0811 | 0.0000 | 0.0000 | 0.0541 | 0.1351 | 0.0270 | 0.5135 | 0.0000 | 0.2432 | 0.0270 | 0.0000 |

| 3 | 0.3699 | 0.4521 | 0.1233 | 0.1507 | 0.2877 | 0.0959 | 0.0000 | 0.4795 | 0.0822 | 0.0000 | 0.0137 | 0.0411 | 0.0959 | 0.0137 | 0.1918 | 0.2055 | 0.2055 | 0.1233 | 0.0137 |

| 4 | 0.2000 | 0.4000 | 0.0000 | 0.0000 | 0.6000 | 0.0000 | 0.0000 | 0.2000 | 0.2000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.2000 | 0.0000 | 0.2000 | 0.0000 |

| 5 | 0.7500 | 0.3750 | 0.0000 | 0.0000 | 0.1250 | 0.3750 | 0.0000 | 0.2500 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.0000 | 0.1250 | 0.0000 | 1.0000 | 0.1250 | 0.0000 |

# Split data for training / validation

all_users = ground_truth.index.values

gss = GroupShuffleSplit(n_splits = 1, test_size = 0.15, random_state = 100)

train_idx, eval_idx = next(gss.split(all_users, groups=all_users))

train_fold_users = set(all_users[train_idx])

eval_fold_users = set(all_users[eval_idx])

assert not train_fold_users & eval_fold_users, 'Leakage!'

eval_df = (ground_truth[ground_truth.index.isin(eval_fold_users)].reset_index().rename(columns = {'true_movies': 'true_items'}))

warm_users = set(user_liked[user_liked.map(len) >= COLD_START_THRESHOLD].index)

eval_warm = eval_df[eval_df['userId'].isin(warm_users)].reset_index(drop=True)

print(f'Train fold : {len(train_fold_users):,} users')

print(f'Eval fold : {len(eval_fold_users):,} users')

print(f'Warm eval : {len(eval_warm):,} users')

cold_pct = (len(eval_df) - len(eval_warm)) / len(eval_df)

print(f'Cold eval : {len(eval_df)-len(eval_warm):,} ({cold_pct:.1%})')

# Populate _seen_cache here — before any training loop that calls get_seen().

# The empty-dict initialisation in the function cell is a safe fallback;

# this replaces it with the real per-user seen sets so that all retriever

# functions correctly exclude already-rated movies during training.

_seen_cache = train_ratings.groupby('userId')['movieId'].apply(set).to_dict()

print(f'_seen_cache populated: {len(_seen_cache):,} users')

Train fold : 163,311 users

Eval fold : 28,820 users

Warm eval : 28,509 users

Cold eval : 311 (1.1%)

_seen_cache populated: 200,880 users

4. Item-based collaborative filtering

📖 Batched sparse similarity

We build a sparse (movie x user) rating matrix, L2-normalise each movie’s row, then compute cosine similarity in batches of 2,000 movies. This avoids an 87k x 87k dense matrix (~30 GB). The full sparse matrix and its normalised copy are freed immediately after the lookup table is populated.

# Build a movie x user rating matrix

liked_cf = (train_ratings[train_ratings['rating'] >= RATING_THRESHOLD][['userId','movieId','rating']].copy())

movie_ids_cf = liked_cf['movieId'].unique()

user_ids_cf = liked_cf['userId'].unique()

m_idx = {m: i for i, m in enumerate(movie_ids_cf)}

u_idx = {u: i for i, u in enumerate(user_ids_cf)}

idx_m = {i: m for m, i in m_idx.items()}

R = csr_matrix((liked_cf['rating'].values.astype('float32'), (liked_cf['movieId'].map(m_idx).values, liked_cf['userId'].map(u_idx).values)), shape = (len(movie_ids_cf), len(user_ids_cf)),)

del liked_cf; gc.collect()

R_norm = normalize(R, norm='l2', axis=1)

print(f'Rating matrix: {R.shape} nnz={R.nnz:,}')

print(f'Memory: R={R.data.nbytes/1e6:.0f} MB R_norm={R_norm.data.nbytes/1e6:.0f} MB')

Rating matrix: (50644, 200561) nnz=14,311,277

Memory: R=57 MB R_norm=57 MB

# Batched KNN, top-K neighbors

K_NN = 50

BATCH = 2_000

item_sim_lookup = {}

n_movies_cf = R_norm.shape[0]

t0 = time.time()

for start in range(0, n_movies_cf, BATCH):

batch = R_norm[start : start + BATCH]

sims = (batch @ R_norm.T).toarray()

for local_i, sim_row in enumerate(sims):

global_i = start + local_i

sim_row[global_i] = 0.0

top_k = np.argpartition(sim_row, -K_NN)[-K_NN:]

top_k = top_k[np.argsort(sim_row[top_k])[::-1]]

movie_id = idx_m[global_i]

item_sim_lookup[movie_id] = [(idx_m[j], float(sim_row[j])) for j in top_k]

if start % 20000 == 0:

print(f' {start:>6}/{n_movies_cf} {time.time()-t0:.0f}s')

del R, R_norm; gc.collect()

print(f'Item-sim lookup built for {len(item_sim_lookup):,} movies in {time.time()-t0:.0f}s')

0/50644 14s

20000/50644 86s

40000/50644 156s

Item-sim lookup built for 50,644 movies in 190s

5. Temporal sequence modelling

📖 Recency-weighted taste vectors

We pre-compute per-user genre taste vectors using exponential recency weighting (half-life = 365 days). The movie genre matrix is pre-normalised as float32 and stored once; per-user dot products at inference time are fast matrix-vector multiplies.

# Compute recent user tastes

DECAY_HALF_LIFE = 365

DECAY_K = np.log(2) / DECAY_HALF_LIFE

now_ts = int(train_ratings['timestamp'].max())

liked_ts = train_ratings[train_ratings['rating'] >= RATING_THRESHOLD][['userId','movieId','timestamp']]

# Compute decay weight per row as a float32 column

liked_ts['weight'] = np.exp(-DECAY_K * (now_ts - liked_ts['timestamp'].values.astype('float32')) / 86400).astype('float32')

uid_uniq = liked_ts['userId'].unique()

mid_uniq = liked_ts['movieId'].unique()

u2i = {u: i for i, u in enumerate(uid_uniq)}

m2i = {m: i for i, m in enumerate(mid_uniq)}

W = csr_matrix((liked_ts['weight'].values,

(liked_ts['userId'].map(u2i).values,

liked_ts['movieId'].map(m2i).values)),

shape = (len(uid_uniq), len(mid_uniq)),)

del liked_ts; gc.collect()

# Genre matrix aligned to mid_uniq order

G = movie_genre.reindex(mid_uniq).fillna(0).values.astype('float32')

# One sparse matmul

taste_raw = W @ G

row_norms = np.linalg.norm(taste_raw, axis = 1, keepdims = True).clip(min = 1e-9)

taste_norm = (taste_raw / row_norms).astype('float32')

user_taste_vec = {uid_uniq[i]: taste_norm[i] for i in range(len(uid_uniq))}

del W, G, taste_raw, taste_norm, row_norms; gc.collect()

print(f'Taste vectors built for {len(user_taste_vec):,} users')

Taste vectors built for 200,561 users

# Build a normalised movie-genre matrix for S2 and S4 retrievers.

# Expanding to all rated movies (movie_feat.index) rather than just

# POPULARITY_POOL raises the retrieval ceiling: S2/S4 were previously

# blind to ~27K movies that had ratings but no liked (4+) ratings.

movie_genre_aligned = movie_genre.reindex(movie_feat.index).fillna(0)

movie_genre_norm = normalize(movie_genre_aligned.values.astype('float32'), norm='l2', axis=1)

genre_index_order = movie_genre_aligned.index.tolist()

del movie_genre_aligned; gc.collect()

print(f'genre_norm matrix: {movie_genre_norm.shape} | '

f'{movie_genre_norm.nbytes/1e6:.0f} MB')

genre_norm matrix: (77369, 19) | 6 MB

# Assemble the inputs for LightGBM

FEATURE_COLS = ['u_rating_count', 'u_mean_rating', 'u_rating_freq', 'u_rating_std', 'm_global_count', 'm_log_count', 'm_global_mean', 'm_bayesian', 'm_rating_variance', 'm_tag_count', 'genre_affinity',]

TRAIN_USERS_SAMPLE = 8000

NEGATIVES_PER_POS = 4

K_CAND = 200

rng = np.random.default_rng(100)

sample_users = rng.choice(list(train_fold_users & warm_users), size = min(TRAIN_USERS_SAMPLE, len(train_fold_users & warm_users)), replace = False,)

# ── Batch-precompute S2 and S4 genre scores ──────────────────────────────

# s2_personal and s4_temporal each run movie_genre_norm @ vector inside the

# loop. For 8,000 users that is 16,000 individual (77K×19)@(19,) BLAS calls.

# Two batch matmuls replace all of them; BLAS executes a single fused kernel

# that is 10-50x faster than the equivalent number of small calls.

# S2 batch: normalise each user's genre-affinity row, then one matmul

uga_sample = user_genre_affinity.reindex(sample_users).fillna(0).values.astype('float32')

uga_norms = np.linalg.norm(uga_sample, axis=1, keepdims=True).clip(min=1e-9)

s2_scores = movie_genre_norm @ (uga_sample / uga_norms).T # (n_movies, n_users)

del uga_sample, uga_norms; gc.collect()

# S4 batch: taste vectors are already unit-normalised

taste_sample = np.vstack([user_taste_vec.get(uid, np.zeros(len(genre_cols), dtype='float32')) for uid in sample_users]).astype('float32')

s4_scores = movie_genre_norm @ taste_sample.T # (n_movies, n_users)

del taste_sample; gc.collect()

print(f's2_scores: {s2_scores.shape} s4_scores: {s4_scores.shape} '

f'({(s2_scores.nbytes + s4_scores.nbytes)/1e6:.0f} MB combined)')

# ── Build (userId, movieId, label) pairs ─────────────────────────────────

# Each iteration slices one precomputed column (O(1)) instead of running a

# fresh matmul. np.argpartition finds the top-K in O(n) vs O(n log n) for

# a full argsort; only the K retained entries are then sorted.

pair_rows = []

for i, uid in enumerate(sample_users):

liked_list = user_liked.get(uid, [])

if not liked_list:

continue

seen = get_seen(uid)

# S2 candidates — slice precomputed column, partition, filter seen

s2_col = s2_scores[:, i]

s2_top = np.argpartition(s2_col, -K_CAND)[-K_CAND:]

s2_top = s2_top[np.argsort(s2_col[s2_top])[::-1]]

s2_cands = [genre_index_order[j] for j in s2_top if genre_index_order[j] not in seen]

# S3 candidates — item-CF uses irregular dict structure; stays sequential

s3_cands = s3_item_cf(uid, K=K_CAND)

# S4 candidates — slice precomputed column, partition, filter seen

s4_col = s4_scores[:, i]

s4_top = np.argpartition(s4_col, -K_CAND)[-K_CAND:]

s4_top = s4_top[np.argsort(s4_col[s4_top])[::-1]]

s4_cands = [genre_index_order[j] for j in s4_top if genre_index_order[j] not in seen]

liked_set = set(liked_list)

neg_pool = list(dict.fromkeys(s2_cands + s3_cands + s4_cands))

neg_pool = [m for m in neg_pool if m not in liked_set]

n_neg = min(len(liked_list) * NEGATIVES_PER_POS, len(neg_pool))

neg_movies = rng.choice(neg_pool, size=n_neg, replace=False).tolist() if n_neg > 0 else []

for m in liked_list:

pair_rows.append((uid, m, 1))

for m in neg_movies:

pair_rows.append((uid, m, 0))

del s2_scores, s4_scores; gc.collect()

pairs = pd.DataFrame(pair_rows, columns = ['userId', 'movieId', 'label'])

del pair_rows; gc.collect()

s2_scores: (77369, 8000) s4_scores: (77369, 8000) (4952 MB combined)

0

# Join user features

u_feat = user_stats[['rating_count','mean_rating','rating_freq','rating_std']].rename(columns = {'rating_count': 'u_rating_count',

'mean_rating' : 'u_mean_rating',

'rating_freq' : 'u_rating_freq',

'rating_std' : 'u_rating_std',})

pairs = pairs.merge(u_feat, left_on = 'userId', right_index = True, how = 'left')

pairs.head()

| userId | movieId | label | u_rating_count | u_mean_rating | u_rating_freq | u_rating_std | |

|---|---|---|---|---|---|---|---|

| 0 | 196703 | 10 | 1 | 34 | 3.6471 | 34.0000 | 0.9173 |

| 1 | 196703 | 16 | 1 | 34 | 3.6471 | 34.0000 | 0.9173 |

| 2 | 196703 | 22 | 1 | 34 | 3.6471 | 34.0000 | 0.9173 |

| 3 | 196703 | 47 | 1 | 34 | 3.6471 | 34.0000 | 0.9173 |

| 4 | 196703 | 58 | 1 | 34 | 3.6471 | 34.0000 | 0.9173 |

# Join movie features

m_feat = movie_feat[['global_count','log_count','global_mean','bayesian_score', 'rating_variance','tag_count']].rename(columns={'global_count' : 'm_global_count',

'log_count' : 'm_log_count',

'global_mean' : 'm_global_mean',

'bayesian_score' : 'm_bayesian',

'rating_variance': 'm_rating_variance',

'tag_count' : 'm_tag_count',})

pairs = pairs.merge(m_feat, left_on = 'movieId', right_index = True, how='left')

# Compute genre affinity via stacked dot products. L2-normalise the movie genre vectors so the result is cosine similarity — consistent with the per-movie normalisation inside build_lgb_features.

taste_matrix = np.vstack([user_taste_vec.get(uid, np.zeros(len(genre_cols), dtype='float32')) for uid in pairs['userId']]).astype('float32')

genre_matrix_raw = movie_genre.reindex(pairs['movieId']).fillna(0).values.astype('float32')

genre_norms = np.linalg.norm(genre_matrix_raw, axis=1, keepdims=True).clip(min=1e-9)

genre_matrix = genre_matrix_raw / genre_norms

pairs['genre_affinity'] = (taste_matrix * genre_matrix).sum(axis=1).astype('float32')

del taste_matrix, genre_matrix_raw, genre_norms, genre_matrix; gc.collect()

# Fill missing with global vals

pairs['u_rating_count'] = pairs['u_rating_count'].fillna(0).astype('float32')

pairs['u_mean_rating'] = pairs['u_mean_rating'].fillna(GLOBAL_MEAN).astype('float32')

pairs['u_rating_freq'] = pairs['u_rating_freq'].fillna(0).astype('float32')

pairs['u_rating_std'] = pairs['u_rating_std'].fillna(0).astype('float32')

pairs['m_global_count'] = pairs['m_global_count'].fillna(0).astype('float32')

pairs['m_log_count'] = pairs['m_log_count'].fillna(0).astype('float32')

pairs['m_global_mean'] = pairs['m_global_mean'].fillna(GLOBAL_MEAN).astype('float32')

pairs['m_bayesian'] = pairs['m_bayesian'].fillna(GLOBAL_MEAN).astype('float32')

pairs['m_rating_variance'] = pairs['m_rating_variance'].fillna(0).astype('float32')

pairs['m_tag_count'] = pairs['m_tag_count'].fillna(0).astype('float32')

lgb_train_df = pairs

del pairs; gc.collect()

print(f'LightGBM set: {lgb_train_df.shape} pos rate: {lgb_train_df["label"].mean():.2%}')

LightGBM set: (2275394, 14) pos rate: 26.12%

lgb_train_df.head()

| userId | movieId | label | u_rating_count | u_mean_rating | u_rating_freq | u_rating_std | m_global_count | m_log_count | m_global_mean | m_bayesian | m_rating_variance | m_tag_count | genre_affinity | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 196703 | 10 | 1 | 34.0000 | 3.6471 | 34.0000 | 0.9173 | 30624.0000 | 10.3296 | 3.4252 | 3.4254 | 0.7641 | 6.0753 | 0.4776 |

| 1 | 196703 | 16 | 1 | 34.0000 | 3.6471 | 34.0000 | 0.9173 | 20209.0000 | 9.9139 | 3.8338 | 3.8331 | 0.7297 | 6.7558 | 0.6299 |

| 2 | 196703 | 22 | 1 | 34.0000 | 3.6471 | 34.0000 | 0.9173 | 9327.0000 | 9.1408 | 3.3093 | 3.3106 | 0.8477 | 4.6444 | 0.7114 |

| 3 | 196703 | 47 | 1 | 34.0000 | 3.6471 | 34.0000 | 0.9173 | 58465.0000 | 10.9762 | 4.0874 | 4.0869 | 0.7139 | 7.8652 | 0.4049 |

| 4 | 196703 | 58 | 1 | 34.0000 | 3.6471 | 34.0000 | 0.9173 | 10647.0000 | 9.2731 | 3.9646 | 3.9627 | 0.9441 | 3.6376 | 0.7347 |

6. Evaluation harness & S1-S5 strategies

Five metrics at K=5 and K=10. The composite score is their unweighted mean. All strategies exclude movies already seen in train_ratings.

# Train base LightGBM model

xtrain = lgb_train_df[FEATURE_COLS].values

ytrain = lgb_train_df['label'].values

del lgb_train_df; gc.collect()

# Hold out 15% of rows as a proper validation set for early stopping

x_tr, x_val, y_tr, y_val = train_test_split(xtrain, ytrain, test_size = 0.15, random_state = 100, stratify = ytrain)

lgb_params = {'objective' : 'binary',

'metric' : 'auc',

'learning_rate' : 0.05,

# 'num_leaves' : 63,

# 'min_child_samples': 20,

'feature_fraction' : 0.8,

'bagging_fraction' : 0.8,

'bagging_freq' : 5,

'verbose' : -1,

'n_jobs' : -1,}

lgb_model = lgb.train(lgb_params,

lgb.Dataset(x_tr,

label = y_tr,

feature_name = FEATURE_COLS),

num_boost_round = 500,

valid_sets = [lgb.Dataset(x_val, label = y_val)],

callbacks = [lgb.early_stopping(20, verbose = False), lgb.log_evaluation(50)],)

del xtrain, ytrain, x_tr, x_val, y_tr, y_val; gc.collect()

print(f'LightGBM trained best_iter = {lgb_model.best_iteration}')

[50] valid_0's auc: 0.85308

[100] valid_0's auc: 0.857502

[150] valid_0's auc: 0.860966

[200] valid_0's auc: 0.864246

[250] valid_0's auc: 0.866321

[300] valid_0's auc: 0.868303

[350] valid_0's auc: 0.869454

[400] valid_0's auc: 0.871189

[450] valid_0's auc: 0.872391

[500] valid_0's auc: 0.87376

LightGBM trained best_iter = 500

7. S6 architecture & cold-start gate

# Apply function to check for cold starts

cold_n = sum(is_cold(uid) for uid in eval_fold_users)

print(f'Cold in eval fold: {cold_n:,} ({100*cold_n/len(eval_fold_users):.1f}%)')

Cold in eval fold: 311 (1.1%)

Stage 1 — Expanded candidate pool

# Get stage 1 candidates and diagnostics

N_STAGE1 = 200

DIAG_N = 500

diag_users = eval_warm.sample(n = DIAG_N, random_state = 100)

recalls = []

for _, row in diag_users.iterrows():

pool = set(stage1_candidates(row['userId']))

true = row['true_items']

recalls.append(len(pool & true) / len(true) if true else 0.0)

print(f'Stage-1 Recall@{N_STAGE1} (n={DIAG_N}): {np.mean(recalls):.4f}')

Stage-1 Recall@200 (n=500): 0.5524

Stage 2 — Meta-ranker training

# Init params

STAGE2_FEATURE_COLS = FEATURE_COLS + ['in_s2','in_s3','in_s4', 'rank_s2','rank_s3','rank_s4', 'n_retrievers', 's5_score',] # base LightGBM predicted probability — strongest available signal

META_TRAIN_USERS = 8000 # doubled from 4000

META_NEG_RATIO = 5

meta_users = rng.choice(list(train_fold_users & warm_users), size = min(META_TRAIN_USERS, len(train_fold_users & warm_users)), replace = False,)

# ── Batch-precompute S2 and S4 genre scores for meta training ───────────

# Same optimisation as the base LGB pairs cell: replace per-user matmuls

# with two batch matmuls before the loop.

uga_meta = user_genre_affinity.reindex(meta_users).fillna(0).values.astype('float32')

uga_norms = np.linalg.norm(uga_meta, axis=1, keepdims=True).clip(min=1e-9)

s2_scores_meta = movie_genre_norm @ (uga_meta / uga_norms).T # (n_movies, n_meta_users)

del uga_meta, uga_norms; gc.collect()

taste_meta = np.vstack([user_taste_vec.get(uid, np.zeros(len(genre_cols), dtype='float32')) for uid in meta_users]).astype('float32')

s4_scores_meta = movie_genre_norm @ taste_meta.T # (n_movies, n_meta_users)

del taste_meta; gc.collect()

print(f'Meta score matrices: {s2_scores_meta.shape} '

f'({(s2_scores_meta.nbytes + s4_scores_meta.nbytes)/1e6:.0f} MB combined)')

# ── Collect (uid, movie, label, ranks) ───────────────────────────────────

pair_rows = []

t0 = time.time()

for i, uid in enumerate(meta_users):

# S2: slice precomputed column, partition top-N_STAGE1, filter seen

seen = get_seen(uid)

s2_col = s2_scores_meta[:, i]

s2_top = np.argpartition(s2_col, -N_STAGE1)[-N_STAGE1:]

s2_top = s2_top[np.argsort(s2_col[s2_top])[::-1]]

s2l = [genre_index_order[j] for j in s2_top

if genre_index_order[j] not in seen][:N_STAGE1]

# S3: item-CF stays sequential

s3l = s3_item_cf(uid, K=N_STAGE1)

# S4: slice precomputed column, partition top-N_STAGE1, filter seen

s4_col = s4_scores_meta[:, i]

s4_top = np.argpartition(s4_col, -N_STAGE1)[-N_STAGE1:]

s4_top = s4_top[np.argsort(s4_col[s4_top])[::-1]]

s4l = [genre_index_order[j] for j in s4_top if genre_index_order[j] not in seen][:N_STAGE1]

cands = list(dict.fromkeys(s2l + s3l + s4l))

# Positives: candidates that appear in the user's held-out ground truth.

# Using ground_truth (test-set labels) rather than user_liked (training-set

# liked items) ensures positives are present in the retrieval pool and

# therefore carry meaningful retriever rank features (in_s2/s3/s4, rank_s*).

true_set = ground_truth[uid] if uid in ground_truth.index else set()

pos_c = [m for m in cands if m in true_set]

if not pos_c:

continue

# Negatives: candidates not in the user's true set

neg_c = [m for m in cands if m not in true_set]

n_neg = min(len(pos_c) * META_NEG_RATIO, len(neg_c))

neg_s = rng.choice(neg_c, size=n_neg, replace=False).tolist() if n_neg > 0 else []

all_c = pos_c + neg_s

if not all_c:

continue

s2r = {m: r for r, m in enumerate(s2l)}

s3r = {m: r for r, m in enumerate(s3l)}

s4r = {m: r for r, m in enumerate(s4l)}

for m in all_c:

pair_rows.append((uid,

m,

int(m in true_set),

int(m in s2r),

int(m in s3r),

int(m in s4r),

s2r.get(m, N_STAGE1),

s3r.get(m, N_STAGE1),

s4r.get(m, N_STAGE1),))

if (i + 1) % 500 == 0:

print(f' {i+1}/{len(meta_users)} {time.time()-t0:.0f}s')

del s2_scores_meta, s4_scores_meta; gc.collect()

print(f'Retrieval done: {len(pair_rows):,} pairs in {time.time()-t0:.0f}s')

Meta score matrices: (77369, 8000) (4952 MB combined)

500/8000 5s

1000/8000 10s

1500/8000 14s

2000/8000 19s

2500/8000 24s

3000/8000 28s

3500/8000 33s

4000/8000 38s

4500/8000 43s

5000/8000 47s

5500/8000 53s

6000/8000 57s

6500/8000 62s

7000/8000 67s

7500/8000 72s

8000/8000 77s

Retrieval done: 188,689 pairs in 80s

# Build the data from the results

pairs = pd.DataFrame(pair_rows, columns = ['userId','movieId','label', 'in_s2','in_s3','in_s4', 'rank_s2','rank_s3','rank_s4',])

pairs['n_retrievers'] = (pairs['in_s2'] + pairs['in_s3'] + pairs['in_s4']).astype('int8')

pairs[['in_s2','in_s3','in_s4']] = pairs[['in_s2','in_s3','in_s4']].astype('int8')

pairs[['rank_s2','rank_s3','rank_s4']] = pairs[['rank_s2','rank_s3','rank_s4']].astype('int16')

# Join user features

u_feat = user_stats[['rating_count','mean_rating','rating_freq','rating_std']].rename(columns = {'rating_count': 'u_rating_count',

'mean_rating' : 'u_mean_rating',

'rating_freq' : 'u_rating_freq',

'rating_std' : 'u_rating_std',})

pairs = pairs.merge(u_feat, left_on = 'userId', right_index = True, how = 'left')

# Join movie features

m_feat = movie_feat[['global_count','log_count','global_mean','bayesian_score', 'rating_variance','tag_count']].rename(columns = {'global_count' : 'm_global_count',

'log_count' : 'm_log_count',

'global_mean' : 'm_global_mean',

'bayesian_score' : 'm_bayesian',

'rating_variance': 'm_rating_variance',

'tag_count' : 'm_tag_count',})

pairs = pairs.merge(m_feat, left_on = 'movieId', right_index = True, how = 'left')

# Join the vectorized genre affinity

taste_matrix = np.vstack([user_taste_vec.get(uid, np.zeros(len(genre_cols), dtype = 'float32')) for uid in pairs['userId']]).astype('float32')

genre_matrix_raw = movie_genre.reindex(pairs['movieId']).fillna(0).values.astype('float32')

genre_norms = np.linalg.norm(genre_matrix_raw, axis=1, keepdims=True).clip(min=1e-9)

genre_matrix = genre_matrix_raw / genre_norms

pairs['genre_affinity'] = (taste_matrix * genre_matrix).sum(axis=1).astype('float32')

del taste_matrix, genre_matrix_raw, genre_norms, genre_matrix; gc.collect()

0

pairs.head()

| userId | movieId | label | in_s2 | in_s3 | in_s4 | rank_s2 | rank_s3 | rank_s4 | n_retrievers | u_rating_count | u_mean_rating | u_rating_freq | u_rating_std | m_global_count | m_log_count | m_global_mean | m_bayesian | m_rating_variance | m_tag_count | genre_affinity | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 182041 | 1196 | 1 | 0 | 1 | 0 | 200 | 0 | 200 | 1 | 19 | 3.5789 | 19.0000 | 0.7685 | 68455 | 11.1339 | 4.1351 | 4.1347 | 0.9112 | 7.5348 | 0.8041 |

| 1 | 182041 | 95167 | 1 | 0 | 1 | 0 | 200 | 104 | 200 | 1 | 19 | 3.5789 | 19.0000 | 0.7685 | 8632 | 9.0633 | 3.4822 | 3.4826 | 0.9153 | 5.9506 | 0.7783 |

| 2 | 182041 | 86332 | 0 | 0 | 1 | 0 | 200 | 167 | 200 | 1 | 19 | 3.5789 | 19.0000 | 0.7685 | 12975 | 9.4709 | 3.3188 | 3.3197 | 1.0297 | 7.1301 | 0.8060 |

| 3 | 182041 | 590 | 0 | 0 | 1 | 0 | 200 | 70 | 200 | 1 | 19 | 3.5789 | 19.0000 | 0.7685 | 44195 | 10.6964 | 3.7277 | 3.7275 | 0.9189 | 5.9584 | 0.5203 |

| 4 | 182041 | 37830 | 0 | 1 | 1 | 1 | 14 | 184 | 6 | 3 | 19 | 3.5789 | 19.0000 | 0.7685 | 1799 | 7.4955 | 3.3894 | 3.3936 | 1.1338 | 4.7958 | 0.8427 |

pairs.isnull().sum()

| 0 | |

|---|---|

| userId | 0 |

| movieId | 0 |

| label | 0 |

| in_s2 | 0 |

| in_s3 | 0 |

| in_s4 | 0 |

| rank_s2 | 0 |

| rank_s3 | 0 |

| rank_s4 | 0 |

| n_retrievers | 0 |

| u_rating_count | 0 |

| u_mean_rating | 0 |

| u_rating_freq | 0 |

| u_rating_std | 0 |

| m_global_count | 0 |

| m_log_count | 0 |

| m_global_mean | 0 |

| m_bayesian | 0 |

| m_rating_variance | 0 |

| m_tag_count | 0 |

| genre_affinity | 0 |

# Fill missing values

#for col, default in [('u_rating_count', 0), ('u_mean_rating', GLOBAL_MEAN),

# ('u_rating_freq', 0), ('u_rating_std', 0),

# ('m_global_count', 0), ('m_log_count', 0),

# ('m_global_mean', GLOBAL_MEAN), ('m_bayesian', GLOBAL_MEAN),

# ('m_rating_variance', 0), ('m_tag_count', 0)]:#

# pairs[col] = pairs[col].fillna(default).astype('float32')

# Compute S5 predicted probability for each candidate.

# This is the single strongest per-(user,movie) relevance signal;

# the meta-ranker can learn to weight it, correct it with retriever

# rank signals, or override it for users where CF is more reliable.

pairs['s5_score'] = lgb_model.predict(pairs[FEATURE_COLS].values).astype('float32')

meta_train_df = pairs[STAGE2_FEATURE_COLS + ['label']].reset_index(drop = True)

print(f'Meta set: {meta_train_df.shape} pos rate: {meta_train_df["label"].mean():.2%}')

Meta set: (188689, 20) pos rate: 16.68%

meta_train_df.head()

| u_rating_count | u_mean_rating | u_rating_freq | u_rating_std | m_global_count | m_log_count | m_global_mean | m_bayesian | m_rating_variance | m_tag_count | genre_affinity | in_s2 | in_s3 | in_s4 | rank_s2 | rank_s3 | rank_s4 | n_retrievers | s5_score | label | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 19 | 3.5789 | 19.0000 | 0.7685 | 68455 | 11.1339 | 4.1351 | 4.1347 | 0.9112 | 7.5348 | 0.8041 | 0 | 1 | 0 | 200 | 0 | 200 | 1 | 0.8162 | 1 |

| 1 | 19 | 3.5789 | 19.0000 | 0.7685 | 8632 | 9.0633 | 3.4822 | 3.4826 | 0.9153 | 5.9506 | 0.7783 | 0 | 1 | 0 | 200 | 104 | 200 | 1 | 0.2577 | 1 |

| 2 | 19 | 3.5789 | 19.0000 | 0.7685 | 12975 | 9.4709 | 3.3188 | 3.3197 | 1.0297 | 7.1301 | 0.8060 | 0 | 1 | 0 | 200 | 167 | 200 | 1 | 0.1825 | 0 |

| 3 | 19 | 3.5789 | 19.0000 | 0.7685 | 44195 | 10.6964 | 3.7277 | 3.7275 | 0.9189 | 5.9584 | 0.5203 | 0 | 1 | 0 | 200 | 70 | 200 | 1 | 0.5174 | 0 |

| 4 | 19 | 3.5789 | 19.0000 | 0.7685 | 1799 | 7.4955 | 3.3894 | 3.3936 | 1.1338 | 4.7958 | 0.8427 | 1 | 1 | 1 | 14 | 184 | 6 | 3 | 0.0626 | 0 |

# Train the meta-ranker models with a proper held-out validation split

xmeta = meta_train_df[STAGE2_FEATURE_COLS].values

ymeta = meta_train_df['label'].values

# Hold out 15% for validation to enable meaningful early stopping

xm_tr, xm_val, ym_tr, ym_val = train_test_split(

xmeta, ymeta, test_size=0.15, random_state=42, stratify=ymeta)

meta_lgb_params = {'objective':'binary',

'metric':'auc',

# 'num_leaves':127,

'learning_rate':0.03,

'feature_fraction':0.8,

'bagging_fraction':0.8,

'bagging_freq':5,

'verbose':-1,

'n_jobs':-1,}

meta_lgb = lgb.train(meta_lgb_params,

lgb.Dataset(xm_tr,

label = ym_tr,

feature_name = STAGE2_FEATURE_COLS),

num_boost_round = 500,

valid_sets = [lgb.Dataset(xm_val, label = ym_val)],

callbacks = [lgb.early_stopping(30,verbose = False), lgb.log_evaluation(100)],)

print(f'Meta-LightGBM trained best_iter={meta_lgb.best_iteration}')

meta_xgb = XGBClassifier(learning_rate = 0.03,

#max_depth = 6,

#n_estimators = 400,

subsample = 0.8,

colsample_bytree = 0.8,

eval_metric = 'auc',

early_stopping_rounds = 30,

verbosity = 0,)

meta_xgb.fit(xm_tr, ym_tr, eval_set = [(xm_val, ym_val)], verbose=False)

print(f'Meta-XGBoost trained best_iter={meta_xgb.best_iteration}')

[100] valid_0's auc: 0.856981

[200] valid_0's auc: 0.859101

[300] valid_0's auc: 0.860041

[400] valid_0's auc: 0.860901

Meta-LightGBM trained best_iter=441

Meta-XGBoost trained best_iter=99

del xmeta, ymeta, xm_tr, xm_val, ym_tr, ym_val, meta_train_df, pairs, pair_rows; gc.collect()

28

10. Full benchmark: S1 → S7

# Verify _seen_cache is populated (built after the user split; confirmed here)

print(f'_seen_cache: {len(_seen_cache):,} users | '

f'{sum(len(v) for v in _seen_cache.values()):,} total seen entries')

_seen_cache: 200,880 users | 28,583,599 total seen entries

%%time

# ── Batch-precompute S2 and S4 score vectors for all eval_warm users ─────

# evaluate_strategy calls s2_personal and s4_temporal per user, each firing

# a (77K×19)@(19,) matmul. For 2,000 users × 7 strategies × 2 Ks that is

# 56,000 individual BLAS calls — and S5/S6/S7 call S2+S4 internally, tripling

# the count further. Two batch matmuls replace all of them.

eval_user_ids = eval_warm['userId'].values

uga_eval = user_genre_affinity.reindex(eval_user_ids).fillna(0).values.astype('float32')

uga_norms = np.linalg.norm(uga_eval, axis = 1, keepdims = True).clip(min = 1e-9)

s2_matrix = movie_genre_norm @ (uga_eval / uga_norms).T

del uga_eval, uga_norms

taste_eval = np.vstack([user_taste_vec.get(uid, np.zeros(len(genre_cols), dtype='float32')) for uid in eval_user_ids]).astype('float32')

s4_matrix = movie_genre_norm @ taste_eval.T

del taste_eval

# Populate the score caches so s2_personal / s4_temporal skip the matmul

_s2_score_cache = {uid: s2_matrix[:, i] for i, uid in enumerate(eval_user_ids)}

_s4_score_cache = {uid: s4_matrix[:, i] for i, uid in enumerate(eval_user_ids)}

del s2_matrix, s4_matrix; gc.collect()

print(f'Score caches populated for {len(_s2_score_cache):,} eval users')

# Perform the benchmark analysis

print('Initiating Benchmark Analysis..')

STRATEGIES = {'S1: Popularity' : s1_popularity,

'S2: Personal' : s2_personal,

'S3: Item-CF' : s3_item_cf,

'S4: Temporal' : s4_temporal,

'S5: LightGBM' : s5_lgbm,

'S6: XGBoost' : s6_xgboost,

'S7: Master Ensemble' : s7_ensemble,}

# All five metrics are reported; F1 is retained for display but excluded from

# the composite score. F1 = HM(Precision, Recall) — including it alongside

# Precision and Recall gives the P/R axis 3x the weight of NDCG and Hit Rate

# in a simple average, making the composite uninterpretable as a balanced

# summary. The composite uses only the four non-redundant metrics.