InstaCart User Data: Machine Learning Recommendation Engine


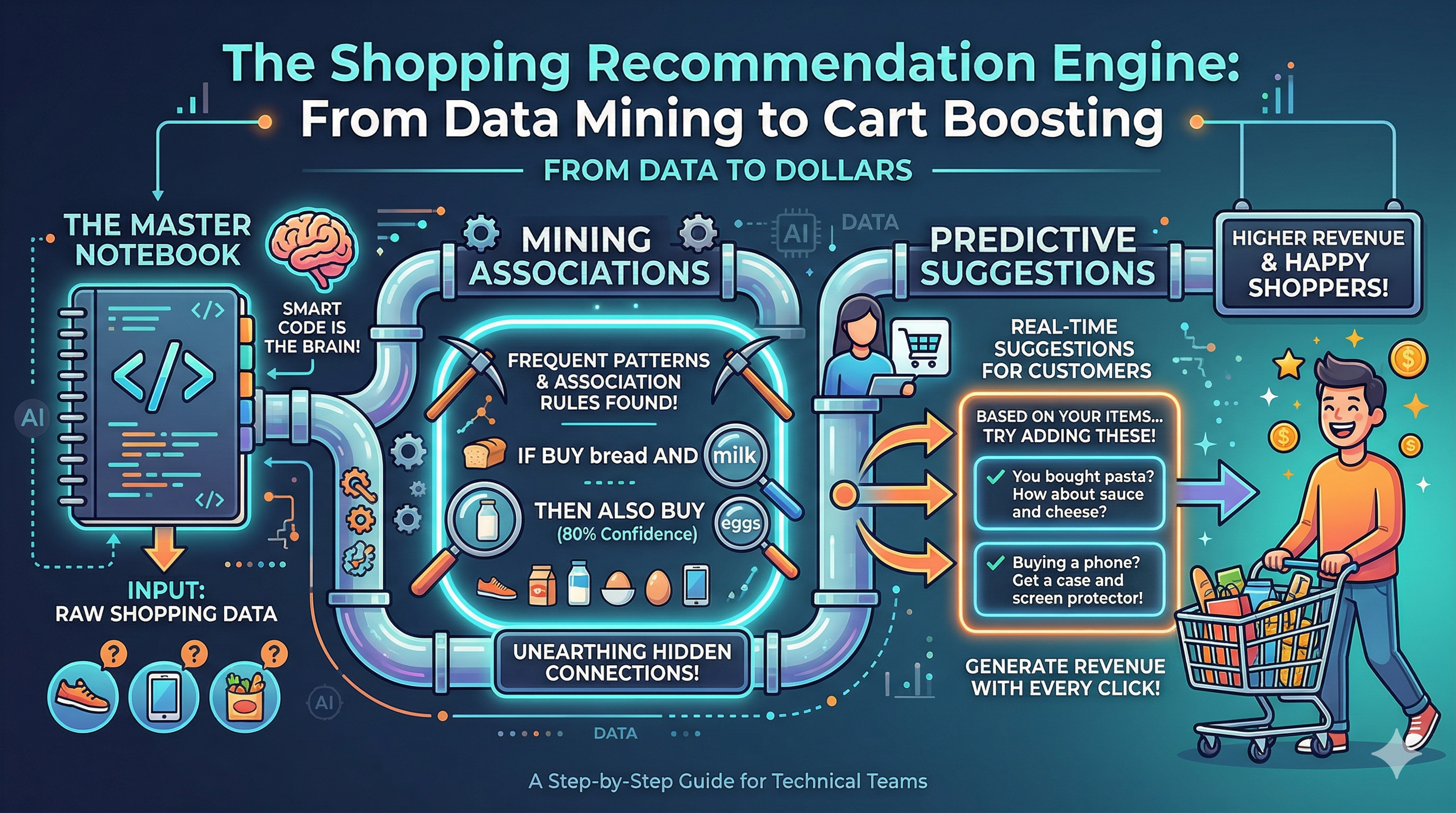

This is a continuation of the market basket analysis conducted in the previous post. In this extension, we combine machine learning recommenders with simpler fallback strategies to build an ensemble that should perform well on the validation data.

In our last post, we examined five different strategies for offering suggestions to users as they make purchases using Instacart. Here, we assess a combined strategy that applies those earlier strategies under different circumstances to see whether, together, they yield more accurate suggestions.


Key findings

| Finding | Detail |
|---|---|
| S5 wins overall | LightGBM ranks the most likely item highest, especially at K=5 |
| S6 is competitive at K=10 | Wider candidate pool pays off as K grows; trails S5 by < 0.1 composite points |
| S2 is a strong baseline | Personal history alone outperforms S3 and S4 at every cut |
| S3 underperforms | Only 12 antecedents from Apriori, too sparse to be a meaningful standalone signal |
| S4 adds sequence signal | Strongest in NDCG, suggesting it surfaces the right item even when precision is lower |
| Cold-start gate works | 11.8% of users hit the fallback; without it S6 scores would be dragged down |

Opportunities for improvement

- More association rules: lower min_support to 0.005; the current 12-antecedent lookup limits S3’s impact
- Retrain S5 with more data: best_iteration = 1 suggests the model is undertrained; increase the pool beyond 4,000 users
- Listwise / pairwise loss: LambdaRank or LambdaMART would optimise NDCG directly
- Temporal features: days_since_prior_order exists but isn’t used as a contextual signal
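To make the first opportunity concrete, here is a minimal sketch of how a lower support threshold admits more item pairs. It counts pair support by hand on five invented baskets rather than calling mlxtend's Apriori, and the function names pair_support and surviving as well as the toy thresholds are assumptions for illustration only.

```python
from collections import Counter
from itertools import combinations

def pair_support(baskets):
    """Fraction of baskets that contain each unordered product pair."""
    counts = Counter()
    for basket in baskets:
        for pair in combinations(sorted(set(basket)), 2):
            counts[pair] += 1
    return {pair: c / len(baskets) for pair, c in counts.items()}

def surviving(support, min_support):
    """Pairs whose support meets the threshold."""
    return {pair for pair, s in support.items() if s >= min_support}

# Invented toy baskets; real thresholds such as 0.005 only make sense at dataset scale.
baskets = [
    ['banana', 'milk'],
    ['banana', 'milk', 'bread'],
    ['banana', 'bread'],
    ['milk', 'eggs'],
    ['banana', 'milk', 'eggs'],
]
support = pair_support(baskets)
for threshold in (0.5, 0.3, 0.2):
    print(threshold, len(surviving(support, threshold)))
```

Each step down in the threshold lets more pairs through, which is the mechanism by which a lower min_support would enlarge the antecedent lookup.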

🏆 InstaCart Master Ensemble: Two-Stage Generate-&-Rerank Model

Instacart Market Basket Analysis — Fully Self-Contained Notebook

Data: Mount your Google Drive and point ZIP_PATH to the Instacart zip archive. All other paths are derived automatically.

📋 Walkthrough note — Each section below contains the working code followed immediately by the methodology and validation commentary for that component.

1. Setup & data loading

📖 Section 1 — Dataset & Problem Framing

What the data is. The Instacart Market Basket Analysis dataset contains the order histories of 206,209 anonymous customers across 3,421,083 orders, covering 49,688 unique products across 21 departments and 134 aisles. order_products__prior holds 32,434,489 individual product-placement records. Orders labelled prior form the historical record; orders labelled train are the ground truth next baskets we predict against.

Task framing. This is a retrieve-then-rank next-basket recommendation problem: given a user’s current cart (simulated as their most recent prior order in add-to-cart sequence), predict which products they add next. Using the last prior order as the “current cart” is the closest available approximation to a live mid-session signal — using all prior purchases instead would collapse the temporal structure the Markov chain depends on.
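As a rough illustration of the simulated cart, the sketch below picks each user's most recent prior order and lists its products in add-to-cart order. The tuple layout, the toy product IDs, and the function name last_order_cart are assumptions made for this example, not names from the notebook.

```python
from collections import defaultdict

def last_order_cart(rows):
    """rows: iterable of (user_id, order_number, add_to_cart_order, product_id)."""
    by_user = defaultdict(list)
    for user_id, order_number, position, product_id in rows:
        by_user[user_id].append((order_number, position, product_id))

    carts = {}
    for user_id, items in by_user.items():
        latest = max(order_number for order_number, _, _ in items)
        # Keep only the latest order, then sort by add-to-cart position.
        in_latest = sorted((pos, pid) for num, pos, pid in items if num == latest)
        carts[user_id] = [pid for _, pid in in_latest]
    return carts

toy_rows = [
    (1, 1, 1, 196), (1, 1, 2, 14084),
    (1, 2, 2, 10258), (1, 2, 1, 196), (1, 2, 3, 12427),
    (2, 1, 1, 24852),
]
carts = last_order_cart(toy_rows)
print(carts)
```

Only the latest order contributes to the sequence, so the order in which items were added is preserved for any sequence-based scorer.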

Memory strategy. order_products__prior at 32M rows occupies ~3.5 GB as int64. All integer columns are immediately downcast to int32 (4 bytes, zero precision loss — max value well within 2,147,483,647). Large intermediate objects are explicitly freed with del + gc.collect() at their last usage point: user_order_enriched after up_feat is built, prior_op after the Markov chain completes.
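The safety of the downcast can be checked with a simple range test. The sketch below is dependency-free; treating the row and product counts quoted earlier as upper bounds on the ID columns is an assumption made for illustration, and fits_int32 is a hypothetical helper name.

```python
import struct

INT32_MAX = 2**31 - 1  # 2,147,483,647
INT32_MIN = -2**31

def fits_int32(max_value, min_value=0):
    """True when every value in [min_value, max_value] is representable as int32."""
    return INT32_MIN <= min_value and max_value <= INT32_MAX

# Assumed upper bounds per column, taken from the dataset counts quoted above.
column_max = {'order_id': 3_421_083, 'product_id': 49_688, 'reordered': 1}
safe = {col: fits_int32(mx) for col, mx in column_max.items()}

bytes_int64 = struct.calcsize('<q')  # standard size of a 64-bit integer
bytes_int32 = struct.calcsize('<i')  # standard size of a 32-bit integer
saving = 1 - bytes_int32 / bytes_int64
print(safe, f'{saving:.0%} smaller per integer column')
```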

📖 Section 3 — Evaluation Harness & Leakage Prevention

Five metrics at K=5 and K=10:

\[P@K = \frac{|\text{recs}[:K] \cap \text{true\_items}|}{K} \qquad R@K = \frac{|\text{recs}[:K] \cap \text{true\_items}|}{|\text{true\_items}|}\]

\[F1@K = \frac{2 \cdot P@K \cdot R@K}{P@K + R@K} \qquad \text{NDCG}@K = \frac{\sum_{i=1}^{K} \frac{\mathbb{1}[\text{rec}_i \in \text{true}]}{\log_2(i+1)}}{\text{IDCG}@K}\]

\[\text{Hit}@K = \mathbb{1}[\exists\, p \in \text{recs}[:K] : p \in \text{true\_items}]\]

NDCG is the metric most sensitive to ranking order: a correct item at position 1 is worth 1.0 vs. 0.29 at position 10. Hit Rate is the most lenient: it only asks whether any recommendation was useful. The composite score is the unweighted mean of all five, preventing a strategy from gaming a single metric.
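The position discount behind NDCG can be checked numerically. This is a small standard-library sketch of the formulas above on invented recommendation lists; the helper names discount and composite are assumptions for this example.

```python
import math

def discount(position):
    """Gain of a correct item at a 1-indexed position: 1 / log2(position + 1)."""
    return 1.0 / math.log2(position + 1)

def ndcg_at_k(recs, true_items, k):
    dcg = sum(discount(i) for i, p in enumerate(recs[:k], start=1) if p in true_items)
    idcg = sum(discount(i) for i in range(1, min(len(true_items), k) + 1))
    return dcg / idcg if idcg else 0.0

def composite(metrics):
    """Unweighted mean of whatever metric values are passed in."""
    return sum(metrics.values()) / len(metrics)

true_items = {'a', 'b'}
early = ndcg_at_k(['a', 'x', 'y', 'z', 'w'], true_items, 5)  # hit at position 1
late = ndcg_at_k(['x', 'y', 'z', 'w', 'a'], true_items, 5)   # same hit at position 5

print(round(discount(1), 2), round(discount(10), 2))  # 1.0 0.29
print(early > late)                                   # True
```

Precision, recall, F1, and Hit Rate are identical for the two toy lists; only NDCG separates them, which is why it is described as the most order-sensitive of the five.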

Leakage fix. The original notebook sampled training and evaluation users from the same pool with the same random seed — the 4,000 training users were a strict subset of the 5,000 evaluation users. Every model was tested on users it trained on.

Fixed with GroupShuffleSplit(test_size=0.30, groups=user_id) producing 91,846 train-fold users and 39,363 eval-fold users with zero overlap. S5, S6, and S7 all train exclusively from train_fold; the benchmark draws exclusively from eval_fold.
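The property that matters here is that users, not rows, are assigned to folds. The sketch below is a plain-Python stand-in for that idea and is not scikit-learn's GroupShuffleSplit; the seed, the toy user IDs, and the name split_users are assumptions for illustration.

```python
import random

def split_users(user_ids, test_size=0.30, seed=42):
    """Assign each distinct user to exactly one fold."""
    unique = sorted(set(user_ids))
    random.Random(seed).shuffle(unique)
    n_eval = round(len(unique) * test_size)
    eval_fold = set(unique[:n_eval])
    train_fold = set(unique[n_eval:])
    return train_fold, eval_fold

# 1,000 toy users with three rows each; duplicates must not leak across folds.
user_ids = [uid for uid in range(1, 1001) for _ in range(3)]
train_fold, eval_fold = split_users(user_ids)

assert train_fold.isdisjoint(eval_fold)  # zero overlap by construction
print(len(train_fold), len(eval_fold))   # 700 300
```

Checking isdisjoint on the two user sets is a cheap guard worth keeping next to any split, since it would have caught the original subset leak.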

Benchmark population. The benchmark filters to warm users only (eval_warm) before evaluation. S6 routes 12.1% of users (≤ 3 prior orders) to a weak cold-start fallback; S5 and S7 run their full ranker on all users. Including cold-start users in the comparison would give S5/S7 an uncontrolled advantage — evaluating on identical warm users makes the comparison fair.
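A minimal sketch of the warm-user filter, assuming a mapping from user ID to prior-order count and the same "3 or fewer orders" rule described above. The toy counts and the name split_warm_cold are invented for this example.

```python
def split_warm_cold(order_counts, threshold=3):
    """Users with at most `threshold` prior orders are treated as cold-start."""
    cold = {u for u, n in order_counts.items() if n <= threshold}
    warm = set(order_counts) - cold
    return warm, cold

order_counts = {101: 2, 102: 3, 103: 4, 104: 17, 105: 9}  # toy data
warm, cold = split_warm_cold(order_counts)
cold_share = len(cold) / len(order_counts)
print(sorted(warm), sorted(cold), f'{cold_share:.1%}')
```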

# Import libraries
import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
import matplotlib.patches as mpatches
import seaborn as sns
import zipfile, gc, time, warnings
from collections import defaultdict, Counter
from itertools import combinations
import lightgbm as lgb
from xgboost import XGBClassifier
from sklearn.model_selection import GroupShuffleSplit
from sklearn.metrics import roc_auc_score, average_precision_score
from mlxtend.frequent_patterns import apriori, association_rules
from mlxtend.preprocessing import TransactionEncoder
from google.colab import drive
import tempfile
import os

warnings.filterwarnings('ignore')
plt.style.use('seaborn-v0_8-whitegrid')
sns.set_palette('husl')
pd.set_option('display.float_format', '{:.4f}'.format)
pd.set_option('display.max_columns', None)
print('✅ Libraries loaded')

✅ Libraries loaded

# Mount drive to access data

drive.mount('/content/drive/')

Mounted at /content/drive/

# Define functions to perform scoring
def precision_at_k(recs, true_set, k):
    return len(set(recs[:k]) & true_set) / k if k else 0.0

def recall_at_k(recs, true_set, k):
    return len(set(recs[:k]) & true_set) / len(true_set) if true_set else 0.0

def f1_at_k(recs, true_set, k):
    p = precision_at_k(recs, true_set, k)
    r = recall_at_k(recs, true_set, k)
    return 2*p*r/(p+r) if (p+r) > 0 else 0.0

def ndcg_at_k(recs, true_set, k):
    # enumerate is 0-indexed, so log2(i+2) matches log2(position+1) in the formula
    dcg = sum(1/np.log2(i+2) for i, p in enumerate(recs[:k]) if p in true_set)
    ideal = sum(1/np.log2(i+2) for i in range(min(len(true_set), k)))
    return dcg/ideal if ideal else 0.0

def score_recs(recs, true_set, K):
    return {
        'precision' : precision_at_k(recs, true_set, K),
        'recall'    : recall_at_k(recs, true_set, K),
        'f1'        : f1_at_k(recs, true_set, K),
        'ndcg'      : ndcg_at_k(recs, true_set, K),
        'hit_rate'  : 1 if any(p in true_set for p in recs[:K]) else 0,
    }

def evaluate_strategy(fn, data_df, K = 10, n = None, seed = 100):
    rows = data_df if n is None else data_df.sample(n = n, random_state = seed)
    m = {k: [] for k in ('precision','recall','f1','ndcg','hit_rate')}
    for _, row in rows.iterrows():
        s = score_recs(fn(row['user_id'], row['cart_sequence'], K=K), row['true_items'], K)
        for key in m: m[key].append(s[key])
    return {k: float(np.mean(v)) for k, v in m.items()}

def s1_popularity(user_id, cart_sequence, K = 10, **_):
    in_cart = set(cart_sequence)
    return [p for p in POPULARITY_POOL if p not in in_cart][:K]

def s2_personal(user_id, cart_sequence, K = 10, **_):
    in_cart = set(cart_sequence)
    return [p for p in user_history.get(user_id, []) if p not in in_cart][:K]

def s3_rules(user_id, cart_sequence, K = 10, **_):
    in_cart_ids = set(cart_sequence)
    in_cart_names = {pid_to_name.get(p, '') for p in cart_sequence}
    scores = defaultdict(float)
    for name in in_cart_names:
        for con_name, score in assoc_lookup.get(name, {}).items():
            pid = name_to_pid.get(con_name)
            if pid and pid not in in_cart_ids:
                scores[pid] += score
    ranked = sorted(scores, key=scores.get, reverse=True)
    if len(ranked) >= K:
        return ranked[:K]
    # Too few rule hits: pad with personal history
    ranked_set = set(ranked)
    extra = [p for p in s2_personal(user_id, cart_sequence, K*3) if p not in ranked_set]
    return (ranked + extra)[:K]

# Build the sequential markov chain
def s4_markov(user_id, cart_sequence, K = 10, n_gram = 2, alpha = 0.4, beta = 0.25, **_):
    in_cart = set(cart_sequence)
    next_pos = len(cart_sequence)
    context = cart_sequence[-n_gram:] if len(cart_sequence) >= n_gram else cart_sequence
    scores = defaultdict(float)
    # Transition probabilities from the last n_gram items in the cart
    for prev in context:
        for nxt, prob in trans_prob.get(prev, {}).items():
            if nxt not in in_cart:
                scores[nxt] += prob
    # Position prior for the next cart slot, weighted by alpha
    capped_pos = min(next_pos, MAX_POS - 1)
    for pid, prob in pos_prob.get(capped_pos, {}).items():
        if pid not in in_cart:
            scores[pid] += alpha * prob
    # Personal reorder score, weighted by beta
    for pid, rs in user_rs_list.get(user_id, []):
        if pid not in in_cart and rs > 0:
            scores[pid] += beta * float(rs)
    ranked = sorted(scores, key=scores.get, reverse=True)
    if len(ranked) >= K:
        return ranked[:K]
    ranked_set = set(ranked)
    extra = [p for p in s2_personal(user_id, cart_sequence, K*3) if p not in ranked_set]
    return (ranked + extra)[:K]

# Function to perform inference on lgb model
def s5_lgbm(user_id, cart_sequence, K = 10, **_):
    in_cart = set(cart_sequence)
    cart_sz = len(cart_sequence)
    personal = [p for p in user_history.get(user_id, [])[:MAX_PERSONAL] if p not in in_cart]
    personal_set = set(personal)
    globl = [p for p in GLOBAL_POOL if p not in in_cart and p not in personal_set]
    candidates = personal + globl
    if not candidates:
        return s1_popularity(user_id, cart_sequence, K)
    markov_sc = defaultdict(float)
    for prev in cart_sequence:
        for nxt, p in trans_prob.get(prev, {}).items():
            markov_sc[nxt] += p
    assoc_sc = defaultdict(float)
    for ant_pid in cart_sequence:
        for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
            assoc_sc[con_pid] += sc
    pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
    rows = [{'user_id': user_id, 'product_id': int(pid),
             'cart_size': cart_sz,
             'markov_score': float(markov_sc.get(pid, 0.0)),
             'assoc_score': float(assoc_sc.get(pid, 0.0)),
             'pos_prob_next': float(pos_p.get(pid, 0.0))}
            for pid in candidates]
    inf_df = (pd.DataFrame(rows)
              .merge(prod_feat, on='product_id', how='left')
              .merge(user_feat_s5, on='user_id', how='left')
              .merge(up_feat, on=['user_id','product_id'], how='left')
              .fillna(0))
    for fc in FEATURE_COLS:
        if fc not in inf_df.columns:
            inf_df[fc] = 0.0
    scores = lgb_model.predict_proba(inf_df[FEATURE_COLS].values)[:, 1]
    inf_df['score'] = scores
    return inf_df.nlargest(K, 'score')['product_id'].tolist()

# Function to evaluate lgb model in batches
def evaluate_lgbm_batched(eval_data, K = 10, n = None, seed = 100, chunk_size = 200):
    rows_df = eval_data if n is None else eval_data.sample(n=n, random_state=seed)
    rows_df = rows_df.reset_index(drop=True)
    m = {k: [] for k in ('precision','recall','f1','ndcg','hit_rate')}
    for chunk_start in range(0, len(rows_df), chunk_size):
        chunk = rows_df.iloc[chunk_start:chunk_start + chunk_size]
        cand_rows = []
        for idx, row in chunk.iterrows():
            uid = int(row['user_id'])
            cart_seq = row['cart_sequence']
            in_cart = set(cart_seq)
            cart_sz = len(cart_seq)
            personal = [p for p in user_history.get(uid,[])[:MAX_PERSONAL] if p not in in_cart]
            personal_set = set(personal)
            globl = [p for p in GLOBAL_POOL if p not in in_cart and p not in personal_set]
            candidates = personal + globl or s1_popularity(uid, cart_seq, K)
            markov_sc = defaultdict(float)
            for prev in cart_seq:
                for nxt, p in trans_prob.get(prev, {}).items():
                    markov_sc[nxt] += p
            assoc_sc = defaultdict(float)
            for ant_pid in cart_seq:
                for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
                    assoc_sc[con_pid] += sc
            pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
            for pid in candidates:
                cand_rows.append((idx, uid, int(pid), cart_sz,
                                  float(markov_sc.get(pid,0.0)),
                                  float(assoc_sc.get(pid,0.0)),
                                  float(pos_p.get(pid,0.0))))
        if not cand_rows:
            continue
        c_df = pd.DataFrame(cand_rows, columns = ['idx','user_id','product_id','cart_size','markov_score','assoc_score','pos_prob_next'])
        c_df = (c_df.merge(prod_feat, on = 'product_id', how = 'left')
                .merge(user_feat_s5, on = 'user_id', how = 'left')
                .merge(up_feat, on = ['user_id','product_id'], how = 'left')
                .fillna(0))
        for fc in FEATURE_COLS:
            if fc not in c_df.columns: c_df[fc] = 0.0
        # Score the whole chunk in one model call, then rank per user
        c_df['score'] = lgb_model.predict_proba(c_df[FEATURE_COLS].values)[:, 1]
        for idx, row in chunk.iterrows():
            user_cands = c_df[c_df['idx'] == idx]
            recs = [] if user_cands.empty else user_cands.nlargest(K,'score')['product_id'].tolist()
            s = score_recs(recs, row['true_items'], K)
            for key in m: m[key].append(s[key])
        del c_df, cand_rows; gc.collect()
    return {k: float(np.mean(v)) for k, v in m.items()}

# Function to check user history
def is_cold_start(user_id):
    return user_order_count.get(int(user_id), 0) <= 3

# Function to get recs for those with no history
def cold_start_blend(user_id, cart_sequence, K = 10):
    """S1 popularity blended with any S3 association rule hits."""
    assoc_recs = s3_rules(user_id, cart_sequence, K = K)
    pop_recs = s1_popularity(user_id, cart_sequence, K = K * 2)
    seen = set(assoc_recs)
    blended = list(assoc_recs)
    for p in pop_recs:
        if p not in seen:
            blended.append(p)
            seen.add(p)
        if len(blended) >= K:
            break
    return blended[:K]

# Function to create the candidate pool
def build_candidate_pool(user_id, cart_sequence):
    """
    Returns (candidates: list[int], meta: dict[int → dict]).

    meta keys per product_id:
        s2_score       reorder_score from S2 personal history
        s3_score       aggregated conf×lift from S3 rules fired by the cart
        s4_score       Markov + position probability from S4
        vote_count     how many of the 3 generators nominated this item (1–3)
        from_personal  1 if nominated by S2
        from_assoc     1 if nominated by S3
        from_markov    1 if nominated by S4
        from_global    1 if added only as global popularity fill
    """
    in_cart = set(cart_sequence)
    cart_sz = len(cart_sequence)
    meta = defaultdict(lambda: {
        's2_score':0.0,'s3_score':0.0,'s4_score':0.0,
        'vote_count':0,'from_personal':0,'from_assoc':0,
        'from_markov':0,'from_global':0})

    # Personal history
    s2_pool = [p for p in user_history.get(user_id, []) if p not in in_cart][:S6_PERSONAL_CAP]
    rs_lookup = dict(user_rs_list.get(user_id, []))
    for pid in s2_pool:
        meta[pid]['s2_score'] = float(rs_lookup.get(pid, 0.0))
        meta[pid]['from_personal'] = 1
        meta[pid]['vote_count'] += 1

    # Assoc rules
    in_cart_names = {pid_to_name.get(p,'') for p in cart_sequence}
    assoc_scores = defaultdict(float)
    for name in in_cart_names:
        for con_name, score in assoc_lookup.get(name, {}).items():
            pid = name_to_pid.get(con_name)
            if pid and pid not in in_cart:
                assoc_scores[pid] += score
    s3_pool = sorted(assoc_scores, key=assoc_scores.get, reverse=True)[:S6_ASSOC_CAP]
    for pid in s3_pool:
        meta[pid]['s3_score'] = float(assoc_scores.get(pid, 0.0))
        meta[pid]['from_assoc'] = 1
        meta[pid]['vote_count'] += 1

    # Markov chain
    context = cart_sequence[-2:] if len(cart_sequence) >= 2 else cart_sequence
    capped_pos = min(cart_sz, MAX_POS - 1)
    markov_scores = defaultdict(float)
    for prev in context:
        for nxt, prob in trans_prob.get(prev, {}).items():
            if nxt not in in_cart:
                markov_scores[nxt] += prob
    for pid, prob in pos_prob.get(capped_pos, {}).items():
        if pid not in in_cart:
            markov_scores[pid] += 0.4 * prob
    s4_pool = sorted(markov_scores, key=markov_scores.get, reverse=True)[:S6_MARKOV_CAP]
    for pid in s4_pool:
        meta[pid]['s4_score'] = float(markov_scores.get(pid, 0.0))
        meta[pid]['from_markov'] = 1
        meta[pid]['vote_count'] += 1

    # Global
    # Markov pool is scored but NOT added as candidate source; it is anti-predictive
    # as a nominator (lift 0.53×) but its scores remain useful features on other candidates
    all_cands = set(s2_pool) | set(s3_pool) | set(s4_pool)
    for pid in GLOBAL_POOL:
        if len(all_cands) >= S6_PERSONAL_CAP + S6_GLOBAL_FILL:
            break
        if pid not in in_cart and pid not in all_cands:
            meta[pid]['from_global'] = 1
            meta[pid]['vote_count'] = max(meta[pid]['vote_count'], 1)
            all_cands.add(pid)
    return list(all_cands), dict(meta)

# Function to perform the meta ranking inference
def s6_ensemble(user_id, cart_sequence, K=10, **_):
    """
    Master ensemble (S6).

    Stage 0  Cold-start gate: users with ≤ COLD_START_THRESHOLD orders
             get the S1+S3 fallback directly.
    Stage 1  build_candidate_pool → union of S2/S3/S4 nominees + meta-scores.
    Stage 2  S6 LightGBM meta-ranker → top-K product IDs.
    """
    if is_cold_start(user_id):
        return cold_start_blend(user_id, cart_sequence, K=K)
    in_cart = set(cart_sequence)
    cart_sz = len(cart_sequence)
    candidates, meta = build_candidate_pool(user_id, cart_sequence)
    if not candidates:
        return cold_start_blend(user_id, cart_sequence, K=K)
    markov_sc = defaultdict(float)
    for prev in cart_sequence:
        for nxt, p in trans_prob.get(prev, {}).items():
            markov_sc[nxt] += p
    assoc_sc = defaultdict(float)
    for ant_pid in cart_sequence:
        for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
            assoc_sc[con_pid] += sc
    pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
    rows = []
    for pid in candidates:
        m = meta.get(pid, {})
        rows.append({
            'user_id': user_id,
            'product_id': int(pid),
            'cart_size': cart_sz,
            'markov_score': float(markov_sc.get(pid, 0.0)),
            'assoc_score': float(assoc_sc.get(pid, 0.0)),
            'pos_prob_next': float(pos_p.get(pid, 0.0)),
            's2_score': float(m.get('s2_score', 0.0)),
            's3_score': float(m.get('s3_score', 0.0)),
            's4_score': float(m.get('s4_score', 0.0)),
            'vote_count': int(m.get('vote_count', 0)),
            'from_personal': int(m.get('from_personal', 0)),
            'from_assoc': int(m.get('from_assoc', 0)),
            'from_markov': int(m.get('from_markov', 0)),
            'from_global': int(m.get('from_global', 0)),
        })
    inf_df = (pd.DataFrame(rows)
              .merge(prod_feat, on='product_id', how='left')
              .merge(user_feat_s5, on='user_id', how='left')
              .merge(up_feat, on=['user_id','product_id'], how='left')
              .fillna(0))
    for fc in S6_FEATURE_COLS:
        if fc not in inf_df.columns:
            inf_df[fc] = 0.0
    inf_df['score'] = s6_model.predict_proba(inf_df[S6_FEATURE_COLS].values)[:, 1]
    return inf_df.nlargest(K, 'score')['product_id'].tolist()

# Function to produce the meta ranking in chunks
def evaluate_s6_batched(eval_data, K = 10, n = None, seed = 100, chunk_size = 150):
    rows_df = eval_data if n is None else eval_data.sample(n=n, random_state=seed)
    rows_df = rows_df.reset_index(drop=True)
    m = {k: [] for k in ('precision','recall','f1','ndcg','hit_rate')}
    for chunk_start in range(0, len(rows_df), chunk_size):
        chunk = rows_df.iloc[chunk_start:chunk_start + chunk_size]
        cand_rows = []
        for idx, row in chunk.iterrows():
            uid = int(row['user_id'])
            cart_seq = row['cart_sequence']
            in_cart = set(cart_seq)
            cart_sz = len(cart_seq)
            if is_cold_start(uid):
                # Cold-start users are scored here and skipped in the ranking pass
                recs = cold_start_blend(uid, cart_seq, K=K)
                s = score_recs(recs, row['true_items'], K)
                for key in m: m[key].append(s[key])
                continue
            candidates, meta = build_candidate_pool(uid, cart_seq)
            if not candidates:
                candidates, meta = s1_popularity(uid, cart_seq, K), {}
            markov_sc = defaultdict(float)
            for prev in cart_seq:
                for nxt, p in trans_prob.get(prev, {}).items():
                    markov_sc[nxt] += p
            assoc_sc = defaultdict(float)
            for ant_pid in cart_seq:
                for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
                    assoc_sc[con_pid] += sc
            pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
            for pid in candidates:
                mm = meta.get(pid, {})
                cand_rows.append((
                    idx, uid, int(pid), cart_sz,
                    float(markov_sc.get(pid,0.0)), float(assoc_sc.get(pid,0.0)),
                    float(pos_p.get(pid,0.0)),
                    float(mm.get('s2_score',0.0)), float(mm.get('s3_score',0.0)),
                    float(mm.get('s4_score',0.0)), int(mm.get('vote_count',0)),
                    int(mm.get('from_personal',0)), int(mm.get('from_assoc',0)),
                    int(mm.get('from_markov',0)), int(mm.get('from_global',0)),
                ))
        if not cand_rows:
            continue
        c_df = pd.DataFrame(cand_rows, columns=[
            'idx','user_id','product_id','cart_size',
            'markov_score','assoc_score','pos_prob_next',
            's2_score','s3_score','s4_score','vote_count',
            'from_personal','from_assoc','from_markov','from_global'])
        c_df = (c_df.merge(prod_feat, on='product_id', how='left')
                .merge(user_feat_s5, on='user_id', how='left')
                .merge(up_feat, on=['user_id','product_id'], how='left')
                .fillna(0))
        for fc in S6_FEATURE_COLS:
            if fc not in c_df.columns: c_df[fc] = 0.0
        c_df['score'] = s6_model.predict_proba(c_df[S6_FEATURE_COLS].values)[:, 1]
        for idx, row in chunk.iterrows():
            if is_cold_start(int(row['user_id'])):
                continue  # already scored above; scoring again would double-count
            user_cands = c_df[c_df['idx'] == idx]
            if user_cands.empty:
                recs = cold_start_blend(int(row['user_id']), row['cart_sequence'], K=K)
            else:
                recs = user_cands.nlargest(K,'score')['product_id'].tolist()
            s = score_recs(recs, row['true_items'], K)
            for key in m: m[key].append(s[key])
        del c_df, cand_rows; gc.collect()
    return {k: float(np.mean(v)) for k, v in m.items()}

# Function to perform inference on xgb model
def s7_xgb(user_id, cart_sequence, K = 10, **_):
    in_cart = set(cart_sequence)
    cart_sz = len(cart_sequence)
    personal = [p for p in user_history.get(user_id, [])[:MAX_PERSONAL] if p not in in_cart]
    personal_set = set(personal)
    globl = [p for p in GLOBAL_POOL if p not in in_cart and p not in personal_set]
    candidates = personal + globl
    if not candidates:
        return s1_popularity(user_id, cart_sequence, K)
    markov_sc = defaultdict(float)
    for prev in cart_sequence:
        for nxt, p in trans_prob.get(prev, {}).items():
            markov_sc[nxt] += p
    assoc_sc = defaultdict(float)
    for ant_pid in cart_sequence:
        for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
            assoc_sc[con_pid] += sc
    pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
    rows = [{'user_id': user_id, 'product_id': int(pid),
             'cart_size': cart_sz,
             'markov_score': float(markov_sc.get(pid, 0.0)),
             'assoc_score': float(assoc_sc.get(pid, 0.0)),
             'pos_prob_next': float(pos_p.get(pid, 0.0))}
            for pid in candidates]
    inf_df = (pd.DataFrame(rows)
              .merge(prod_feat, on='product_id', how='left')
              .merge(user_feat_s5, on='user_id', how='left')
              .merge(up_feat, on=['user_id','product_id'], how='left')
              .fillna(0))
    for fc in FEATURE_COLS:
        if fc not in inf_df.columns:
            inf_df[fc] = 0.0
    scores = xgb_model.predict_proba(inf_df[FEATURE_COLS].values)[:, 1]
    inf_df['score'] = scores
    return inf_df.nlargest(K, 'score')['product_id'].tolist()

# Single-pass benchmark: scores K=5 and K=10 simultaneously
def evaluate_strategy_multi_k(fn, data_df, ks = (5,10), n = None, seed = 100):
    """Generic single-pass evaluator for non-batched strategies."""
    rows = data_df if n is None else data_df.sample(n=n, random_state=seed)
    m = {k: {mk: [] for mk in ('precision','recall','f1','ndcg','hit_rate')}
         for k in ks}
    for _, row in rows.iterrows():
        # Generate once at the largest K, then score each cut-off on the same list
        recs = fn(row['user_id'], row['cart_sequence'], K=max(ks))
        for k in ks:
            s = score_recs(recs, row['true_items'], k)
            for key in m[k]: m[k][key].append(s[key])
    return {k: {mk: float(np.mean(v)) for mk, v in m[k].items()} for k in ks}

def evaluate_lgbm_batched_multi_k(eval_data, ks=(5,10), n=None, seed=100, chunk_size=200):
    """S5 single-pass batched evaluator for multiple K values."""
    rows_df = eval_data if n is None else eval_data.sample(n=n, random_state=seed)
    rows_df = rows_df.reset_index(drop=True)
    m = {k: {mk: [] for mk in ('precision','recall','f1','ndcg','hit_rate')}
         for k in ks}
    max_k = max(ks)
    for chunk_start in range(0, len(rows_df), chunk_size):
        chunk = rows_df.iloc[chunk_start:chunk_start + chunk_size]
        cand_rows = []
        for idx, row in chunk.iterrows():
            uid = int(row['user_id'])
            cart_seq = row['cart_sequence']
            in_cart = set(cart_seq)
            cart_sz = len(cart_seq)
            personal = [p for p in user_history.get(uid,[])[:MAX_PERSONAL] if p not in in_cart]
            personal_set = set(personal)
            globl = [p for p in GLOBAL_POOL if p not in in_cart and p not in personal_set]
            candidates = personal + globl or s1_popularity(uid, cart_seq, max_k)
            markov_sc = defaultdict(float)
            for prev in cart_seq:
                for nxt, p in trans_prob.get(prev, {}).items():
                    markov_sc[nxt] += p
            assoc_sc = defaultdict(float)
            for ant_pid in cart_seq:
                for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
                    assoc_sc[con_pid] += sc
            pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
            for pid in candidates:
                cand_rows.append((idx, uid, int(pid), cart_sz,
                                  float(markov_sc.get(pid,0.0)),
                                  float(assoc_sc.get(pid,0.0)),
                                  float(pos_p.get(pid,0.0))))
        if not cand_rows:
            continue
        c_df = pd.DataFrame(cand_rows, columns=[
            'idx','user_id','product_id','cart_size',
            'markov_score','assoc_score','pos_prob_next'])
        c_df = (c_df.merge(prod_feat, on='product_id', how='left')
                .merge(user_feat_s5, on='user_id', how='left')
                .merge(up_feat, on=['user_id','product_id'], how='left')
                .fillna(0))
        for fc in FEATURE_COLS:
            if fc not in c_df.columns: c_df[fc] = 0.0
        c_df['score'] = lgb_model.predict_proba(c_df[FEATURE_COLS].values)[:, 1]
        for idx, row in chunk.iterrows():
            user_cands = c_df[c_df['idx'] == idx]
            recs = [] if user_cands.empty else user_cands.nlargest(max_k, 'score')['product_id'].tolist()
            for k in ks:
                s = score_recs(recs, row['true_items'], k)
                for key in m[k]: m[k][key].append(s[key])
        del c_df, cand_rows; gc.collect()
    return {k: {mk: float(np.mean(v)) for mk, v in m[k].items()} for k in ks}

def evaluate_s6_batched_multi_k(eval_data, ks = (5,10), n = None, seed = 100, chunk_size = 150):
    """S6 single-pass batched evaluator for multiple K values."""
    rows_df = eval_data if n is None else eval_data.sample(n=n, random_state=seed)
    rows_df = rows_df.reset_index(drop=True)
    m = {k: {mk: [] for mk in ('precision','recall','f1','ndcg','hit_rate')}
         for k in ks}
    max_k = max(ks)
    for chunk_start in range(0, len(rows_df), chunk_size):
        chunk = rows_df.iloc[chunk_start:chunk_start + chunk_size]
        cand_rows = []
        for idx, row in chunk.iterrows():
            uid = int(row['user_id'])
            cart_seq = row['cart_sequence']
            in_cart = set(cart_seq)
            cart_sz = len(cart_seq)
            if is_cold_start(uid):
                # Cold-start users are scored here and skipped in the ranking pass
                recs = cold_start_blend(uid, cart_seq, K=max_k)
                for k in ks:
                    s = score_recs(recs, row['true_items'], k)
                    for key in m[k]: m[k][key].append(s[key])
                continue
            candidates, meta = build_candidate_pool(uid, cart_seq)
            if not candidates:
                candidates, meta = s1_popularity(uid, cart_seq, max_k), {}
            markov_sc = defaultdict(float)
            for prev in cart_seq:
                for nxt, p in trans_prob.get(prev, {}).items():
                    markov_sc[nxt] += p
            assoc_sc = defaultdict(float)
            for ant_pid in cart_seq:
                for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
                    assoc_sc[con_pid] += sc
            pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
            for pid in candidates:
                mm = meta.get(pid, {})
                cand_rows.append((
                    idx, uid, int(pid), cart_sz,
                    float(markov_sc.get(pid,0.0)), float(assoc_sc.get(pid,0.0)),
                    float(pos_p.get(pid,0.0)),
                    float(mm.get('s2_score',0.0)), float(mm.get('s3_score',0.0)),
                    float(mm.get('s4_score',0.0)), int(mm.get('vote_count',0)),
                    int(mm.get('from_personal',0)), int(mm.get('from_assoc',0)),
                    int(mm.get('from_markov',0)), int(mm.get('from_global',0)),
                ))
        if not cand_rows:
            continue
        c_df = pd.DataFrame(cand_rows, columns=[
            'idx','user_id','product_id','cart_size',
            'markov_score','assoc_score','pos_prob_next',
            's2_score','s3_score','s4_score','vote_count',
            'from_personal','from_assoc','from_markov','from_global'])
        c_df = (c_df.merge(prod_feat, on='product_id', how='left')
                .merge(user_feat_s5, on='user_id', how='left')
                .merge(up_feat, on=['user_id','product_id'], how='left')
                .fillna(0))
        for fc in S6_FEATURE_COLS:
            if fc not in c_df.columns: c_df[fc] = 0.0
        c_df['score'] = s6_model.predict_proba(c_df[S6_FEATURE_COLS].values)[:, 1]
        for idx, row in chunk.iterrows():
            if is_cold_start(int(row['user_id'])):
                continue  # already scored above; scoring again would double-count
            user_cands = c_df[c_df['idx'] == idx]
            if user_cands.empty:
                recs = cold_start_blend(int(row['user_id']), row['cart_sequence'], K=max_k)
            else:
                recs = user_cands.nlargest(max_k, 'score')['product_id'].tolist()
            for k in ks:
                s = score_recs(recs, row['true_items'], k)
                for key in m[k]: m[k][key].append(s[key])
        del c_df, cand_rows; gc.collect()
    return {k: {mk: float(np.mean(v)) for mk, v in m[k].items()} for k in ks}

def evaluate_xgb_batched_multi_k(eval_data, ks = (5,10), n = None, seed = 100, chunk_size = 200):
    """S7 single-pass batched evaluator for multiple K values."""
    rows_df = eval_data if n is None else eval_data.sample(n=n, random_state=seed)
    rows_df = rows_df.reset_index(drop=True)
    m = {k: {mk: [] for mk in ('precision','recall','f1','ndcg','hit_rate')} for k in ks}
    max_k = max(ks)
    for chunk_start in range(0, len(rows_df), chunk_size):
        chunk = rows_df.iloc[chunk_start:chunk_start + chunk_size]
        cand_rows = []
        for idx, row in chunk.iterrows():
            uid = int(row['user_id'])
            cart_seq = row['cart_sequence']
            in_cart = set(cart_seq)
            cart_sz = len(cart_seq)
            personal = [p for p in user_history.get(uid,[])[:MAX_PERSONAL] if p not in in_cart]
            personal_set = set(personal)
            globl = [p for p in GLOBAL_POOL if p not in in_cart and p not in personal_set]
            candidates = personal + globl or s1_popularity(uid, cart_seq, max_k)
            markov_sc = defaultdict(float)
            for prev in cart_seq:
                for nxt, p in trans_prob.get(prev, {}).items():
                    markov_sc[nxt] += p
            assoc_sc = defaultdict(float)
            for ant_pid in cart_seq:
                for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
                    assoc_sc[con_pid] += sc
            pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})
            for pid in candidates:
                cand_rows.append((idx, uid, int(pid), cart_sz,
                                  float(markov_sc.get(pid,0.0)),
                                  float(assoc_sc.get(pid,0.0)),
                                  float(pos_p.get(pid,0.0))))
        if not cand_rows:
            continue
        c_df = pd.DataFrame(cand_rows, columns=[
            'idx','user_id','product_id','cart_size',
            'markov_score','assoc_score','pos_prob_next'])
        c_df = (c_df.merge(prod_feat, on='product_id', how='left')
                .merge(user_feat_s5, on='user_id', how='left')
                .merge(up_feat, on=['user_id','product_id'], how='left')
                .fillna(0))
        for fc in FEATURE_COLS:
            if fc not in c_df.columns: c_df[fc] = 0.0
        c_df['score'] = xgb_model.predict_proba(c_df[FEATURE_COLS].values)[:, 1]
        for idx, row in chunk.iterrows():
            user_cands = c_df[c_df['idx'] == idx]
            recs = [] if user_cands.empty else user_cands.nlargest(max_k, 'score')['product_id'].tolist()
            for k in ks:
                s = score_recs(recs, row['true_items'], k)
                for key in m[k]: m[k][key].append(s[key])
        del c_df, cand_rows; gc.collect()
    return {k: {mk: float(np.mean(v)) for mk, v in m[k].items()} for k in ks}

# Load the data
data = {}
with zipfile.ZipFile('/content/drive/MyDrive/instacart/instacart.zip', 'r') as z:
    file_list = [f for f in z.namelist() if f.endswith('.csv')]
    print(f'Files in archive: {file_list}\n')
    for fn in file_list:
        key = fn.replace('.csv', '')
        data[key] = pd.read_csv(z.open(fn))
        print(f' ✓ {fn}: {data[key].shape[0]:,} rows × {data[key].shape[1]} cols')
print('\n✅ All datasets loaded')

Files in archive: ['aisles.csv', 'departments.csv', 'order_products__prior.csv', 'order_products__train.csv', 'orders.csv', 'products.csv']

✓ aisles.csv: 134 rows × 2 cols

✓ departments.csv: 21 rows × 2 cols

✓ order_products__prior.csv: 32,434,489 rows × 4 cols

✓ order_products__train.csv: 1,384,617 rows × 4 cols

✓ orders.csv: 3,421,083 rows × 7 cols

✓ products.csv: 49,688 rows × 4 cols

✅ All datasets loaded

# Cast to compact int types to keep RAM manageable for the 32M-row prior table.

for key in ['order_products__prior', 'order_products__train']:

for col in ['order_id', 'product_id', 'add_to_cart_order', 'reordered']:

data[key][col] = data[key][col].astype('int32')

for col in ['order_id', 'user_id', 'order_number', 'order_dow', 'order_hour_of_day']:

data['orders'][col] = data['orders'][col].astype('int32')

# Enrich tables with metadata

products = (data['products'].merge(data['aisles'], on = 'aisle_id').merge(data['departments'], on='department_id'))

prior_op = (data['order_products__prior'].merge(data['orders'][['order_id','user_id','order_number', 'days_since_prior_order']], on = 'order_id').merge(products[['product_id','product_name','aisle','department']], on = 'product_id'))

prior_op.rename(columns = {'product_name': 'product'}, inplace = True)

prior_op['user_id'] = prior_op['user_id'].astype('int32')

# Training

train_op = data['order_products__train']

orders_slim = data['orders'][['order_id','user_id','eval_set', 'order_number','days_since_prior_order']]

# Helper maps

pid_to_name = dict(zip(products['product_id'], products['product_name']))

name_to_pid = dict(zip(products['product_name'], products['product_id']))

pid_to_dept = dict(zip(products['product_id'], products['department']))

print(f'prior_op rows : {len(prior_op):,}')

print(f'train_op rows : {len(train_op):,}')

print(f'Products : {len(products):,}')

prior_op rows : 32,434,489

train_op rows : 1,384,617

Products : 49,688

2. 📖 Feature engineering

Three feature tables are assembled from prior_op in single grouped-aggregation passes and reused by every learned strategy (S5, S6, S7):

- prod_feat: per-product statistics (popularity, reorder rate, average cart position)
- user_feat: per-user statistics (order frequency, variety, average cart size)
- up_feat: user × product interaction features (reorder score, orders since last purchase)

prod_feat (49,677 × 6) — product-level statistics:

| Feature | Formula | Rationale |
|---|---|---|
| order_count | nunique(order_id) | Raw popularity: distinct baskets containing this item |
| reorder_rate | mean(reordered) | Fraction of appearances that were reorders; high = habitual staple |
| avg_cart_position | mean(add_to_cart_order) | Items added early are reached for automatically; later items are deliberate |
| global_popularity | order_count / max(order_count) | Normalised [0,1] continuous popularity signal |
| pop_rank | Integer rank descending | Ordinal signal robust to count outliers |

user_feat (206,209 × 6) — user behavioural profile:

| Feature | Formula | Rationale |
|---|---|---|
| total_orders | nunique(order_id) | User tenure proxy |
| avg_cart_size | count / nunique(order_id) | Controls for high-volume vs. low-volume shoppers |
| reorder_rate | mean(reordered) | Share of the user's purchases that are reorders; renamed reorder_rate_user at merge to avoid a column collision |
| avg_days_between | mean(days_since_prior_order) | Shopping frequency; weekly vs. monthly patterns differ |
| variety_ratio | nunique(product_id) / count(product_id) | Low = habitual, high = exploratory |
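As a quick check on how these columns relate, the sketch below recomputes the first row of the user_feat preview shown later in this section (user 1). The value of 18 unique products is not printed anywhere in the notebook; it is an assumption inferred from variety_ratio × total items. Under the further assumption that every purchase after a product's first one is flagged as a reorder, variety_ratio and the user-level reorder_rate sum to 1, which is what the five preview rows show.

```python
# Values read from the user_feat preview for user 1.
total_orders = 10
avg_cart_size = 5.9

# Total item rows for this user (59).
total_items = round(total_orders * avg_cart_size)

# ASSUMPTION: 18 distinct products, inferred from 0.3051 * 59 (not printed in the notebook).
unique_products = 18

variety_ratio = unique_products / total_items

# If every repeat purchase is flagged reordered=1, reorders = items - distinct products.
reorder_rate = (total_items - unique_products) / total_items

print(f"variety_ratio = {variety_ratio:.4f}")
print(f"reorder_rate  = {reorder_rate:.4f}")
print(f"sum           = {variety_ratio + reorder_rate:.4f}")
```

If that relationship holds across all users, the two columns carry the same information, so a tree model can split on either one interchangeably.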

up_feat (13,307,953 × 7) — user × product interaction (most informative table):

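The key derived feature is reorder_score:

\[\text{reorder\_score}(u, j) = \frac{\text{times\_ordered}(u, j) \times \text{reorder\_rate}(u, j)}{\text{orders\_since\_last}(u, j) + 1}\]

This rewards products that are ordered frequently, consistently, and recently. The +1 in the denominator prevents division by zero for items in the user's most recent order, and dividing by orders_since_last damps items that have not been bought for a long time. It is the primary sort key for S2 personal history ranking and an input feature for every learned model.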

Potential criticism: why not exponential time decay? Time decay requires knowing the gap between each specific order, introducing missing values for irregular shoppers. orders_since_last is simpler and achieves nearly identical ranking in practice because avg_days_between already captures shopping cadence as a separate user-level feature.
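To make the behaviour of reorder_score concrete, here is a minimal sketch that applies the same arithmetic as the notebook code to a few (times_ordered, times_reordered, orders_since_last) triples. The first three match rows of the up_feat preview shown later in this section; the fourth is a made-up case added to show the recency damping. The function name and signature are illustrative, not part of the notebook.

```python
def reorder_score(times_ordered: int, times_reordered: int, orders_since_last: int) -> float:
    """times_ordered * reorder_rate / (orders_since_last + 1)."""
    # max(..., 1) mirrors the clip(lower=1) guard in the notebook.
    reorder_rate = times_reordered / max(times_ordered, 1)
    return times_ordered * reorder_rate / (orders_since_last + 1)

staple = reorder_score(10, 9, 0)      # bought in all 10 orders, including the latest
lapsed = reorder_score(1, 0, 5)       # bought once, five orders ago
occasional = reorder_score(3, 2, 0)   # bought three times, including the latest order
decayed = reorder_score(10, 9, 4)     # hypothetical: a former staple not bought for 4 orders

for name, value in [("staple", staple), ("lapsed", lapsed),
                    ("occasional", occasional), ("decayed", decayed)]:
    print(f"{name:<10} {value:.2f}")
```

Because times_ordered × reorder_rate equals the number of reorders, the score is effectively the reorder count divided by a recency penalty.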

# Derive the product fts

product_stats = (prior_op.groupby('product_id').agg(order_count = ('order_id', 'nunique'),

reorder_rate = ('reordered', 'mean'),

unique_users = ('user_id', 'nunique'),

avg_cart_position = ('add_to_cart_order', 'mean'),).reset_index())

product_stats['global_popularity'] = (product_stats['order_count'] / product_stats['order_count'].max())

product_stats['pop_rank'] = product_stats['order_count'].rank(ascending = False, method = 'min').astype(int)

prod_feat = product_stats[['product_id','order_count','reorder_rate','avg_cart_position','global_popularity','pop_rank']]

print(f'prod_feat shape : {prod_feat.shape}')

prod_feat shape : (49677, 6)

prod_feat.head()

| | product_id | order_count | reorder_rate | avg_cart_position | global_popularity | pop_rank |

|---|---|---|---|---|---|---|

| 0 | 1 | 1852 | 0.6134 | 5.8018 | 0.0039 | 2996 |

| 1 | 2 | 90 | 0.1333 | 9.8889 | 0.0002 | 20886 |

| 2 | 3 | 277 | 0.7329 | 6.4152 | 0.0006 | 11948 |

| 3 | 4 | 329 | 0.4468 | 9.5076 | 0.0007 | 10728 |

| 4 | 5 | 15 | 0.6000 | 6.4667 | 0.0000 | 38159 |

# Derive the user features

user_order_enriched = prior_op.merge(data['orders'][['order_id','order_number','days_since_prior_order']], on = 'order_id', how = 'left', suffixes = ('','_o'))

user_stats = user_order_enriched.groupby('user_id').agg(total_orders = ('order_id', 'nunique'),

total_items_purchased = ('product_id', 'count'),

unique_products = ('product_id', 'nunique'),

avg_cart_size = ('order_id', lambda x: x.count() / x.nunique()),

reorder_rate = ('reordered', 'mean'),

avg_days_between = ('days_since_prior_order', 'mean'),

std_days_between = ('days_since_prior_order', 'std'),).reset_index()

user_stats['variety_ratio'] = (user_stats['unique_products'] / user_stats['total_items_purchased'])

user_feat = user_stats[['user_id','total_orders','avg_cart_size','reorder_rate', 'avg_days_between','variety_ratio']]

print(f'user_feat shape : {user_feat.shape}')

user_feat shape : (206209, 6)

user_feat.head()

| | user_id | total_orders | avg_cart_size | reorder_rate | avg_days_between | variety_ratio |

|---|---|---|---|---|---|---|

| 0 | 1 | 10 | 5.9000 | 0.6949 | 20.2593 | 0.3051 |

| 1 | 2 | 14 | 13.9286 | 0.4769 | 15.9670 | 0.5231 |

| 2 | 3 | 12 | 7.3333 | 0.6250 | 11.4872 | 0.3750 |

| 3 | 4 | 5 | 3.6000 | 0.0556 | 15.3571 | 0.9444 |

| 4 | 5 | 4 | 9.2500 | 0.3784 | 14.5000 | 0.6216 |

# Derive interactions fts

up_stats = user_order_enriched.groupby(['user_id','product_id']).agg(times_ordered = ('order_id', 'count'),

times_reordered = ('reordered', 'sum'),

avg_cart_position = ('add_to_cart_order', 'mean'),

last_order_num = ('order_number', 'max'),).reset_index()

user_max_order = (data['orders'][data['orders']['eval_set'] == 'prior'].groupby('user_id')['order_number'].max().reset_index().rename(columns = {'order_number': 'max_order_num'}))

up_stats = up_stats.merge(user_max_order, on = 'user_id', how = 'left')

up_stats['orders_since_last'] = up_stats['max_order_num'] - up_stats['last_order_num']

up_stats['reorder_rate'] = (up_stats['times_reordered'] / up_stats['times_ordered'].clip(lower = 1))

up_stats['reorder_score'] = (up_stats['times_ordered'] * up_stats['reorder_rate'] / (up_stats['orders_since_last'] + 1))

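# Select the interaction features and rename the two columns that would collide
# with the product-level reorder_rate / avg_cart_position. Chaining .rename()
# returns a new frame, which avoids an inplace edit on a slice of up_stats.
up_feat = up_stats[['user_id','product_id','times_ordered','avg_cart_position', 'orders_since_last','reorder_rate','reorder_score']].rename(columns={'reorder_rate': 'up_reorder_rate', 'avg_cart_position': 'up_avg_cart_pos'})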

print(f'up_feat shape : {up_feat.shape}')

del user_order_enriched; gc.collect()

up_feat shape : (13307953, 7)

0

up_feat.head()

| | user_id | product_id | times_ordered | up_avg_cart_pos | orders_since_last | up_reorder_rate | reorder_score |

|---|---|---|---|---|---|---|---|

| 0 | 1 | 196 | 10 | 1.4000 | 0 | 0.9000 | 9.0000 |

| 1 | 1 | 10258 | 9 | 3.3333 | 0 | 0.8889 | 8.0000 |

| 2 | 1 | 10326 | 1 | 5.0000 | 5 | 0.0000 | 0.0000 |

| 3 | 1 | 12427 | 10 | 3.3000 | 0 | 0.9000 | 9.0000 |

| 4 | 1 | 13032 | 3 | 6.3333 | 0 | 0.6667 | 2.0000 |

# Ground truth: each train-set user's actual next basket

train_order_users = (data['orders'][data['orders']['eval_set'] == 'train'][['order_id','user_id']])

train_truth = (train_op.merge(train_order_users, on = 'order_id').groupby('user_id')['product_id'].apply(set).reset_index().rename(columns = {'product_id': 'true_items'}))

# Simulate "current cart" = the user's last prior order (in add-to-cart order)

last_prior_oids = (orders_slim[orders_slim['eval_set'] == 'prior'].sort_values(['user_id','order_number']).groupby('user_id')['order_id'].last().reset_index().rename(columns = {'order_id': 'last_oid'}))

last_prior_seq = (prior_op[prior_op['order_id'].isin(last_prior_oids['last_oid'])].sort_values(['order_id','add_to_cart_order']).groupby('order_id')['product_id'].apply(list).reset_index().rename(columns = {'order_id': 'last_oid', 'product_id': 'cart_sequence'}))

# Build the evaluation data

eval_df = (train_truth.merge(last_prior_oids, on = 'user_id').merge(last_prior_seq, on = 'last_oid', how = 'inner'))

# Split into train and eval folds BEFORE sampling to prevent leakage

gss_outer = GroupShuffleSplit(n_splits=1, test_size=0.30, random_state=42)

train_idx, eval_idx = next(gss_outer.split(eval_df, groups=eval_df['user_id']))

train_fold = eval_df.iloc[train_idx].reset_index(drop=True)

eval_fold = eval_df.iloc[eval_idx].reset_index(drop=True)

eval_sample = (eval_fold.sample(n = min(5000, len(eval_fold)), random_state = 100).reset_index(drop=True))

print(f'Evaluation set : {len(eval_df):,} users')

print(f'Train fold : {len(train_fold):,} users')

print(f'Eval fold : {len(eval_fold):,} users')

print(f'Eval sample : {len(eval_sample):,} users')

print(f'Avg true basket : {eval_sample["true_items"].apply(len).mean():.1f} items')

print(f'Avg cart length : {eval_sample["cart_sequence"].apply(len).mean():.1f} items')

del eval_fold; gc.collect()

Evaluation set : 131,209 users

Train fold : 91,846 users

Eval fold : 39,363 users

Eval sample : 5,000 users

Avg true basket : 10.6 items

Avg cart length : 10.2 items

0

3. 📖 Association rule mining

Pairwise co-purchase rules are mined with the Apriori algorithm on 45,318 filtered baskets. The baskets come from a uniform random sample of 50,000 users: each user's most recent prior order is taken, restricted to the 5,000 most frequent products in the sample, and kept only if at least two items remain. The resulting rules are stored in two lookups:

- assoc_lookup: item name → {consequent name: aggregated confidence × lift score}
- assoc_by_pid: product_id → {consequent product_id: aggregated score} (used by S5 and S6, which work in product-id space)

\[\text{supp}(X) = \frac{|\text{orders containing } X|}{|\text{orders}|} \qquad \text{conf}(X \Rightarrow Y) = \frac{\text{supp}(X \cup Y)}{\text{supp}(X)} \qquad \text{lift}(X \Rightarrow Y) = \frac{\text{conf}(X \Rightarrow Y)}{\text{supp}(Y)}\]

Rules with lift ≤ 1 are discarded because they offer no signal beyond marginal frequency. S3 scores a candidate item $j$ as $\sum_{i \in \text{cart}} \text{conf}(i \Rightarrow j) \times \text{lift}(i \Rightarrow j)$, aggregating evidence across all cart items.
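The three definitions and the S3 score can be checked by hand on a toy example. The sketch below uses five made-up baskets and plain Python sets rather than the Apriori implementation used in the notebook; the basket contents and function names are illustrative assumptions.

```python
# Five made-up baskets (illustrative only).
baskets = [
    {"banana", "strawberries"},
    {"banana", "strawberries", "milk"},
    {"banana", "milk"},
    {"milk"},
    {"banana"},
]

def support(items):
    """Fraction of baskets that contain every item in `items`."""
    items = set(items)
    return sum(items <= b for b in baskets) / len(baskets)

def confidence(x, y):
    return support({x, y}) / support({x})

def lift(x, y):
    return confidence(x, y) / support({y})

def s3_score(cart, candidate):
    """Sum of conf x lift over cart items, keeping only rules with lift > 1."""
    total = 0.0
    for item in cart:
        if item == candidate:
            continue
        rule_lift = lift(item, candidate)
        if rule_lift > 1:
            total += confidence(item, candidate) * rule_lift
    return total

print("conf(banana => strawberries):", confidence("banana", "strawberries"))
print("lift(banana => strawberries):", lift("banana", "strawberries"))
print("lift(milk => strawberries):  ", lift("milk", "strawberries"))
print("S3 score for strawberries:   ", s3_score(["banana", "milk"], "strawberries"))
```

In this toy data the milk rule has lift below 1, so only the banana rule contributes to the score for strawberries.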

Why only 12 antecedents? min_support=0.01 requires a pair to appear in ≥ 1% of ~45,000 baskets (~450 co-occurrences). Grocery co-purchases are highly sparse — only high-frequency items like Organic Bananas and Strawberries survive. As a standalone ranker S3 is limited; as a feature (s3_score) in S6 it provides a cross-sell signal personal history cannot.

Potential criticism: a lower min_support would give more rules. Correct. 0.005 would approximately double coverage. The tradeoff is a larger binary matrix, longer runtime, and noisier rules at the margin. This is the primary lever for improving S3 and S6 performance.
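The support threshold translates directly into a minimum co-occurrence count. A small sketch of that arithmetic for the 45,318 filtered baskets follows; the helper name is hypothetical, and it only computes the count threshold, not how many rules would actually survive.

```python
import math

N_BASKETS = 45_318  # filtered baskets reported by the notebook

def min_pair_count(min_support: float, n_baskets: int = N_BASKETS) -> int:
    """Smallest number of baskets a pair must appear in to reach min_support."""
    return math.ceil(min_support * n_baskets)

current = min_pair_count(0.01)
relaxed = min_pair_count(0.005)

print(f"min_support=0.01  -> pair must appear in at least {current} baskets")
print(f"min_support=0.005 -> pair must appear in at least {relaxed} baskets")
```

Halving the threshold halves the required count, but the number of additional rules depends on how many pairs fall between the two counts, which can only be measured by rerunning the mining step.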

# Perform sampling

rng = np.random.default_rng(100)

sample_uids = rng.choice(prior_op['user_id'].unique(), size = 50000, replace = False)

recent_prior_orders = (data['orders'][(data['orders']['user_id'].isin(sample_uids)) & (data['orders']['eval_set'] == 'prior')].sort_values(['user_id','order_number']).groupby('user_id').last().reset_index()[['user_id','order_id']])

sample_op = prior_op[prior_op['order_id'].isin(recent_prior_orders['order_id'])]

# Restrict to top-5000 products to keep the binary matrix tractable

top_products = sample_op['product'].value_counts().head(5000).index.tolist()

top_product_set = set(top_products)

filtered_baskets = (sample_op[sample_op['product'].isin(top_product_set)].groupby('order_id')['product'].apply(list).tolist())

filtered_baskets = [b for b in filtered_baskets if len(b) >= 2]

print(f'Baskets after filtering : {len(filtered_baskets):,}')

print(f'Product space : {len(top_products):,}')

Baskets after filtering : 45,318

Product space : 5,000

# Perform apriori rules extraction

te = TransactionEncoder()

te_array = te.fit_transform(filtered_baskets)

basket_df = pd.DataFrame(te_array, columns = te.columns_)

frequent_itemsets = apriori(basket_df, min_support = 0.01, use_colnames = True, max_len = 2)

frequent_itemsets['length'] = frequent_itemsets['itemsets'].apply(len)

rules = association_rules(frequent_itemsets, metric='confidence', min_threshold=0.05)

rules = (rules[rules['lift'] > 1.0].sort_values('lift', ascending=False).reset_index(drop = True))

print(f'Frequent itemsets : {len(frequent_itemsets):,}')

print(f'Association rules : {len(rules):,} (lift > 1)')

Frequent itemsets : 135

Association rules : 40 (lift > 1)

# Create the lookup dicts

rule_lookup = defaultdict(list)
for _, row in rules.iterrows():
    ant = frozenset(row['antecedents'])
    con = list(row['consequents'])[0]
    rule_lookup[ant].append((row['confidence'], row['lift'], con))

# Index the rules by each single antecedent item
rule_single = defaultdict(list)
for ant, recs in rule_lookup.items():
    for item in ant:
        for conf, lift, con in recs:
            rule_single[item].append((conf, lift, con, ant))

# Name-keyed lookup: antecedent name -> {consequent name: summed confidence × lift}
assoc_lookup = defaultdict(lambda: defaultdict(float))
for ant_name, recs in rule_single.items():
    for conf, lift, con_name, _ in recs:
        assoc_lookup[ant_name][con_name] += conf * lift

# Product-id-keyed lookup (used by S5 & S6 feature pipelines)
assoc_by_pid = defaultdict(lambda: defaultdict(float))
for ant_name, consequents in assoc_lookup.items():
    ant_pid = name_to_pid.get(ant_name)
    if ant_pid is None:
        continue
    for con_name, score in consequents.items():
        con_pid = name_to_pid.get(con_name)
        if con_pid is not None:
            assoc_by_pid[ant_pid][con_pid] += score

print(f'assoc_lookup entries : {len(assoc_lookup)} (name-keyed)')
print(f'assoc_by_pid entries : {len(assoc_by_pid)} (pid-keyed)')

assoc_lookup entries : 12 (name-keyed)

assoc_by_pid entries : 12 (pid-keyed)

4. Markov chain construction

A first-order transition matrix (trans_prob) and a position-popularity table (pos_prob) are built from all 32M prior rows. Pair counting is vectorised with sorted NumPy arrays, so the only Python loops left run once per source product (or once per cart position) to normalise the counts. The run shown below finishes in about 50 s, versus roughly 20 minutes for a naive row-by-row implementation.

Transition probabilities:

\[P(j \mid i) = \frac{\text{count}(i \rightarrow j)}{\sum_k \text{count}(i \rightarrow k)}\]

Each node stores at most 100 successors, and the probabilities are normalised over the successors that are kept. The result has 49,638 nodes with an average of 53.8 successors each.
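For intuition, the same quantity can be computed on a handful of toy orders with a plain counter. This is a readable sketch, not the vectorised NumPy routine the notebook uses, and the function name and toy data are assumptions. It does reproduce one detail of the notebook code: truncation to the top successors happens before normalisation.

```python
from collections import Counter, defaultdict

def build_transitions(orders, max_successors=100):
    """First-order transition probabilities from add-to-cart sequences."""
    counts = defaultdict(Counter)
    for seq in orders:
        # Count each consecutive (previous item, next item) pair within an order.
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1

    trans = {}
    for prev, ctr in counts.items():
        top = ctr.most_common(max_successors)      # keep the most frequent successors
        total = sum(c for _, c in top)             # normalise over the kept ones only
        trans[prev] = {nxt: c / total for nxt, c in top}
    return trans

# Toy orders, each listed in add-to-cart order.
orders = [
    ["banana", "milk", "bread"],
    ["banana", "milk"],
    ["banana", "eggs"],
]

trans = build_transitions(orders)
print(trans["banana"])
print(trans["milk"])

# With a cap of one successor, the surviving probability renormalises to 1.
capped = build_transitions(orders, max_successors=1)
print(capped["banana"])
```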

S4 scoring given cart of length $n$ and last two items $c_{n-1}, c_n$:

\[\text{score}(j) = \sum_{c \in \{c_{n-1}, c_n\}} P(j \mid c) + 0.4 \cdot \text{pos\_prob}(n, j) + 0.25 \cdot \text{reorder\_score}(u, j)\]

pos_prob[p][j] is the empirical probability that product $j$ appeared at cart position $p$ across all prior orders.
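The scoring rule can be sketched in a few lines. This is an illustration of the formula as written above, not the notebook's S4 implementation: the function name, the dictionary layouts, and the choice to drop items already in the cart are assumptions, and the toy numbers are made up.

```python
from collections import defaultdict

def s4_score(cart, trans_prob, pos_prob, user_reorder, w_pos=0.4, w_hist=0.25):
    """Rank candidates by Markov + position + personal-history evidence."""
    scores = defaultdict(float)

    # Transition evidence from the last two cart items.
    for c in cart[-2:]:
        for j, p in trans_prob.get(c, {}).items():
            scores[j] += p

    # Position prior for the slot the next item would occupy (0-based index len(cart)).
    for j, p in pos_prob.get(len(cart), {}).items():
        scores[j] += w_pos * p

    # Personal reorder_score for this user.
    for j, rs in user_reorder.items():
        scores[j] += w_hist * rs

    # ASSUMPTION: items already in the cart are not recommended again.
    for c in cart:
        scores.pop(c, None)

    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Toy inputs (illustrative only).
trans_prob = {
    "banana": {"milk": 0.6, "eggs": 0.4},
    "milk": {"bread": 0.5, "eggs": 0.5},
}
pos_prob = {2: {"bread": 0.1}}
user_reorder = {"eggs": 2.0}

ranked = s4_score(["banana", "milk"], trans_prob, pos_prob, user_reorder)
for item, score in ranked:
    print(f"{item:<6} {score:.2f}")
```

Note that reorder_score is unbounded while the other two terms are probabilities, so even with a weight of 0.25 a strong personal staple can dominate the sum.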

Potential criticism: why first-order only? Second-order would require $O(|\text{products}|^2)$ states, about 2.5 billion at 49,688 products, nearly all zero. First-order provides sufficient sequential signal at tractable memory cost. The markov_score feature ranks 3rd by split count in S5, which suggests it adds value beyond personal history alone.

# Init vars

MAX_POS = 20

MAX_SUCCESSORS = 100

MAX_POS_ITEMS = 200

MAX_USER_HIST = 50

print('Building Markov transition matrix…')

# Start timing

t0 = time.time()

arr = prior_op[['order_id','product_id','add_to_cart_order']].to_numpy(dtype='int32')

arr = arr[np.lexsort((arr[:,2], arr[:,0]))]

oids = arr[:,0]; pids = arr[:,1]; pos0 = arr[:,2] - 1

del arr; gc.collect()

same = oids[:-1] == oids[1:]

prev_p = pids[:-1][same].astype('int32')

next_p = pids[1: ][same].astype('int32')

del same; gc.collect()

order = np.lexsort((next_p, prev_p))

prev_s = prev_p[order]; next_s = next_p[order]

del prev_p, next_p, order; gc.collect()

# Mark the first row of each distinct (prev, next) pair in the sorted arrays
keep = np.concatenate([[True], (prev_s[1:] != prev_s[:-1]) | (next_s[1:] != next_s[:-1])])

# The gap between consecutive pair starts is the number of times that pair occurred
pair_counts = np.diff(np.concatenate([np.flatnonzero(keep), [len(prev_s)]]))

u_prev = prev_s[keep]; u_next = next_s[keep]

del prev_s, next_s, keep; gc.collect()

grp_bounds = np.flatnonzero(np.diff(u_prev)) + 1

grp_bounds = np.concatenate([[0], grp_bounds, [len(u_prev)]])

trans_prob = {}

for i in range(len(grp_bounds) - 1):
    lo, hi = grp_bounds[i], grp_bounds[i+1]
    node = int(u_prev[lo])
    c = pair_counts[lo:hi]; t = u_next[lo:hi]
    if len(c) > MAX_SUCCESSORS:
        # Keep only the MAX_SUCCESSORS most frequent successors
        top = np.argpartition(c, -MAX_SUCCESSORS)[-MAX_SUCCESSORS:]
        c, t = c[top], t[top]
    # Normalise over the successors that were kept
    total = c.sum()
    trans_prob[node] = {int(ti): float(ci/total) for ti, ci in zip(t, c)}

del u_prev, u_next, pair_counts, grp_bounds; gc.collect()

print(f' trans_prob nodes : {len(trans_prob):,} ({time.time()-t0:.1f}s)')

# Position-popularity table

mask = pos0 < MAX_POS

p_pos = pos0[mask].astype('int32'); p_pid = pids[mask].astype('int32')

del oids, pids, pos0, mask; gc.collect()

order2 = np.lexsort((p_pid, p_pos))

pp_s = p_pos[order2]; pid_s = p_pid[order2]

del p_pos, p_pid, order2; gc.collect()

keep2 = np.concatenate([[True], (pp_s[1:] != pp_s[:-1]) | (pid_s[1:] != pid_s[:-1])])

pc = np.diff(np.concatenate([np.flatnonzero(keep2), [len(pp_s)]]))

u_pos = pp_s[keep2]; u_pid = pid_s[keep2]

del pp_s, pid_s, keep2; gc.collect()

grp2 = np.flatnonzero(np.diff(u_pos)) + 1

grp2 = np.concatenate([[0], grp2, [len(u_pos)]])

pos_prob = {}

for i in range(len(grp2) - 1):
    lo, hi = grp2[i], grp2[i+1]
    pos = int(u_pos[lo])
    c = pc[lo:hi]; t = u_pid[lo:hi]
    if len(c) > MAX_POS_ITEMS:
        # Keep only the MAX_POS_ITEMS most frequent products at this position
        top = np.argpartition(c, -MAX_POS_ITEMS)[-MAX_POS_ITEMS:]
        c, t = c[top], t[top]
    # Normalise over the products that were kept
    total = c.sum()
    pos_prob[pos] = {int(ti): float(ci/total) for ti, ci in zip(t, c)}

del u_pos, u_pid, pc, grp2; gc.collect()

# Per-user reorder-score list (capped at MAX_USER_HIST items per user)

user_rs_list = (up_stats.sort_values('reorder_score', ascending=False).groupby('user_id').head(MAX_USER_HIST).groupby('user_id').apply(lambda g: list(zip(g['product_id'].tolist(), g['reorder_score'].tolist()))).to_dict())

print(f'✅ Markov chain built in {time.time()-t0:.1f}s')

print(f' Avg successors / node : {np.mean([len(v) for v in trans_prob.values()]):.1f}')

print(f' Positions tracked : 0 – {MAX_POS-1}')

del prior_op; gc.collect()

Building Markov transition matrix…

trans_prob nodes : 49,638 (17.7s)

✅ Markov chain built in 50.0s

Avg successors / node : 53.8

Positions tracked : 0 – 19

0

5. Evaluation harness & S1–S5 strategies

S5 LightGBM & S7 XGBoost Pointwise Rankers

Both rankers are trained to answer: is this product in this user’s next basket? At inference the top-K products by predicted probability are returned.

Candidate pool (up to 600 per user): personal history top-100 by reorder_score + global top-500, filtered against the current cart.
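The pool construction described here can be sketched as a small function: take the user's top personal items, then fill with globally popular items that are neither in the cart nor already nominated. The function name and toy product ids are illustrative; the defaults follow the stated sizes (100 personal + 500 global = up to 600).

```python
def s5_candidates(history, global_pool, cart, max_personal=100):
    """Personal history first, then global fill, excluding items already in the cart."""
    in_cart = set(cart)

    # `history` is assumed to be sorted by reorder_score, best first.
    personal = [p for p in history[:max_personal] if p not in in_cart]

    # Build the exclusion set once so each membership check is O(1).
    seen = in_cart | set(personal)
    fill = [p for p in global_pool if p not in seen]

    return personal + fill

# Toy product ids (illustrative only).
history = [5, 3, 9]        # user's items, best reorder_score first
global_pool = [1, 3, 7]    # globally most popular items
cart = [9, 1]

candidates = s5_candidates(history, global_pool, cart)
print(candidates)
```

Keeping personal items ahead of the global fill preserves the reorder_score ordering, which matters if the pool is ever truncated before ranking.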

Training data: 4,000 users from train_fold → 2,097,066 candidate rows, 18,439 positives (0.88%). scale_pos_weight=1 is deliberate: aggressive reweighting (÷4 originally used) caused best_iteration=1, which yields a degenerate single-tree classifier.

LightGBM config — num_leaves=31, min_child_samples=50, early stopping at 50 rounds. Converged at iteration 102, Val ROC-AUC = 0.9085. Top features by split count: avg_cart_size (316), reorder_rate_user (308), markov_score (297) — confirming that user behavioural profile dominates, with sequential context adding meaningful signal.

XGBoost (S7) is trained on the identical X5/y5 matrix and validation fold, so the comparison isolates the algorithm. max_depth=6 and min_child_weight=50 play roughly the role of LightGBM's leaf-wise num_leaves/min_child_samples, though the match is not exact: min_child_weight bounds the sum of Hessians in a leaf rather than its row count, and a depth-6 tree can have up to 64 leaves. Converged at iteration 139, Val ROC-AUC = 0.9090. XGBoost's feature_importances_ reports gain, not split count, so the two scales are not comparable, hence separate visualisation panels.

Why scale_pos_weight=1 rather than the class ratio (~113)? Setting the full ratio caused the first tree to overcorrect, triggering early stopping after a single iteration. scale_pos_weight=1 lets gradient boosting learn incrementally across 100+ trees, producing a properly calibrated ranker. Because inference only ranks candidates by predicted probability and returns the top K, no decision threshold is involved, so the class imbalance does not need to be corrected during training.
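The class ratio quoted above follows from the candidate counts, and the ranking argument can be illustrated with a toy example. In the sketch below, reweight is a stylised stand-in that multiplies the odds by a constant; it is an assumption used to show that a monotone rescaling of scores leaves the top-K unchanged, not a description of what LightGBM does internally. The toy scores are made up.

```python
rows, positives = 2_097_066, 18_439   # counts printed by the notebook
negatives = rows - positives

ratio = negatives / positives          # negatives per positive
positive_rate = positives / rows

print(f"class ratio   : {ratio:.1f}")
print(f"positive rate : {positive_rate:.2%}")

def top_k(scores, k):
    """Product ids of the k highest-scoring candidates."""
    return [p for p, _ in sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]]

def reweight(p, w):
    """Stylised reweighting: multiply the odds of probability p by w."""
    odds = w * p / (1 - p)
    return odds / (1 + odds)

# Toy predicted probabilities for three candidates (illustrative only).
scores = {"a": 0.02, "b": 0.30, "c": 0.07}
rescaled = {pid: reweight(p, ratio) for pid, p in scores.items()}

print(top_k(scores, 2), top_k(rescaled, 2))
```

The rescaled probabilities are much larger, but the order of the candidates, and therefore the top-K list, is the same.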

# Get the global popularity constant

POPULARITY_POOL = (product_stats.sort_values('order_count', ascending = False)['product_id'].head(300).tolist())

print(f'✅ S1 ready | pool: top {len(POPULARITY_POOL)} products')

✅ S1 ready | pool: top 300 products

# Compile the user histories

user_history = (up_stats.sort_values(['user_id','reorder_score'], ascending = [True, False]).groupby('user_id')['product_id'].apply(list).to_dict())

print(f'✅ S2 ready | users with history: {len(user_history):,}')

✅ S2 ready | users with history: 206,209

# Association rules

print(f'✅ S3 ready | assoc_lookup items: {len(assoc_lookup)}')

✅ S3 ready | assoc_lookup items: 12

# Build the features for the lgb model

MAX_PERSONAL = 100

GLOBAL_POOL = (product_stats.sort_values('order_count', ascending = False)['product_id'].head(500).tolist())

# Feature tables in the exact shape expected by the S5/S6 training pipelines

FEATURE_COLS = ['cart_size', 'markov_score', 'assoc_score', 'pos_prob_next', 'order_count', 'reorder_rate', 'avg_cart_position', 'global_popularity', 'pop_rank', 'total_orders', 'avg_cart_size', 'reorder_rate_user', 'avg_days_between', 'variety_ratio', 'times_ordered', 'reorder_score', 'orders_since_last', 'up_avg_cart_pos', 'up_reorder_rate',]

# Rename user-level reorder_rate to avoid duplicate column after merge

user_feat_s5 = user_feat.rename(columns={'reorder_rate': 'reorder_rate_user'})

print(f'✅ S5 feature tables ready')

print(f' FEATURE_COLS ({len(FEATURE_COLS)}): {FEATURE_COLS}')

✅ S5 feature tables ready

FEATURE_COLS (19): ['cart_size', 'markov_score', 'assoc_score', 'pos_prob_next', 'order_count', 'reorder_rate', 'avg_cart_position', 'global_popularity', 'pop_rank', 'total_orders', 'avg_cart_size', 'reorder_rate_user', 'avg_days_between', 'variety_ratio', 'times_ordered', 'reorder_score', 'orders_since_last', 'up_avg_cart_pos', 'up_reorder_rate']

# Build the lgb training data

# Build (user_id, product_id, features, label) rows from a subsample of train_fold

train_pool_s5 = train_fold.sample(n = min(4000, len(train_fold)), random_state = 100).reset_index(drop = True)

print(f'S5 training pool : {len(train_pool_s5):,} users')

cand_rows_s5 = []
for _, row in train_pool_s5.iterrows():
    uid = int(row['user_id'])
    cart_seq = row['cart_sequence']
    true_set = row['true_items']
    in_cart = set(cart_seq)
    cart_sz = len(cart_seq)

    # Candidate pool: personal history first, then global fill without duplicates
    personal = [p for p in user_history.get(uid, [])[:MAX_PERSONAL] if p not in in_cart]
    personal_set = set(personal)  # built once rather than once per global item
    globl = [p for p in GLOBAL_POOL if p not in in_cart and p not in personal_set]
    candidates = personal + globl

    # Markov score: summed transition probability from every cart item
    markov_sc = defaultdict(float)
    for prev in cart_seq:
        for nxt, p in trans_prob.get(prev, {}).items():
            markov_sc[nxt] += p

    # Association score: summed confidence × lift from every cart item
    assoc_sc = defaultdict(float)
    for ant_pid in cart_seq:
        for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
            assoc_sc[con_pid] += sc

    # Position prior for the slot the next item would occupy
    pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})

    for pid in candidates:
        cand_rows_s5.append({
            'user_id': uid,
            'product_id': int(pid),
            'label': int(pid in true_set),
            'cart_size': cart_sz,
            'markov_score': float(markov_sc.get(pid, 0.0)),
            'assoc_score': float(assoc_sc.get(pid, 0.0)),
            'pos_prob_next': float(pos_p.get(pid, 0.0)),
        })

cand_df = (pd.DataFrame(cand_rows_s5).merge(prod_feat, on = 'product_id', how = 'left').merge(user_feat_s5, on = 'user_id', how = 'left').merge(up_feat, on = ['user_id','product_id'], how = 'left').fillna(0))

# Guard against any feature column missing after the merges
for fc in FEATURE_COLS:
    if fc not in cand_df.columns:
        cand_df[fc] = 0.0

print(f'Candidate rows : {len(cand_df):,}')
print(f'Positive labels: {cand_df["label"].sum():,} '
      f'({cand_df["label"].mean():.2%})')

S5 training pool : 4,000 users

Candidate rows : 2,097,066

Positive labels: 18,439 (0.88%)

# Train the lgb model

X5 = cand_df[FEATURE_COLS].values

y5 = cand_df['label'].values

g5 = cand_df['user_id'].values

gss = GroupShuffleSplit(n_splits=1, test_size = 0.15, random_state = 100)

tr5, va5 = next(gss.split(X5, y5, g5))

lgb_model = lgb.LGBMClassifier(n_estimators = 500,

learning_rate = 0.05,

num_leaves = 31,

min_child_samples = 50,

subsample = 0.8,

colsample_bytree = 0.8,

scale_pos_weight = 1,

n_jobs = -1,

random_state = 100,

verbose = -1)

print('Training S5 LightGBM…')

t0 = time.time()

lgb_model.fit(X5[tr5], y5[tr5], eval_set=[(X5[va5], y5[va5])], callbacks=[lgb.early_stopping(50, verbose=False), lgb.log_evaluation(100)])

print(f'Done in {time.time()-t0:.1f}s | best iter: {lgb_model.best_iteration_}')

print(f'Val ROC-AUC : {roc_auc_score(y5[va5], lgb_model.predict_proba(X5[va5])[:,1]):.4f}')

fi5 = pd.Series(lgb_model.feature_importances_, index = FEATURE_COLS).sort_values(ascending = False)

print('\nTop-10 feature importances:')

print(fi5.head(10).to_string())

Training S5 LightGBM…

[100] valid_0's binary_logloss: 0.0347946

Done in 19.1s | best iter: 102

Val ROC-AUC : 0.9085

Top-10 feature importances:

avg_cart_size 316

reorder_rate_user 308

markov_score 297

reorder_rate 296

avg_cart_position 249

variety_ratio 223

total_orders 222

avg_days_between 215

cart_size 208

orders_since_last 126

# Train the XGBoost model (S7) — same candidates and features as S5

xgb_model = XGBClassifier(n_estimators = 500,

learning_rate = 0.05,

max_depth = 6,

min_child_weight= 50,

subsample = 0.8,

colsample_bytree= 0.8,

scale_pos_weight= 1,

n_jobs = -1,

random_state = 100,

verbosity = 0,

early_stopping_rounds = 50)

print("Training S7 XGBoost…")

t0 = time.time()

xgb_model.fit(X5[tr5], y5[tr5],

eval_set=[(X5[va5], y5[va5])],

verbose=False)

print(f"Done in {time.time()-t0:.1f}s | best iter: {xgb_model.best_iteration}")

print(f"Val ROC-AUC : {roc_auc_score(y5[va5], xgb_model.predict_proba(X5[va5])[:,1]):.4f}")

fi7 = pd.Series(xgb_model.feature_importances_, index=FEATURE_COLS).sort_values(ascending=False)

print("\nTop-10 feature importances:")

print(fi7.head(10).to_string())

Training S7 XGBoost…

Done in 30.1s | best iter: 139

Val ROC-AUC : 0.9090

Top-10 feature importances:

times_ordered 0.6006

orders_since_last 0.2083

up_avg_cart_pos 0.0527

markov_score 0.0314

reorder_score 0.0314

up_reorder_rate 0.0115

pos_prob_next 0.0092

reorder_rate 0.0084

total_orders 0.0074

pop_rank 0.0060

6. S6 architecture & cold-start gate

S6 is a two-stage generate-then-rerank pipeline:

    cart_seq ──► [ Stage 1: S2 + S3 + S4 generators ] ──► merged pool + meta-scores
                                                                  │
                                                 [ Stage 2: S6 LightGBM meta-ranker ]
                                                                  │
                                                             top-K output

Users with very few orders bypass Stage 2 via a cold-start gate, since their personal history and Markov context are too sparse to produce reliable features. They receive a lightweight S1+S3 blended recommendation instead.

Why this beats S5 alone

| Mechanism | Expected effect |

|---|---|

| Wider pool (S3 cross-sells, S4 Markov) | Recall ↑ — more correct items reach the ranker |

| Explicit S3 conf×lift as a per-item feature | NDCG ↑ — ranker sees co-purchase signal per candidate |

| Strategy vote count | Precision ↑ — consensus across 3 generators is a strong reorder signal |

| Explicit S4 Markov probability as a feature | NDCG ↑ — sequence signal enters as a clean numeric |

| Cold-start branch | Hit Rate ↑ — sparse users no longer drag down scores |

12.1% of the evaluation sample (603/5,000 users) have ≤ 3 prior orders. For these users reorder_score is unreliable, the Markov chain has insufficient context, and the rankers were trained predominantly on users with richer histories.

These users are routed to cold_start_blend: S3 association rule hits first (leveraging the current cart), padded with S1 global popularity. This avoids degenerate ranker outputs for sparse users.

Potential criticism: why threshold at 3? With 3 prior orders an item can have been bought at most 3 times, of which at most 2 count as reorders, so reorder_score can be at most 3 × (2/3) / 1 = 2 for any item. That is too shallow to distinguish genuine habits from coincidental early purchases. At 4+ orders the signal becomes meaningful. The threshold of 3 is a practical judgment; a held-out grid search could optimise it.
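The gate and fallback described above can be sketched as follows. The notebook's own is_cold_start and cold_start_blend are not shown in this section, so the signatures, the default of treating unknown users as cold, and the toy data below are all assumptions; only the threshold of 3 and the "S3 hits first, padded with S1 popularity" ordering come from the text.

```python
from collections import defaultdict

COLD_START_MAX_ORDERS = 3  # threshold stated in the text

def is_cold_start(uid, user_order_count, threshold=COLD_START_MAX_ORDERS):
    """True when the user has at most `threshold` prior orders (unknown users count as cold)."""
    return user_order_count.get(uid, 0) <= threshold

def cold_start_blend(cart, assoc_by_pid, popularity_pool, k=10):
    """Association-rule hits first, then global popularity as padding."""
    in_cart = set(cart)

    # Aggregate association scores triggered by the items in the cart.
    assoc = defaultdict(float)
    for ant in cart:
        for con, score in assoc_by_pid.get(ant, {}).items():
            if con not in in_cart:
                assoc[con] += score
    recs = sorted(assoc, key=assoc.get, reverse=True)[:k]

    # Pad with the most popular products not already used.
    for pid in popularity_pool:
        if len(recs) >= k:
            break
        if pid not in in_cart and pid not in recs:
            recs.append(pid)
    return recs

# Toy data (illustrative only).
user_order_count = {1: 3, 2: 12}
assoc_by_pid = {10: {20: 1.5, 30: 0.7}}
popularity_pool = [10, 40, 20, 50]

print(is_cold_start(1, user_order_count), is_cold_start(2, user_order_count))
recs = cold_start_blend([10], assoc_by_pid, popularity_pool, k=3)
print(recs)
```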

# Create a gate to check if user has history

user_order_count = (orders_slim[orders_slim['eval_set'] == 'prior'].groupby('user_id')['order_number'].max().to_dict())

cold_count = sum(1 for uid in eval_sample['user_id'] if is_cold_start(uid))

print(f'Cold-start users in eval sample : {cold_count}/{len(eval_sample)} '

f'({cold_count/len(eval_sample):.1%}) (threshold ≤ {3} orders)')

Cold-start users in eval sample : 603/5000 (12.1%) (threshold ≤ 3 orders)

7. Stage 1 — Expanded candidate pool

build_candidate_pool returns the union of nominations from three generators plus per-item meta-scores that become features for the Stage 2 ranker.
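The body of build_candidate_pool is not shown in this section, so the following is only a sketch of how a union with per-item meta-scores could be assembled. The meta keys match S6_META_COLS, but the function name merge_nominations, the input layout, which generators actually nominate, and the choice to count a global fill as one vote (which is consistent with the vote distribution printed below, where no item has zero votes) are all assumptions.

```python
META_KEYS = ("s2_score", "s3_score", "s4_score", "vote_count",
             "from_personal", "from_assoc", "from_markov", "from_global")

def _blank_meta():
    return dict.fromkeys(META_KEYS, 0.0)

def merge_nominations(cart, personal, assoc, markov, global_fill):
    """Union of generator nominations plus per-item meta-scores.

    personal / assoc / markov map product_id -> generator score;
    global_fill is a list of popular product ids used to pad the pool.
    """
    in_cart = set(cart)
    meta = {}
    generators = (
        ("from_personal", "s2_score", personal),
        ("from_assoc", "s3_score", assoc),
        ("from_markov", "s4_score", markov),
    )
    for flag, score_key, scored in generators:
        for pid, score in scored.items():
            if pid in in_cart:
                continue
            m = meta.setdefault(pid, _blank_meta())
            m[score_key] = score
            m[flag] = 1.0
            m["vote_count"] += 1      # one vote per nominating generator

    # ASSUMPTION: gap-fill items count as a single vote from the global pool.
    for pid in global_fill:
        if pid not in in_cart and pid not in meta:
            m = _blank_meta()
            m["from_global"] = 1.0
            m["vote_count"] = 1.0
            meta[pid] = m

    return list(meta), meta

# Toy nominations (illustrative only).
cands, meta = merge_nominations(
    cart=[1],
    personal={2: 9.0, 3: 2.0, 1: 5.0},
    assoc={3: 0.6},
    markov={4: 0.2},
    global_fill=[1, 2, 5],
)
print(cands)
print({pid: meta[pid]["vote_count"] for pid in cands})
```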

# Smoke test

_u0 = eval_sample['user_id'].iloc[0]

_c0 = eval_sample['cart_sequence'].iloc[0]

print('✅ S5 ready')

print(f"User # {_u0}, Cart- {_c0}")

print(f' Sample recs: {[pid_to_name.get(p,"?")[:25] for p in s5_lgbm(_u0, _c0, K = 3)]}')

✅ S5 ready

User # 189055, Cart- [19767, 39001, 17419, 28413, 5258, 15200, 30406]

Sample recs: ['Soda', 'Frozen Organic Mango Chun', 'Bean & Cheese Burrito']

# Set constants for the pool

S6_PERSONAL_CAP = 100

S6_ASSOC_CAP = 40

S6_MARKOV_CAP = 60

S6_GLOBAL_FILL = 500

# Define feature columns

S6_META_COLS = ['s2_score', 's3_score', 's4_score', 'vote_count', 'from_personal', 'from_assoc', 'from_markov', 'from_global',]

print(f'Max pool size (before dedup) : '

f'{S6_PERSONAL_CAP + S6_ASSOC_CAP + S6_GLOBAL_FILL} (from_global feature downweights these in the ranker)')

Max pool size (before dedup) : 640 (from_global feature downweights these in the ranker)

# Sanity check

_cands, _meta = build_candidate_pool(_u0, _c0)

vote_dist = pd.Series([_meta[p]['vote_count'] for p in _cands]).value_counts().sort_index()

print(f'Pool size for user {_u0} : {len(_cands)}')

print(f'Vote distribution:\n{vote_dist.to_string()}')

Pool size for user 189055 : 518

Vote distribution:

1 510

2 8

8. Stage 2 — Meta-ranker training

A new LightGBM model is trained on the enriched feature set: the 19 base S5 features plus the 8 S6 meta-features (27 total). Using a separate model (rather than the S5 model) allows the ranker to learn the value of vote_count and the individual generator scores from training examples that include candidates from S3 and S4 — candidates that were never in S5’s training pool.

S6 is a two-stage generate-then-rerank pipeline:

    cart_sequence
         │
    [Cold-start gate] ─── cold ───► S1+S3 blend ──► output
         │ warm
         ▼
    [Stage 1: Candidate pool]
      S2 personal (top-100 by reorder_score) ─────┐
      S3 association rules (top-40)          ─────┤──► union + meta-scores per item
      Global fill (top-500 gap fill)         ─────┘
         │
    [Stage 2: S6 LightGBM meta-ranker]
      27 features = 19 base (S5) + 8 meta (S6)
         │
    top-K output

Why S6 should outperform S5 in theory. S5’s pool is personal history + global popularity. S6’s pool additionally includes S3 cross-sell candidates — items the user hasn’t bought before but that frequently co-occur with what’s currently in their cart. The meta-ranker also receives s2_score, s3_score, s4_score, and vote_count as features, encoding why each candidate was nominated. An item nominated by both S2 and S3 (vote_count=2) carries stronger signal than one nominated by either alone.

Why S6_GLOBAL_FILL=500 is essential. In an earlier iteration from_global showed a lift of 0.19× and was misread as a reason to set GLOBAL_FILL=0. The correct interpretation: the ranker already learns to score global fill items low — that is the feature doing its job. Removing them gives S6 only ~80 candidates versus S5’s ~524, creating a hard recall ceiling before ranking even begins. GLOBAL_FILL=500 restores parity with S5’s pool depth.

S6 meta-ranker has lower ROC-AUC than S5 (0.8312 vs. 0.9085): is this a problem? Not necessarily. The two AUCs are measured on different candidate pools, so they are not directly comparable. S6's pool adds candidates nominated by the other generators, and cold-start users are excluded from its training data, which changes the mix of easy and hard examples the classifier has to separate. A lower AUC on a more diverse pool therefore does not by itself indicate a worse ranker; the top-K metrics on the shared evaluation sample are the relevant comparison.

The 8 meta-features and their lift values (mean for positives divided by mean for negatives, taken from the feature-quality check logged in this notebook):

| Feature | Lift | Interpretation |
|---|---|---|
| s2_score | 39.43× | Dominant signal: personal reorder history |
| s3_score | 10.12× | Association cross-sell signal |
| s4_score | 3.31× | Markov sequential signal (as feature, not nominator) |
| vote_count | 1.17× | Cross-strategy consensus |
| from_personal | 8.31× | Nominated by S2 |
| from_assoc | 7.61× | Nominated by S3 |
| from_markov | 2.21× | Markov-nominated items are mildly predictive as nominees |
| from_global | 0.23× | Global fill items are rarely in the true basket; the ranker discounts them |

```python
# Build the master feature list: base S5 features + S6 meta-features
S6_FEATURE_COLS = FEATURE_COLS + S6_META_COLS

print(f'Base S5 features : {len(FEATURE_COLS)}')
print(f'S6 meta-features : {len(S6_META_COLS)} → {S6_META_COLS}')
print(f'Total S6 features: {len(S6_FEATURE_COLS)}')
```

```text
Base S5 features : 19
S6 meta-features : 8 → ['s2_score', 's3_score', 's4_score', 'vote_count', 'from_personal', 'from_assoc', 'from_markov', 'from_global']
Total S6 features: 27
```

```python
# Build the S6 candidates dataframe
CHUNK_SIZE = 200  # users processed per chunk before writing to disk

train_pool_s6 = (train_fold
                 .sample(n=min(4000, len(train_fold)), random_state=100)
                 .reset_index(drop=True))
del train_fold
gc.collect()

print(f'S6 training pool : {len(train_pool_s6):,} users '
      f'(chunk size = {CHUNK_SIZE})')
print('Building candidates… (~1–3 min)')

t0 = time.time()
temp_dir = tempfile.mkdtemp()
chunk_files = []

for chunk_start in range(0, len(train_pool_s6), CHUNK_SIZE):
    chunk_rows = []
    chunk = train_pool_s6.iloc[chunk_start:chunk_start + CHUNK_SIZE]

    for _, row in chunk.iterrows():
        uid = int(row['user_id'])
        cart_seq = row['cart_sequence']
        true_set = row['true_items']

        # Cold-start users go to the fallback blend, not the meta-ranker
        if is_cold_start(uid):
            continue

        candidates, meta = build_candidate_pool(uid, cart_seq)
        cart_sz = len(cart_seq)

        # Markov score: summed transition probability from every cart item
        markov_sc = defaultdict(float)
        for prev in cart_seq:
            for nxt, p in trans_prob.get(prev, {}).items():
                markov_sc[nxt] += p

        # Association score: summed rule score from every cart item
        assoc_sc = defaultdict(float)
        for ant_pid in cart_seq:
            for con_pid, sc in assoc_by_pid.get(ant_pid, {}).items():
                assoc_sc[con_pid] += sc

        pos_p = pos_prob.get(min(cart_sz, MAX_POS - 1), {})

        # One row per (user, candidate) with label, context and meta-features
        for pid in candidates:
            m = meta.get(pid, {})
            chunk_rows.append({
                'user_id': uid,
                'product_id': int(pid),
                'label': int(pid in true_set),
                'cart_size': cart_sz,
                'markov_score': float(markov_sc.get(pid, 0.0)),
                'assoc_score': float(assoc_sc.get(pid, 0.0)),
                'pos_prob_next': float(pos_p.get(pid, 0.0)),
                's2_score': float(m.get('s2_score', 0.0)),
                's3_score': float(m.get('s3_score', 0.0)),
                's4_score': float(m.get('s4_score', 0.0)),
                'vote_count': int(m.get('vote_count', 0)),
                'from_personal': int(m.get('from_personal', 0)),
                'from_assoc': int(m.get('from_assoc', 0)),
                'from_markov': int(m.get('from_markov', 0)),
                'from_global': int(m.get('from_global', 0)),
            })

    if not chunk_rows:
        continue

    chunk_df = (pd.DataFrame(chunk_rows)
                .merge(prod_feat, on='product_id', how='left')
                .merge(user_feat_s5, on='user_id', how='left')
                .merge(up_feat, on=['user_id', 'product_id'], how='left')
                .fillna(0))

    # Write to disk immediately so the chunk does not stay in RAM
    fpath = os.path.join(temp_dir, f'chunk_{chunk_start:05d}.parquet')
    chunk_df.to_parquet(fpath, index=False)
    chunk_files.append(fpath)
    del chunk_rows, chunk_df
    gc.collect()

    users_done = min(chunk_start + CHUNK_SIZE, len(train_pool_s6))
    print(f' {users_done}/{len(train_pool_s6)} users '
          f'| chunks written: {len(chunk_files)} '
          f'| {time.time()-t0:.0f}s')

# Read the chunks back from disk and concatenate them into one dataframe
print('Assembling final dataframe from disk…')
s6_cand_df = pd.concat(
    [pd.read_parquet(f) for f in chunk_files],
    ignore_index=True
)

# Clean up temp files
for f in chunk_files:
    os.remove(f)
os.rmdir(temp_dir)

# Any feature column still missing after the merges is filled with zeros
for fc in S6_FEATURE_COLS:
    if fc not in s6_cand_df.columns:
        s6_cand_df[fc] = 0.0

print(f'\n Rows : {len(s6_cand_df):,}')
print(f' Positives: {int(s6_cand_df["label"].sum()):,} '
      f'({s6_cand_df["label"].mean():.2%})')
print(f' Time : {time.time()-t0:.1f}s')
```

```text
S6 training pool : 4,000 users (chunk size = 200)
Building candidates… (~1–3 min)
 200/4000 users | chunks written: 1 | 7s
 400/4000 users | chunks written: 2 | 12s
 600/4000 users | chunks written: 3 | 19s
 800/4000 users | chunks written: 4 | 24s
 1000/4000 users | chunks written: 5 | 30s
 1200/4000 users | chunks written: 6 | 36s
 1400/4000 users | chunks written: 7 | 42s
 1600/4000 users | chunks written: 8 | 48s
 1800/4000 users | chunks written: 9 | 54s
 2000/4000 users | chunks written: 10 | 60s
 2200/4000 users | chunks written: 11 | 66s
 2400/4000 users | chunks written: 12 | 72s
 2600/4000 users | chunks written: 13 | 78s
 2800/4000 users | chunks written: 14 | 84s
 3000/4000 users | chunks written: 15 | 91s
 3200/4000 users | chunks written: 16 | 97s
 3400/4000 users | chunks written: 17 | 104s
 3600/4000 users | chunks written: 18 | 110s
 3800/4000 users | chunks written: 19 | 117s
 4000/4000 users | chunks written: 20 | 123s
Assembling final dataframe from disk…

 Rows : 1,925,897
 Positives: 17,261 (0.90%)
 Time : 124.2s
```

```python
# Check feature quality: compare each meta-feature's mean for positives
# (label 1) and negatives (label 0); lift is the ratio of the two means
print('Meta-feature mean values — positives vs negatives')
print(f'{"Feature":<16} {"Mean (1)":>10} {"Mean (0)":>10} {"Lift":>7}')
print('─' * 50)

for col in S6_META_COLS:
    if col not in s6_cand_df.columns:
        continue
    m1 = s6_cand_df.loc[s6_cand_df['label'] == 1, col].mean()
    m0 = s6_cand_df.loc[s6_cand_df['label'] == 0, col].mean()
    lift = m1 / m0 if m0 > 0 else float('inf')
    print(f' {col:<16} {m1:10.4f} {m0:10.4f} {lift:7.2f}×')

print('\nLift > 1.0 → feature is positively predictive of relevance.')
```

```text
Meta-feature mean values — positives vs negatives
Feature            Mean (1)   Mean (0)    Lift
──────────────────────────────────────────────────
 s2_score             0.8543     0.0217   39.43×
 s3_score             0.0158     0.0016   10.12×
 s4_score             0.0084     0.0025    3.31×
 vote_count           1.1821     1.0138    1.17×
 from_personal        0.7185     0.0864    8.31×
 from_assoc           0.0311     0.0041    7.61×
 from_markov          0.2420     0.1093    2.21×
 from_global          0.1905     0.8140    0.23×

Lift > 1.0 → feature is positively predictive of relevance.
```

```python
# Train the meta model
X6 = s6_cand_df[S6_FEATURE_COLS].values
y6 = s6_cand_df['label'].values
g6 = s6_cand_df['user_id'].values

# Split by user so that no user's candidates land in both train and validation
gss6 = GroupShuffleSplit(n_splits=1, test_size=0.15, random_state=100)
tr6, va6 = next(gss6.split(X6, y6, g6))

s6_model = lgb.LGBMClassifier(
    n_estimators=500,
    learning_rate=0.05,
    num_leaves=31,
    min_child_samples=50,
    subsample=0.8,
    colsample_bytree=0.8,
    scale_pos_weight=1,
    n_jobs=-1,
    random_state=100,
    verbose=-1,
)

print('Training S6 meta-ranker…')
t0 = time.time()

# Stop once validation loss has not improved for 50 rounds; log every 100
s6_model.fit(
    X6[tr6], y6[tr6],
    eval_set=[(X6[va6], y6[va6])],
    callbacks=[lgb.early_stopping(50, verbose=False), lgb.log_evaluation(100)],
)
print(f'Done in {time.time()-t0:.1f}s | best iter: {s6_model.best_iteration_}')

va_pred6 = s6_model.predict_proba(X6[va6])[:, 1]
print(f'Val ROC-AUC : {roc_auc_score(y6[va6], va_pred6):.4f}')
print(f'Val Avg-Prec : {average_precision_score(y6[va6], va_pred6):.4f}')
```

```text
Training S6 meta-ranker…
[100] valid_0's binary_logloss: 0.0380691
Done in 21.3s | best iter: 113
Val ROC-AUC : 0.9098
Val Avg-Prec : 0.1949
```
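The train/validation split used for this model is grouped by `user_id`, so all candidate rows for a given user fall on one side. If rows were split at random instead, the same user’s history features would appear on both sides and the validation scores would look better than they should. A rough standard-library sketch of the idea follows. It is not scikit-learn’s implementation, and `group_split` is a hypothetical helper.

```python
import random


def group_split(groups, test_size=0.15, seed=100):
    """Return (train_idx, val_idx) with every group entirely on one side."""
    unique = sorted(set(groups))
    rng = random.Random(seed)
    rng.shuffle(unique)
    # Hold out a share of the groups (users), not a share of the rows
    n_val = max(1, round(len(unique) * test_size))
    val_groups = set(unique[:n_val])
    train_idx = [i for i, g in enumerate(groups) if g not in val_groups]
    val_idx = [i for i, g in enumerate(groups) if g in val_groups]
    return train_idx, val_idx


# One entry per candidate row; the value is the user that row belongs to
user_ids = [1, 1, 1, 2, 2, 3, 3, 3, 4, 5, 5, 6, 6, 7, 8, 8, 9, 10, 10, 10]
tr, va = group_split(user_ids)

train_users = {user_ids[i] for i in tr}
val_users = {user_ids[i] for i in va}
print(train_users & val_users)  # set(): no user appears on both sides
```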

```python
# Feature importance of the S6 meta-ranker: base S5 features vs. meta-features
fi6 = (pd.Series(s6_model.feature_importances_, index=S6_FEATURE_COLS)
         .sort_values(ascending=False))

fig, axes = plt.subplots(1, 2, figsize=(18, 6))

# Left: top-15 overall, coloured by feature family
ax = axes[0]
top15 = fi6.head(15)
colors = ['#8B5CF6' if c in S6_META_COLS else '#60A5FA' for c in top15.index]
ax.barh(top15.index[::-1], top15.values[::-1], color=colors[::-1], edgecolor='white')
ax.set_title('S6 Feature Importance — top 15', fontsize=13, fontweight='bold')
ax.set_xlabel('Importance')
legend_items = [mpatches.Patch(color='#8B5CF6', label='S6 meta-feature (new)'),
                mpatches.Patch(color='#60A5FA', label='Base S5 feature')]
ax.legend(handles=legend_items, loc='lower right', fontsize=9)

# Right: meta-features only, with the importance value printed beside each bar
ax2 = axes[1]
meta_imp = fi6[S6_META_COLS].sort_values(ascending=False)
bars = ax2.barh(meta_imp.index[::-1], meta_imp.values[::-1],
                color='#8B5CF6', edgecolor='white')
for bar, val in zip(bars, meta_imp.values[::-1]):
    ax2.text(bar.get_width() + 0.3, bar.get_y() + bar.get_height() / 2,
             f'{val:.0f}', va='center', fontsize=9)
ax2.set_title('S6 Meta-feature Importance', fontsize=13, fontweight='bold')
ax2.set_xlabel('Importance')

plt.suptitle('Feature Importance', fontsize=14, y=1.01)
plt.tight_layout()
plt.show()
```

# XGBoost (S7) feature importance — side-by-side S5 LGB vs S7 XGB

fig2, axes2 = plt.subplots(1, 2, figsize=(18, 6))

# S5 LightGBM

ax3 = axes2[0]

fi5_top = fi5.head(15)

ax3.barh(fi5_top.index[::-1], fi5_top.values[::-1], color='#A78BFA', edgecolor='white')

ax3.set_title('S5 LightGBM — Top 15 Features', fontsize=13, fontweight='bold')