The Data Guy’s Portfolio:

A showcase of data science experiments and notebooks to highlight important concepts and methodology

Hi!

I am the data guy and I am a data scientist with a passion for big data and machine learning.

In my free time, I love to undertake popular experiments in data science to sharpen my skills and knowledge base. I have documented that journey here in python using a series of informative Jupyter notebooks. Feel free to explore them in the blog!

🌌 Getting Started- The Data Science Galaxy

Welcome to the universe of modern data science which spans mathematics, machine learning, engineering, and production systems.

For more information, please see the following -

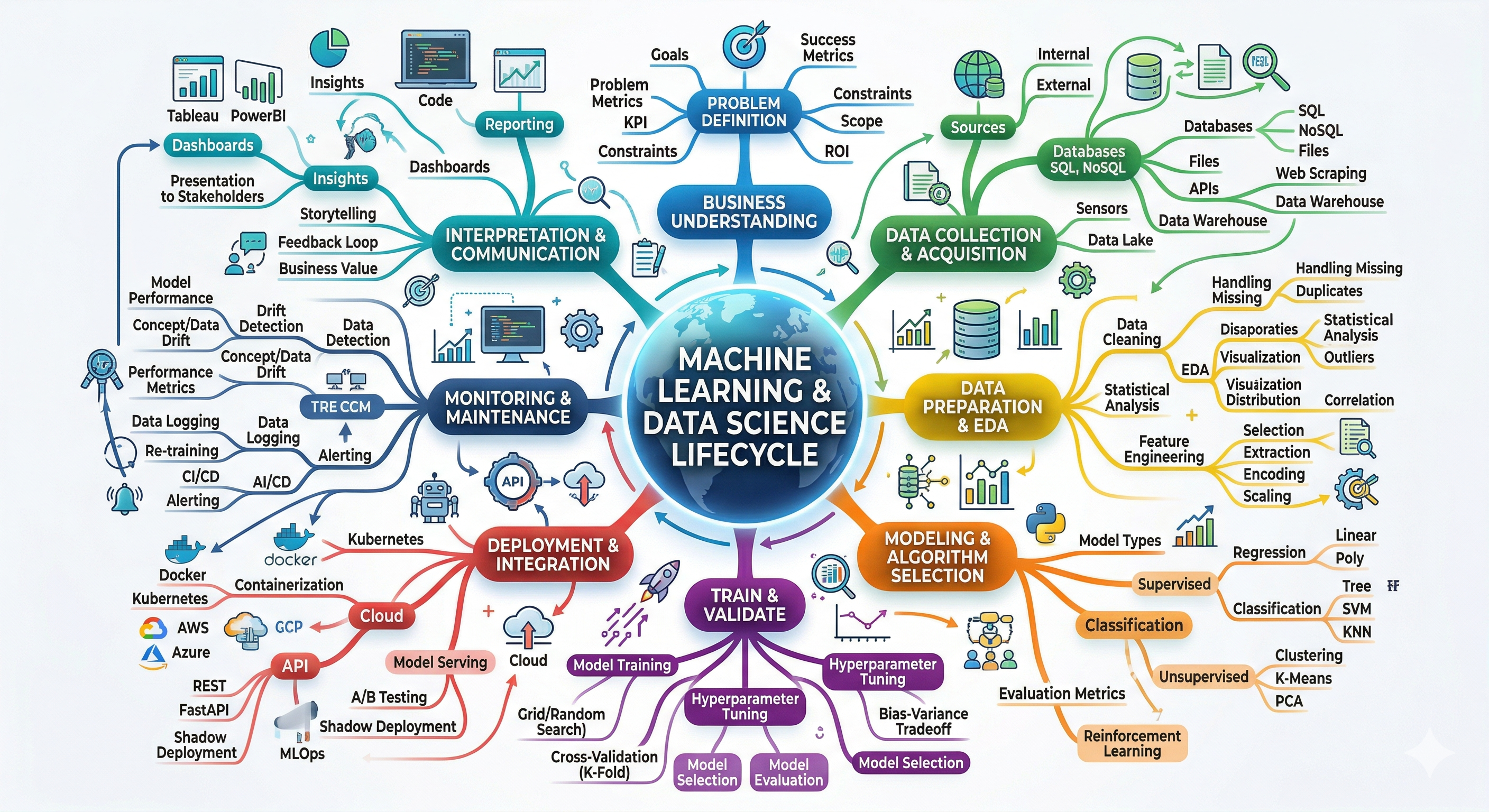

🌌 Data Science Ecosystem & Machine Learning Pipeline

From Raw Data to Intelligent Systems

🧠 What is Data Science?

Data science transforms raw data into insights, predictions, and intelligent systems by combining statistics, computing, and domain knowledge.

Field Purpose ——————— —————————– 📊 Statistics Understanding uncertainty 💻 Computer Science Building scalable systems 🤖 Machine Learning Predictive modeling 🧠 Domain Expertise Solving real-world problems

🌍 The Data Science Pipeline

Data Sources

↓

Data Engineering

↓

Exploratory Data Analysis

↓

Feature Engineering

↓

Machine Learning

↓

Model Evaluation

↓

Deployment

↓

Monitoring

↓

Retraining

Modern machine learning systems operate as continuous feedback loops.

📦 Data Sources

Common origins of data:

- APIs

- Databases

- Web scraping

- Sensors / IoT devices

- Logs

- Financial transactions

- Public datasets

Example data types:

User activity logs

Medical records

Financial transactions

Satellite imagery

Social media data

🏗 Data Engineering

Data engineering prepares raw data for analysis.

Typical tasks:

- ETL pipelines (Extract, Transform, Load)

- Data cleaning

- Handling missing values

- Data validation

- Data warehousing

Common tools:

Python

SQL

Apache Spark

Airflow

Kafka

Hadoop

🔍 Exploratory Data Analysis (EDA)

EDA helps analysts understand patterns within data.

Typical steps:

Load dataset

Inspect features

Visualize distributions

Analyze correlations

Identify anomalies

Generate hypotheses

Visualization libraries:

Matplotlib

Seaborn

Plotly

Altair

🧬 Feature Engineering

Feature engineering converts raw data into predictive signals.

Examples:

Raw Feature Engineered Feature —————— ————————- Timestamp Day of week Purchase history Customer lifetime value Text TF‑IDF vectors Images CNN embeddings

Common operations:

Scaling

Encoding

Aggregation

Dimensionality reduction

Text vectorization

🤖 Machine Learning

Machine learning algorithms learn patterns from historical data.

Supervised Learning

Examples:

Spam detection

Fraud detection

Medical diagnosis

House price prediction

Algorithms:

Linear Regression

Logistic Regression

Random Forest

Gradient Boosting

Neural Networks

Unsupervised Learning

Examples:

Customer segmentation

Anomaly detection

Topic modeling

Algorithms:

K-Means

DBSCAN

Hierarchical Clustering

PCA

Autoencoders

🧠 Deep Learning

Deep learning uses multi‑layer neural networks to learn complex patterns.

Applications:

Computer vision

Natural language processing

Speech recognition

Generative AI

Frameworks:

TensorFlow

PyTorch

Keras

JAX

📏 Model Evaluation

Models must be validated before deployment.

Classification Metrics

Accuracy

Precision

Recall

F1 Score

ROC-AUC

Regression Metrics

MAE

MSE

RMSE

R²

Validation workflow:

Train/Test Split

Cross Validation

Hyperparameter Tuning

Final Model Selection

🚀 Model Deployment

Deployment methods:

REST APIs

Batch pipelines

Real-time inference

Mobile / Edge AI

Common deployment stack:

FastAPI

Docker

Kubernetes

AWS / GCP / Azure

Example architecture:

User Request

↓

API Gateway

↓

Model Service

↓

Prediction

↓

Response

📡 Monitoring & MLOps

Machine learning models degrade over time due to data drift.

Challenges:

Data drift

Concept drift

Model decay

Latency issues

Monitoring tools:

MLflow

Weights & Biases

Prometheus

Grafana

EvidentlyAI

🔁 Continuous ML Lifecycle

Raw Data

↓

Data Cleaning

↓

Feature Engineering

↓

Model Training

↓

Evaluation

↓

Deployment

↓

Monitoring

↓

Retraining

This loop powers modern AI-driven systems.

🌐 Data Science Technology Stack

Programming

Python

R

Julia

Scala

Data Processing

Pandas

NumPy

Apache Spark

Dask

Machine Learning

Scikit-learn

TensorFlow

PyTorch

XGBoost

LightGBM

Data Storage

PostgreSQL

MongoDB

Snowflake

BigQuery

Redshift

Visualization

Matplotlib

Plotly

Tableau

Power BI

🌍 Real‑World Applications

Netflix → Recommendation systems

Amazon → Demand forecasting

Tesla → Autonomous driving

Google → Search ranking

Banks → Fraud detection

Hospitals → Disease prediction

🧭 Roles in the Data Science Ecosystem

Role Focus —————- —————————— Data Engineer Data infrastructure Data Analyst Insights and reporting Data Scientist Modeling and experimentation ML Engineer Production ML systems AI Researcher Algorithm development

Data science is a complete ecosystem combining:

Engineering

Statistics

Machine Learning

Software Development

Domain Expertise

Together these disciplines transform raw data into intelligent systems.